Session 7-9

... In statistics, you can analyze data based on a whole population, but more often you deal with sample data drawn from a given population. In this case, you need a decision rule that tells you if the values of the parameters of your sample-based linear regression model are likely to be close to the ‘t ...

... In statistics, you can analyze data based on a whole population, but more often you deal with sample data drawn from a given population. In this case, you need a decision rule that tells you if the values of the parameters of your sample-based linear regression model are likely to be close to the ‘t ...

Note

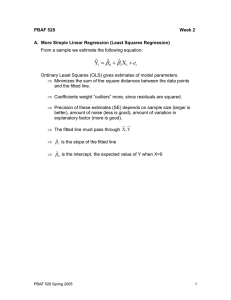

... OLS (that is, minimizing the squared errors) gives us ’s that minimize RSS (keeps the residuals as small as possible) thus gives largest R2 There is no simple method for deciding how high R2 has to be for a satisfactory and useful fit. Cannot use R2 to compare models with different dependent ...

... OLS (that is, minimizing the squared errors) gives us ’s that minimize RSS (keeps the residuals as small as possible) thus gives largest R2 There is no simple method for deciding how high R2 has to be for a satisfactory and useful fit. Cannot use R2 to compare models with different dependent ...

Slide 1

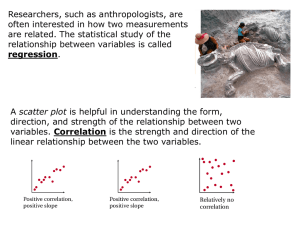

... should be. We can combine all the residuals into a single measure of accuracy by adding their squares. (We square the residuals in part to make them all positive.) The sum of the squares of the residuals is called the sum-of-squares error, SSE. Smaller values of SSE indicate more accurate models. ...

... should be. We can combine all the residuals into a single measure of accuracy by adding their squares. (We square the residuals in part to make them all positive.) The sum of the squares of the residuals is called the sum-of-squares error, SSE. Smaller values of SSE indicate more accurate models. ...

IPPTChap004

... expected value of Y for each level of X True (or actual) underlying relation between Y and X is unknown to the researcher but is to be discovered by analyzing the sample data Random error term ~ Unobservable term added to a regression model to capture the effects of all the minor, unpredictable ...

... expected value of Y for each level of X True (or actual) underlying relation between Y and X is unknown to the researcher but is to be discovered by analyzing the sample data Random error term ~ Unobservable term added to a regression model to capture the effects of all the minor, unpredictable ...

Basic principles of probability theory

... variance assumptions (number 3) can be removed. Then the treatments becomes a little bit more complicated. Note that for general solution, normal distribution of errors assumption is not used. This assumption is necessary to design test statistics. If this assumption breaks down then we can use boot ...

... variance assumptions (number 3) can be removed. Then the treatments becomes a little bit more complicated. Note that for general solution, normal distribution of errors assumption is not used. This assumption is necessary to design test statistics. If this assumption breaks down then we can use boot ...

PS 170A: Introductory Statistics for Political Science and Public Policy

... variables can be recoded into a set of binary “dummy” variables taking values 0/1. e.g. White/Black/Hispanic/Asian (Why we don’t want to use the multiple valued variable “race” in the regression model, if it’s coded say 1,2,3,4?) If there are m categories, we use m-1 dummies in the model, since the ...

... variables can be recoded into a set of binary “dummy” variables taking values 0/1. e.g. White/Black/Hispanic/Asian (Why we don’t want to use the multiple valued variable “race” in the regression model, if it’s coded say 1,2,3,4?) If there are m categories, we use m-1 dummies in the model, since the ...

Mid Term Exam

... For the following questions please give a True or False answer with one or two sentences in ...

... For the following questions please give a True or False answer with one or two sentences in ...

Linear regressions are the most popular model in studies of hedonic

... The sample used consist of 110 observations obtained through interviews that we carry out to the estate agency in the city of Vigo (Spain), from March to May 1998. For each house, we recollected information about his renting price and characteristics such as structural attributes and neighbourhood c ...

... The sample used consist of 110 observations obtained through interviews that we carry out to the estate agency in the city of Vigo (Spain), from March to May 1998. For each house, we recollected information about his renting price and characteristics such as structural attributes and neighbourhood c ...

Chapter 12 Simple Linear Regression

... 1. The error e is a random variable with mean of zero. 2. The variance of e , denoted by 2, is the same for all values of the independent variable. 3. The values of e are independent. 4. The error e is a normally distributed random variable. ...

... 1. The error e is a random variable with mean of zero. 2. The variance of e , denoted by 2, is the same for all values of the independent variable. 3. The values of e are independent. 4. The error e is a normally distributed random variable. ...

NATIONAL UNIVERSITY OF SINGAPORE NUS

... “Most companies today have plenty of data. However, creating intelligence and gleaning real insights from this data is what continues to elude organizations.”—Competing on Analytics: The New Science of Winning. ...

... “Most companies today have plenty of data. However, creating intelligence and gleaning real insights from this data is what continues to elude organizations.”—Competing on Analytics: The New Science of Winning. ...

Introduction to the Summer School

... – develop parsimonious models – provide a basis for further data collection through surveys or experiments ...

... – develop parsimonious models – provide a basis for further data collection through surveys or experiments ...

Linear regression

In statistics, linear regression is an approach for modeling the relationship between a scalar dependent variable y and one or more explanatory variables (or independent variables) denoted X. The case of one explanatory variable is called simple linear regression. For more than one explanatory variable, the process is called multiple linear regression. (This term should be distinguished from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable.)In linear regression, data are modeled using linear predictor functions, and unknown model parameters are estimated from the data. Such models are called linear models. Most commonly, linear regression refers to a model in which the conditional mean of y given the value of X is an affine function of X. Less commonly, linear regression could refer to a model in which the median, or some other quantile of the conditional distribution of y given X is expressed as a linear function of X. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of y given X, rather than on the joint probability distribution of y and X, which is the domain of multivariate analysis.Linear regression was the first type of regression analysis to be studied rigorously, and to be used extensively in practical applications. This is because models which depend linearly on their unknown parameters are easier to fit than models which are non-linearly related to their parameters and because the statistical properties of the resulting estimators are easier to determine.Linear regression has many practical uses. Most applications fall into one of the following two broad categories: If the goal is prediction, or forecasting, or error reduction, linear regression can be used to fit a predictive model to an observed data set of y and X values. After developing such a model, if an additional value of X is then given without its accompanying value of y, the fitted model can be used to make a prediction of the value of y. Given a variable y and a number of variables X1, ..., Xp that may be related to y, linear regression analysis can be applied to quantify the strength of the relationship between y and the Xj, to assess which Xj may have no relationship with y at all, and to identify which subsets of the Xj contain redundant information about y.Linear regression models are often fitted using the least squares approach, but they may also be fitted in other ways, such as by minimizing the ""lack of fit"" in some other norm (as with least absolute deviations regression), or by minimizing a penalized version of the least squares loss function as in ridge regression (L2-norm penalty) and lasso (L1-norm penalty). Conversely, the least squares approach can be used to fit models that are not linear models. Thus, although the terms ""least squares"" and ""linear model"" are closely linked, they are not synonymous.