Mining Frequent Patterns with Counting Inference

... patterns in a levelwise manner. During each iteration corresponding to a level, a set of candidate patterns is created by joining the frequent patterns discovered during the previous iteration, the supports of all candidate patterns are counted and infrequent ones are discarded. The most prominent a ...

Powerful Forecasting with Excel

... (See Appendix C on how to access Excel 2007 and Excel 2010 Solver) ...

Using Interviewer Random Effects to Calculate Unbiased HIV

... HIV status (Clark and Houle, 2012). In the standard model, which we refer to as a fixed effects model, and which has previously been used to adjust for missing data in HIV surveys, the selection variable (interviewer identity) takes the form of a series of binary indicator variables in the selection ...

HSC: A SPECTRAL CLUSTERING ALGORITHM

... data processing and analysis tool. Many clustering applications can be found in these fields, such as web mining, biological data analysis, social network analysis [1], etc. However, clustering is still an attractive and challenging problem. It is hard for any clustering method to give a reasonable p ...

Enhancements on Local Outlier Detection

... set too low, the groups of outlying objects will be wrongly identified as clusters. On the other hand, MinPts is also used to compute the density of each point, so if MinPts is set too high, some outliers near dense clusters may be misidentified as clustering points. We notice there are in fact two ...

Minimum spanning tree based split-and

... degrees of vertices. However, there may exist two or more vertices in Gmst(X0, 3) simultaneously having the maximum degree. In this case, the vertex with the minimum sum of weights of its edges is to be selected. After the K0 initial prototypes have been determined, K-means is applied. The seven su ...

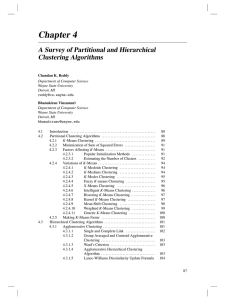

10ClusBasic

... as a sample of the underlying data generation mechanism to be analyzed. Easy to understand, same efficiency as algorithmic agglomerative clustering method, can handle partially observed data ...

3 - UdG

... 1. Introduction and History SEM make it possible to: Fit linear relationships among a large number of variables. Possibly more than one is dependent. Validate a measurement instrument. Quantify measurement error and prevent its biasing effect. Freely specify, constrain and test each possible ...

Clustering

... as a sample of the underlying data generation mechanism to be analyzed. Easy to understand, same efficiency as algorithmic agglomerative clustering method, can handle partially observed data ...

Clustering - upatras eclass

... Then probably you’ll say 20 clusters (each point defines its own). In terms of a dataset: you can view the same dataset from very different levels. Are you interested in big effects on your data (top-level view) or are you interested in fine-grained effects (lower levels)? ...

chapter 6 data mining

... It is common to have observations with missing values for one or more variables. The primary options for addressing missing data are: (1) discard observations with any missing values, (2) discard variable(s) with missing values, (3) fill-in missing entries with estimated values, or (4) apply a data ...

Expectation–maximization algorithm

In statistics, an expectation–maximization (EM) algorithm is an iterative method for finding maximum likelihood or maximum a posteriori (MAP) estimates of parameters in statistical models, where the model depends on unobserved latent variables. The EM iteration alternates between performing an expectation (E) step, which creates a function for the expectation of the log-likelihood evaluated using the current estimate for the parameters, and a maximization (M) step, which computes parameters maximizing the expected log-likelihood found in the E step. These parameter estimates are then used to determine the distribution of the latent variables in the next E step.
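
The E/M alternation described above can be illustrated with a minimal sketch for one common special case, a two-component univariate Gaussian mixture, where the latent variable is the component each point came from. The function names (`normal_pdf`, `log_likelihood`, `em_gmm_1d`), the min/max initialisation, the variance floor, and the synthetic data are all assumptions made for this illustration; they are not taken from the text.

```python
import math
import random


def normal_pdf(x, mean, var):
    """Density of a univariate Gaussian with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


def log_likelihood(data, weights, means, variances):
    """Observed-data log-likelihood of the mixture."""
    return sum(
        math.log(sum(w * normal_pdf(x, m, v)
                     for w, m, v in zip(weights, means, variances)))
        for x in data
    )


def em_gmm_1d(data, n_iter=50):
    """EM for a two-component 1-D Gaussian mixture (illustrative only)."""
    n = len(data)
    overall_mean = sum(data) / n
    overall_var = sum((x - overall_mean) ** 2 for x in data) / n

    # Crude initialisation (an assumption): start the components at the extremes.
    weights = [0.5, 0.5]
    means = [min(data), max(data)]
    variances = [overall_var, overall_var]
    history = []

    for _ in range(n_iter):
        # E step: posterior probability (responsibility) of each component
        # for each point, under the current parameter estimates.
        resp = []
        for x in data:
            joint = [w * normal_pdf(x, m, v)
                     for w, m, v in zip(weights, means, variances)]
            total = sum(joint)
            resp.append([j / total for j in joint])

        # M step: parameters that maximise the expected complete-data
        # log-likelihood, i.e. responsibility-weighted averages.
        for k in range(2):
            nk = sum(r[k] for r in resp)
            weights[k] = nk / n
            means[k] = sum(r[k] * x for r, x in zip(resp, data)) / nk
            var_k = sum(r[k] * (x - means[k]) ** 2 for r, x in zip(resp, data)) / nk
            variances[k] = max(var_k, 1e-6)  # floor guards against collapse

        history.append(log_likelihood(data, weights, means, variances))

    return weights, means, variances, history


# Synthetic data: two well-separated clusters around 0 and 5.
rng = random.Random(0)
data = [rng.gauss(0.0, 1.0) for _ in range(200)] + [rng.gauss(5.0, 1.0) for _ in range(200)]
weights, means, variances, history = em_gmm_1d(data)
print("weights:", weights)
print("means:", means)
print("variances:", variances)
```

The `history` list records the observed-data log-likelihood after each iteration; for plain maximum-likelihood EM this sequence should not decrease, which makes it a convenient sanity check on an implementation.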