
Transcript

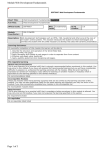

In this module you will learn how to use the Workload Replay web console to:

• Capture a workload on the production database
• Review the captured workload
• Prepare it for replaying on the test database
• Review the transformed workload before you replay it
• Replay the workload
• Create reports to compare and analyze the execution results

The tasks in this module are based on a query workload tuning scenario. In this scenario you want to analyze how applying InfoSphere Optim Query Tuner recommendations, such as creating new indexes, might improve the performance of applications that access this environment.

First, let’s review the capture and replay tasks that you will complete. These tasks are divided into two stages:

• Stage 1: Create a baseline. This first stage consists of the following steps:
  1. Capture and review a representative workload. This step in turn consists of the following sub-steps:
     A) Capture a representative workload in the source, or production, environment
     B) Review the captured workload
  2. Prepare and review the workload before you replay it. This step consists of the following sub-steps:
     A) Prepare to replay the workload in the test environment
     B) Review the replay-ready workload
  3. Replay the workload in the test environment to produce a baseline workload
  4. Create a comparison report to analyze and validate that the replayed baseline workload behaves like the original captured workload
• Stage 2: Assess the impact of changes. This second stage consists of the following steps:
  1. Apply query workload tuning recommendations in the test database
  2. Replay the workload again on the test database
  3. Create a comparison report that compares the accuracy and performance of the first baseline workload with the newly replayed workload, to determine the impact of the tuning

Let’s begin with the first capture step of stage 1.

(Stage 1 – Step 1A - Capturing)

Workloads are captured by the lightweight S-TAP software component.
This component is installed and configured on the DB2 server that hosts the source (or production) database. The S-TAP component captures inbound database traffic for the local and remote applications that access the database. It then transmits the information to the Workload Replay server. By default, all executed dynamic and static SQL statements are captured. You can filter the captured traffic to exclude traffic that originates from certain IP addresses.

Let’s now walk through the process of capturing a workload.

When you work with Workload Replay in real life, you will handle many workloads. To make them easy to manage, you can organize them in folders. Let’s create a folder in which to store the workloads that you create in this module. The new folder is displayed as a sibling of the Default folder. We will use this folder when we go through the capture and replay process.

You can specify whether you want to capture actual large object (LOB) or XML data values, or only the length information for these objects. Capturing LOB or XML data might significantly increase the amount of disk space required on the Workload Replay server, so use this option only when needed. In this example, we capture only the LOB and XML data length.

You can schedule the workload capture to start at a later time. In this example, we start the capture now and capture data for four minutes.

Only authorized user IDs are permitted to perform the capture and replay tasks. In this example, the user wr_u1 is authorized to capture workloads on the production database.

The workload capture process starts. A new Details tab opens to show the progress and status of the workload capture process. In this example, some application workload is running on the database, and the SQL traffic included in this workload is captured. After four minutes, the workload capture completes with 1426 captured SQL statements.
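The two capture options described above are IP-based filtering and capturing only the length of LOB or XML values. They can be illustrated with a short sketch. This is not how S-TAP is implemented. The `CapturedStatement` record, its fields, and the `filter_capture` helper are hypothetical names. The sketch also assumes that excluded sources are given as IP addresses or CIDR networks.

```python
from dataclasses import dataclass, replace
from ipaddress import ip_address, ip_network
from typing import Iterable, List, Optional


# Hypothetical record for one captured SQL execution (not the S-TAP format).
@dataclass(frozen=True)
class CapturedStatement:
    client_ip: str
    sql_text: str
    lob_value: Optional[bytes] = None  # actual LOB/XML value, if present
    lob_length: Optional[int] = None   # length of the LOB/XML value


def filter_capture(
    statements: Iterable[CapturedStatement],
    excluded_sources: Iterable[str],
    capture_lob_values: bool = False,
) -> List[CapturedStatement]:
    """Drop traffic from excluded sources; by default keep only LOB lengths."""
    # Accept single addresses ("10.0.0.5") or networks ("10.1.0.0/16").
    networks = [ip_network(src, strict=False) for src in excluded_sources]
    kept = []
    for stmt in statements:
        addr = ip_address(stmt.client_ip)
        if any(addr in net for net in networks):
            continue  # traffic from an excluded source is not captured
        if stmt.lob_value is not None:
            length = len(stmt.lob_value)
            # Always record the length; keep the value only if requested,
            # which saves disk space on the Workload Replay server.
            value = stmt.lob_value if capture_lob_values else None
            stmt = replace(stmt, lob_value=value, lob_length=length)
        kept.append(stmt)
    return kept
```

Excluding a whole subnet, for example `"10.0.0.0/24"`, drops every statement from that range. Any statement that carries a LOB value keeps only its `lob_length` unless `capture_lob_values=True`.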
Let’s close the capture progress details tab. A new workload in the “Captured” stage is displayed in the SQL Workloads grid.

(Stage 1 - Step 1B - Reviewing the captured workload)

You can review the captured workload before you replay it to make sure that it has been captured correctly. The capture report provides summarized information about unique SQL statements and transactions, as well as basic execution metrics.

Let’s create a Capture Report. The capture report includes three tabs. The Details tab displays information about the report generation.

Let’s review the captured SQL. The capture report identifies the number of unique SQL statements that were captured and how many times each unique statement was executed. For each unique SQL statement, the report displays the statement text, the execution count, the total response time, and the number of rows returned or updated. You can view the complete SQL statement by clicking the statement link. You can also export the report information to a delimited text file by clicking Export.

Let’s review information about the captured transactions. This report displays the unique transactions that were captured and the total number of executions for each unique transaction. For each transaction, the report displays the first SQL statement, the number of SQL statements in the transaction, and aggregated execution metrics. You can drill down into a transaction by clicking the link in the first column. This view shows the list of unique SQL statements in the transaction.

We have completed the workload capture step and validated the captured workload with a Capture Report. Let’s close the capture report and move on to the prepare step.

(Stage 1 - Step 2A – Preparing)

Before replaying the workload, we prepare the test environment in the second workflow step. Two major tasks must be completed:

• First, transform the workload into a replay-ready format.
• Second, prepare the replay environment so that the workload can run successfully. This includes creating database objects and loading data with your preferred database tools, such as Recovery Expert, High Performance Unload, Merge Backup, and Optim Test Data Management.

The replay environment should adequately mirror the source database server so that the captured workload can be replayed accurately. In this scenario, the replay database has already been prepared: the required database objects have been created, data has been loaded, and permissions have been granted. We can therefore focus on transforming the workload for replay.

Let’s walk through the process of transforming the workload.

If database objects reside in a different schema in the replay database than in the source database, you can specify schema mappings. In this example, the schemas are the same, so we leave the schema mapping blank.

The user IDs that executed the SQL statements on the source database were recorded when we captured the workload. The test environment might use different user IDs to hold the permissions needed to access or manipulate the database objects referenced in the workload. In that case, we need to map these user IDs. Two types of user credential mappings can be defined:

• Many-to-one: The entire workload is replayed using the Default replay user ID.
• One-to-one: SQL that is associated with a Captured User ID is replayed using the Replay Database User ID that you specify.

In this scenario, users db2_u1 and db2_u2 executed the workload on the source database. We will replay the workload on the test database using a single user, db2_u3. Click the Test button to validate the credentials that you entered for the mapped user ID.
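A transform step might resolve the two credential-mapping modes and the optional schema mapping for each captured statement. The sketch below shows one way to do this. The function names, the dictionary-based mappings, and the regular-expression schema rewrite are assumptions for illustration only, because this module does not describe the product's transform logic. A production-quality rewrite would need a real SQL parser rather than a regex.

```python
import re
from typing import Dict, Optional


def resolve_replay_user(
    captured_user: str,
    one_to_one: Dict[str, str],
    default_replay_user: Optional[str] = None,
) -> str:
    """Pick the replay user ID for SQL captured under `captured_user`."""
    # Many-to-one: a single default replay user replays the entire workload.
    if default_replay_user is not None:
        return default_replay_user
    # One-to-one: each captured user ID maps to its own replay user ID.
    if captured_user in one_to_one:
        return one_to_one[captured_user]
    raise KeyError(f"no replay user mapped for {captured_user!r}")


def apply_schema_mapping(sql: str, schema_map: Dict[str, str]) -> str:
    """Rewrite qualified names such as SRC.TABLE to TGT.TABLE (naive sketch)."""
    for source, target in schema_map.items():
        # Match the schema name only as a whole word followed by a dot.
        pattern = rf"\b{re.escape(source)}\."
        sql = re.sub(pattern, lambda _m: target + ".", sql, flags=re.IGNORECASE)
    return sql
```

With this module's values, `resolve_replay_user("db2_u1", {}, "db2_u3")` returns `"db2_u3"`, which matches the many-to-one mapping. An empty schema map leaves every statement unchanged, as in this example where the schemas are the same.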
To transform the workload, we need to specify the credentials of a user that holds the Can Capture Workload privilege in the source database. We also need the credentials of a user that holds the Can Replay Workload privilege in the target database. In this setup, user wr_u1 exists on both databases and holds those privileges.

The transform process completes. Let’s close the transform details tab. The workload is now ready to be replayed on the target database.

(Stage 1 - Step 2B – Reviewing Transform Report)

Let’s create a Transform Report to preview the prepared SQL workload that will be executed during the replay. Three tabs are displayed after the transform report is complete. The Details tab displays information about the report generation. The two other tabs display information similar to what was displayed in the Capture Report. The main difference is that this report displays the workload’s SQL statements with any optional schema mappings applied.

Let’s close the transform report and move on to the next step, replaying the workload.

(Stage 1 - Step 3 – Replaying)

When you start the replay process, the transformed workload is processed and replayed on the target system. The replay matches the original workload’s SQL concurrency and execution sequence. While the workload is replaying, inbound and outbound database traffic on the target database is captured. This captured workload is used to compare and validate how well the captured workload replayed on the replay system.

The goal of the initial replay on the test database is to produce a baseline that accurately reflects the characteristics of the captured workload. These characteristics include SQL return codes and the number of rows retrieved or updated. Ideally, the baseline matches these characteristics perfectly, but there might be differences. Possible causes include less powerful hardware or missing data in the replay database.
Changes should be introduced in the replay environment only after a good baseline has been established. You can then assess the impact of those changes on workload execution behavior.

Let’s walk through the process of replaying a workload.

Workload Replay provides the option to replay your workload with reduced, increased, or no wait time. In this example, we use the default option to replay at the rate of the original captured workload.

While the workload replay is in progress, all SQL database activity on the replay database is captured. This captured workload lets you compare the execution results and metrics with the original workload.

Two logs track the progress of the workload replay:

• The replay log lists the replay activities and events as they happen.
• The replay capture log lists the details of the concurrent workload capture that takes place as the workload is replayed.

The Replay Process Status provides information about the replay progress. The Replay Workload Success area provides summarized information about any replay accuracy issues. The workload replay completes with 100 percent matched statements.

Let’s close the Replay Details tab and move on to the next step, Compare and Analyze.

(Stage 1 - Step 4 – Comparing and analyzing)

You can analyze differences between two workload executions by comparing a replayed workload with the original captured workload. You can also compare subsequent replays of the workload. The reports provide information about SQL replay accuracy and performance. These are typically the aspects of the replayed workload that you need to check before making any changes to the replay environment.

Let’s walk through the process of creating a report that compares the replayed workload with the original captured workload, to help you analyze any differences. We choose the default option to compare the selected replayed workload with the original captured workload.
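The replay-rate option mentioned in the replay step offers reduced, increased, or no wait time. One way to picture it is as scaling the gaps between consecutive captured statements. The sketch below assumes that each captured statement carries a start timestamp in seconds, listed in capture order, and that a single speed factor applies to the whole workload. `replay_schedule` and `speed` are hypothetical names. The sketch also ignores per-connection concurrency, which the product preserves during replay.

```python
from typing import List, Optional


def replay_schedule(
    capture_times: List[float], speed: Optional[float] = 1.0
) -> List[float]:
    """Return replay start offsets, in seconds from the start of the replay.

    capture_times must be in capture order. speed=1.0 replays at the
    captured rate, speed > 1 shortens wait time, 0 < speed < 1 lengthens
    it, and speed=None removes wait time entirely.
    """
    if not capture_times:
        return []
    if speed is not None and speed <= 0:
        raise ValueError("speed must be positive, or None for no wait time")
    start = capture_times[0]
    offsets = []
    for t in capture_times:
        if speed is None:
            offsets.append(0.0)  # no wait time: start each one as soon as possible
        else:
            offsets.append((t - start) / speed)  # scale the original gap
    return offsets
```

For statements captured at 10 s, 12 s, and 16 s, the default rate yields offsets of 0, 2, and 6 seconds. Doubling the speed halves those gaps, which simulates higher SQL throughput.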
A new Details tab shows the report generation progress, the report log, and information about the two workloads that are being compared.

The Replay Results tab shows a summary of the replay accuracy. From here you can drill down into more granular reports if needed. In this example, the results show that 1426 SQL statements were executed and that they all matched perfectly, which gives 100 percent accuracy. The report consolidates all the matching SQL statements into a list of 23 unique SQL statements. No unmatched statements are listed. Unmatched statements might result from different return codes, or from different numbers of rows returned or updated during SQL execution. All the SQL statements in the baseline workload ran successfully when we replayed the workload. No other applications that could have introduced new SQL statements were running during the workload replay. The information shown at the bottom of the table is broken down by transaction. All transactions replayed successfully, and there are no new transactions.

Let’s look at the Matched SQL replays. This report shows the execution statistics for all the aggregated SQL statements that match between the baseline and replayed workloads. Each aggregated SQL statement represents multiple executions of the same statement in the two workloads. You can configure how statements are aggregated by specifying different grouping options. You can also export this list of matched SQL replays to an XML file for use with Optim Query Workload Tuner for performance tuning. You can drill down to see the details of each aggregated statement; we will do that later.

Let’s close this tab and look at replay performance in the Response Time tab. This view displays the captured metrics that you can use to compare the performance of the two workloads. The Cumulative Statement Response Time panel shows the time it took to process all SQL statements in the workload.
In this example, the response time for the two workloads is about the same. This metric does not include application think time. It also excludes time spent in other layers, such as network delay, that contribute to the overall processing time.

The SQL Executions Over Time panel shows information about the workload’s SQL throughput. Because there are no significant differences between the captured and replayed environments, we can expect the graphs to align fairly closely.

The Rows Returned Over Time panel indicates whether there are significant differences in data between the two environments. Because the replay database is an identical clone of the capture database, the two graphs align.

The workload level statistics panel quantifies the performance numbers as total improvement and regression:

• Response time difference identifies the change in the time it took to process the SQL during the replay.
• The report lists SQL processing that is on average 5% or more faster or slower as an improvement or a regression, respectively. In this example, out of the 23 unique statements, 19 have improved and 2 have regressed. You can configure the improvement and regression threshold for your reports.
• The elapsed time shows how long the workloads ran during capture and replay.

Because the replay accuracy is perfect and the performance is similar, we can accept this replayed workload as a valid baseline.

Before we move on to the next stage, let’s briefly look at the regressed SQL statements. This list shows the regressed statements, whose response time is longer in the replayed workload than in the original captured workload. Let’s sort this list by Percentage Total Response Time Change in descending order. Let’s drill down into the SQL statement with the highest regression.

This view shows the top-N improved and regressed SQL executions for this particular aggregated SQL statement. In the Statement Text section, any literal values are replaced with parameter markers.
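Replacing literals with parameter markers is what lets many executions collapse into one aggregated statement. In this example, 1426 executions collapse into 23 unique statements. The sketch below shows one simple way to perform that grouping. The regular expressions handle only single-quoted strings and numeric literals. `normalize_sql` and `aggregate` are hypothetical helpers, not the product's configurable grouping options.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Single-quoted strings (with '' escapes) and standalone numeric literals.
_STRING = re.compile(r"'(?:[^']|'')*'")
_NUMBER = re.compile(r"\b\d+(?:\.\d+)?\b")


def normalize_sql(sql: str) -> str:
    """Replace literal values with '?' parameter markers."""
    sql = _STRING.sub("?", sql)
    sql = _NUMBER.sub("?", sql)  # digits inside identifiers such as T1 are kept
    return " ".join(sql.split())  # ignore whitespace differences


def aggregate(
    executions: Iterable[Tuple[str, float, int]],
) -> Dict[str, Dict[str, float]]:
    """Group (sql, response_time_s, rows) tuples by normalized statement text."""
    stats: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"count": 0, "total_response_time": 0.0, "rows": 0}
    )
    for sql, response_time, rows in executions:
        entry = stats[normalize_sql(sql)]
        entry["count"] += 1
        entry["total_response_time"] += response_time
        entry["rows"] += rows
    return dict(stats)
```

Per unique statement, the result carries an execution count, a total response time, and a row count. These mirror the columns described for the capture report, though the product may compute them differently.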
Detailed execution information for the baseline and replay workloads is provided. Let’s drill down to the specific SQL execution that had the highest regressed response time percentage. This view shows the exact SQL with its literal values, together with detailed execution information. Information about the application that executed the statement is also provided.

Now that we have seen all the details of the regressed SQL, let’s close the comparison report and move on to the next stage.

You have just completed the first stage of the capture and replay workflow and produced a baseline workload. We will use this workload to analyze the result of tuning the replay database.

(Stage 2)

Next, we go through stage two by repeating the prepare, replay, and compare and analyze steps.

For the prepare step, only one task must be completed, because we already transformed the captured workload in stage one:

• Prepare the database server for replaying the workload.

For this task, Optim Query Workload Tuner was used to import the SQL statements that we exported earlier. Its advisors were then run to identify tuning recommendations, which were applied to the replay database. The replay database was also reset to undo any data changes made during the first workload replay. These steps are outside the scope of this learning course.

With the replay environment configured and the baseline workload ready to be replayed, you can now determine what impact the tuning actions might have on the workload.

Let’s walk through the process of replaying the workload. The replay process completes. Let’s close the Replay Details tab. We have just completed the replay step of stage two.

Next, let’s assess the accuracy and performance impact of the tuning. We create a new comparison report that compares the previous baseline workload with the newly replayed workload. The report shows a replay accuracy of 100 percent, with no unmatched SQL statements and no new statements.
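The accuracy figures in these comparison reports rest on a simple idea. A replayed execution matches its counterpart in the other workload when both produce the same return code and the same number of rows returned or updated. The sketch below computes matched, unmatched, and new statements under that assumption. It pairs executions through a hypothetical execution-ID key. The product's actual pairing rules are not described in this module.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Execution:
    return_code: int  # SQLCODE-style return code (assumed)
    rows: int         # rows returned or updated


def replay_accuracy(
    captured: Dict[str, Execution], replayed: Dict[str, Execution]
) -> Dict[str, float]:
    """Compare two workloads whose executions share hypothetical IDs."""
    # Matched: same ID present in both, with equal return code and row count.
    matched = sum(
        1 for key, exe in captured.items()
        if key in replayed and replayed[key] == exe
    )
    unmatched = len(captured) - matched  # missing or different result
    new = sum(1 for key in replayed if key not in captured)
    accuracy = 100.0 * matched / len(captured) if captured else 100.0
    return {
        "matched": matched,
        "unmatched": unmatched,
        "new": new,
        "accuracy_pct": accuracy,
    }
```

With every key matched and no extra keys, `accuracy_pct` is 100.0 and both `unmatched` and `new` are 0. That is the pattern both comparison reports in this module show.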
Let’s look at the replay performance. The report shows that the response time has improved by 92 percent, with the same number of SQL executions and rows over time. The elapsed time is the same for the baseline workload and the replayed workload. Based on these metrics, we confirm that performance has improved significantly as a result of the tuning.

Let’s look at the improved SQL statements. The summarized SQL list is sorted by total response time change to identify the SQL statements that benefited most from tuning. You can click any column name to sort the list by that value.

Let’s close the report details. We have just completed the Compare and Analyze step of stage two.

You have learned the basic capture and replay flow in two stages, using a simple migration and workload-tuning scenario. Each stage creates a set of workloads.

(Summary)

Workload Replay lets you capture production workloads and replay them in nonproduction environments. This reproduces realistic workloads for activities such as regression testing, stress and performance testing, capacity planning, and other diagnostics. You can increase or decrease the speed at which the workloads are replayed to simulate higher or lower SQL throughput.

The change-impact reports provide accuracy and performance analysis. They help IT teams quickly find potential problems before production deployment and ensure optimal performance. You can drill down into SQL statements and transactions. This detailed analysis lets organizations manage lifecycle events more efficiently without production impact. Such events include changes in hardware, workloads, databases, or applications.

For more information, refer to the links shown here.
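The improvement and regression figures come down to a percentage change in response time. Examples are the 5 percent threshold in stage 1 and the 92 percent improvement in stage 2. The sketch below assumes two things. First, the change is computed relative to the baseline. Second, the threshold applies to the change in average response time per execution, following the "on average" wording used for the stage 1 report. `pct_change` and `classify` are hypothetical helpers, and the product's exact formula may differ.

```python
from typing import Dict, List, Tuple

# statement text -> (baseline_total_s, baseline_count, replay_total_s, replay_count)
Stats = Dict[str, Tuple[float, int, float, int]]


def pct_change(baseline: float, replay: float) -> float:
    """Percentage change from baseline to replay (negative means faster)."""
    if baseline == 0:
        raise ValueError("baseline response time must be non-zero")
    return 100.0 * (replay - baseline) / baseline


def classify(
    stats: Stats, threshold_pct: float = 5.0
) -> Tuple[List[Tuple[str, float]], List[Tuple[str, float]]]:
    """Split statements into improved and regressed lists.

    A statement is improved or regressed when its average response time
    changes by at least threshold_pct percent; smaller changes are ignored.
    """
    improved, regressed = [], []
    for sql, (b_total, b_count, r_total, r_count) in stats.items():
        change = pct_change(b_total / b_count, r_total / r_count)
        if change <= -threshold_pct:
            improved.append((sql, change))
        elif change >= threshold_pct:
            regressed.append((sql, change))
    # Largest improvement first; largest regression first (descending).
    improved.sort(key=lambda item: item[1])
    regressed.sort(key=lambda item: item[1], reverse=True)
    return improved, regressed
```

As a purely illustrative example, suppose a total response time of 100 s in the baseline drops to 8 s after tuning. Then `pct_change(100.0, 8.0)` returns `-92.0`, which corresponds to a 92 percent improvement.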