Document

... An FPGA is a class of integrated circuits for which the logic function is defined by the customer after the IC has been manufactured and delivered to the end user. FPGAs allow users to implement their algorithms at the chip level, as opposed to writing programs that are translated into machine leve ...


Lec1 GP Computer Systems

... Instructions and data are stored in memory. The CPU fetches instructions and data to execute. Programs can operate on programs. ...

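
The stored-program idea in the excerpt above (instructions and data share one memory, and the CPU fetches both) can be illustrated with a toy fetch-decode-execute loop. This is a minimal sketch under assumed conventions: the opcodes `LOAD`, `ADD`, `STORE`, and `HALT`, the encoding of an instruction as `opcode * 100 + address`, and the class name `ToyCpu` are all hypothetical and do not model any real instruction set.

```java
// Hypothetical toy machine: one accumulator, a program counter, and a single
// int[] memory that holds both instructions and data (stored-program concept).
final class ToyCpu {
    // Hypothetical opcodes; an instruction word is opcode * 100 + address.
    static final int HALT = 0, LOAD = 1, ADD = 2, STORE = 3;

    // Runs the program starting at address 0 and returns the final memory.
    static int[] run(int[] memory) {
        int pc = 0;  // program counter: address of the next instruction
        int acc = 0; // accumulator register
        while (true) {
            int instruction = memory[pc++]; // fetch, then advance pc
            int opcode = instruction / 100; // decode
            int address = instruction % 100;
            switch (opcode) {               // execute
                case LOAD:  acc = memory[address]; break;
                case ADD:   acc += memory[address]; break;
                case STORE: memory[address] = acc; break;
                case HALT:  return memory;
                default: throw new IllegalStateException("bad opcode " + opcode);
            }
        }
    }

    public static void main(String[] args) {
        int[] memory = {
            LOAD * 100 + 5,  // 0: acc = mem[5]
            ADD * 100 + 6,   // 1: acc += mem[6]
            STORE * 100 + 7, // 2: mem[7] = acc
            HALT * 100,      // 3: stop
            0,               // 4: unused
            40, 2, 0         // 5..7: data words
        };
        System.out.println(run(memory)[7]); // prints 42
    }
}
```

Because instructions are just integers in the same `memory` array, a `STORE` aimed at an instruction address would change the program itself, which is one concrete reading of "programs can operate on programs".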

05~Chapter 5_Target_..

... • During the 1950s and the early 1960s, the instruction set of a typical computer was implemented by soldering together large numbers of discrete components that performed the required operations • To build a faster computer, one generally designed extra, more powerful instructions, which required e ...


Introduction: chap. 1 - NYU Computer Science Department

... C was originally created in 1972 by Dennis Ritchie at Bell Labs. C is a relatively low-level high-level language; i.e. deals with numbers, characters, and memory addresses ...


Chapter 5

... – data values and parts of addresses are often scaled and packed into odd pieces of the instruction – loading from a 32-bit address contained in the instruction stream takes two instructions, because one instruction isn't big enough to hold the whole address and the code for load • first instruction ...

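
The two-instruction load described above can be made concrete by splitting a 32-bit address into two 16-bit halves, one carried by each instruction. The sketch below assumes a MIPS-style pair (`lui` places an immediate in the upper 16 bits of a register, and `ori` ORs a zero-extended immediate into the lower 16 bits); other instruction sets split the address differently, and the class and method names are hypothetical.

```java
// Sketch of how a 32-bit address can be rebuilt from two 16-bit immediates,
// modelling a MIPS-style "lui" (load upper immediate) + "ori" (or immediate) pair.
final class AddressSplit {
    // Upper 16 bits: the immediate the first instruction (lui) would carry.
    static int upperHalf(int address) {
        return address >>> 16;
    }

    // Lower 16 bits: the immediate the second instruction (ori) would carry.
    static int lowerHalf(int address) {
        return address & 0xFFFF;
    }

    // Effect of the two instructions on the destination register.
    static int rebuild(int upper, int lower) {
        int reg = upper << 16; // lui: immediate into bits 31..16, low bits cleared
        reg |= lower;          // ori: zero-extended immediate into bits 15..0
        return reg;
    }

    public static void main(String[] args) {
        int address = 0x1234ABCD;
        int hi = upperHalf(address), lo = lowerHalf(address);
        System.out.printf("hi=0x%04X lo=0x%04X rebuilt=0x%08X%n", hi, lo, rebuild(hi, lo));
        // prints hi=0x1234 lo=0xABCD rebuilt=0x1234ABCD
    }
}
```

Using an OR with a zero-extended immediate keeps the arithmetic simple; if the second instruction instead added a sign-extended immediate, the upper half would have to be adjusted whenever bit 15 of the lower half is set.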

Introduction to Computers and Java

... – The appearance of the screens – The way information is presented to the user – The program’s “user friendliness” – Manuals, help systems, and/or other forms of written documentation. ...


System Bus

... Layers of Abstraction • A computer system can be examined at various levels of abstraction. At a higher level of abstraction, fewer details of the lower levels are observed. • Instead, new (functional) details may be implemented at that level of abstraction. • Each level of abstraction provides a s ...


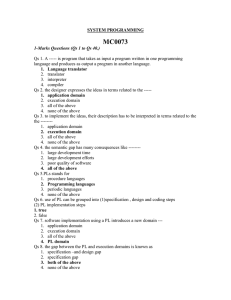

smu_MCA_SYSTEM PROGRAMMING(MC0073)

... Qs 14. TP stands for 1. Transaction program 2. Target program 3. Terminal program 4. Target processing Qs 15. Reduction in the specification gap does not increase the reliability of the generated program. 1. True 2. False Qs 16. Program translation model bridges the execution gap by translating a pr ...


History-Performance

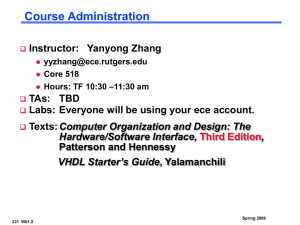

... Main: Computer Organization and Design: The Hardware/Software Interface, 5th Edition, David Patterson and John Hennessy ...


Computer Hardware: 2500 BC - Computer Science and Engineering

... • many libraries and learning resources • widely used for writing operating systems and compilers as well as industrial and scientific applications • provides low-level access to the machine • language you must know if you want to work with hardware ...


C++ Programming: Program Design Including Data

... perform are input (get data), output (display result), storage, and arithmetic and logic operations. ...


1up

... • A non-negative integer operated on by two operations named P (passeren) and V (vrijgeven) – P(s) waits until s is greater than 0, then decrements s by 1 and allows the process to continue – V(s) increments s by 1 to release any one of the waiting processes (if any) – P and V operations ...

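
The P and V operations described above can be sketched as a small Java monitor. This is an illustration only, assuming Java's built-in `synchronized`, `wait()`, and `notify()` as the underlying mechanism; the class name `PvSemaphore` is hypothetical, and production Java code would normally use `java.util.concurrent.Semaphore`, whose `acquire()` and `release()` play the roles of P and V.

```java
// Minimal counting semaphore with Dijkstra-style P and V, built on a Java monitor.
final class PvSemaphore {
    private int s; // semaphore value, never negative

    PvSemaphore(int initial) {
        if (initial < 0) throw new IllegalArgumentException("initial value must be >= 0");
        s = initial;
    }

    // P (passeren): wait until s > 0, then decrement s and continue.
    synchronized void p() throws InterruptedException {
        while (s == 0) {
            wait(); // releases the monitor while blocked; the loop re-checks after wakeups
        }
        s--;
    }

    // V (vrijgeven): increment s and wake one waiting thread, if any.
    synchronized void v() {
        s++;
        notify();
    }

    synchronized int value() {
        return s;
    }

    public static void main(String[] args) throws InterruptedException {
        PvSemaphore ready = new PvSemaphore(0); // value 0, so P blocks
        Thread waiter = new Thread(() -> {
            try {
                ready.p(); // blocks until main calls v()
                System.out.println("waiter released");
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        waiter.start();
        ready.v(); // s becomes 1, so the waiter can pass P
        waiter.join();
    }
}
```

The `while` loop around `wait()` matters: a woken thread re-checks `s` before decrementing, so the value can never go below zero even if several threads are waiting.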

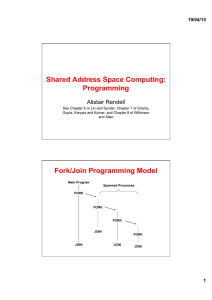

Shared Address Space Computing: Programming Fork/Join

... • A non-negative integer operated on by two operations named P (passeren) and V (vrijgeven) – P(s) waits until s is greater than 0, then decrements s by 1 and allows the process to continue – V(s) increments s by 1 to release any one of the waiting processes (if any) – P and V operati ...


The IC Wall Collaboration between Computer science + Physics

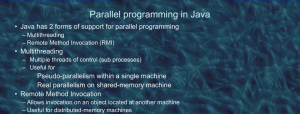

...

```java
mythread t1 = new mythread(); // allocates a thread
mythread t2 = new mythread(); // allocates another thread
t1.start(); // starts the first thread, which then invokes t1.run()
t2.start(); // starts the second thread, which then invokes t2.run()
t1.hi();
```

...

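
The excerpt above relies on a class `mythread` whose definition is not shown. A self-contained sketch, assuming `mythread` extends `Thread` and that `run()` and `hi()` simply report which thread executes them (both bodies are hypothetical), makes the key distinction visible: `start()` causes `run()` to execute on a new thread, while `t1.hi()` is an ordinary method call that executes on the calling thread.

```java
// Self-contained version of the excerpt; the bodies of run() and hi() are assumptions.
class mythread extends Thread {
    // Executed on the new thread once start() is called.
    @Override
    public void run() {
        System.out.println("run() on " + Thread.currentThread().getName());
    }

    // Ordinary method: runs on whichever thread calls it, not on this thread object.
    String hi() {
        String caller = Thread.currentThread().getName();
        System.out.println("hi() on " + caller);
        return caller;
    }

    public static void main(String[] args) throws InterruptedException {
        mythread t1 = new mythread(); // allocates a thread
        mythread t2 = new mythread(); // allocates another thread
        t1.start();                   // new thread invokes t1.run()
        t2.start();                   // new thread invokes t2.run()
        t1.hi();                      // plain call: runs on the main thread
        t1.join();                    // wait for both threads to finish
        t2.join();
    }
}
```

The order of the printed lines can vary between runs, because the scheduler decides when each started thread actually executes `run()`.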

CHAPTER 1 Introduction to Computers and Programming

... 1.1 What are hardware and software? 1.2 List five major hardware components of a computer. 1.3 What does the acronym “CPU” stand for? 1.4 What unit is used to measure CPU speed? 1.5 What is a bit? What is a byte? 1.6 What is memory for? What does RAM stand for? Why is memory called RAM? 1.7 What uni ...


w(x)

... concurrent threads in a consistent fashion even if they are executing on different compute servers. Since several threads can simultaneously execute in an object, it is necessary to coordinate access to the object data. This is handled by Clouds object programmers using Clouds system synchronization ...

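
Because several threads can execute inside one object at the same time, unsynchronized updates to its data can be lost. The sketch below coordinates access with ordinary Java `synchronized` methods as a stand-in; it does not reproduce the Clouds synchronization primitives, which the excerpt does not show, and the class name `SharedCounter` is hypothetical.

```java
// Several threads execute inside one object; synchronized methods serialize
// access to the object's data so that no increment is lost.
final class SharedCounter {
    private long count; // object data shared by all threads

    // Read-modify-write made atomic by holding the object's monitor.
    synchronized void increment() {
        count++;
    }

    synchronized long get() {
        return count;
    }

    // Starts `threads` threads that each call increment() `perThread` times.
    static long runConcurrently(int threads, int perThread) throws InterruptedException {
        SharedCounter shared = new SharedCounter();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    shared.increment();
                }
            });
            workers[t].start();
        }
        for (Thread w : workers) {
            w.join(); // wait for every worker before reading the result
        }
        return shared.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(runConcurrently(4, 100_000)); // prints 400000
    }
}
```

Removing `synchronized` from `increment()` can make the result nondeterministic and smaller than expected, because `count++` is a separate read, add, and write that two threads may interleave.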

Matlab Computing @ CBI Lab Parallel Computing Toolbox

... In local mode, the client Matlab® session maps to an operating system process, containing multiple threads. Each lab requires the creation of a new operating system process, each with multiple threads. Since a thread is the scheduled OS entity, all threads from all Matlab® processes will be competin ...


Document

... Within both the ALU and the control unit are small numbers of memory locations known as registers which are used to hold single pieces of data or single instructions immediately before and after processing. The ALU and the control unit along with their registers are together referred to as the centr ...


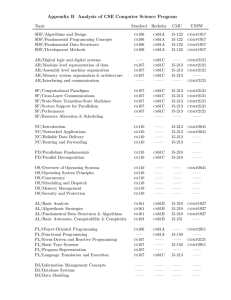

Appendix B Analysis of CSE Computer Science Program

... data structures. The course emphasizes parallel algorithms and analysis, and how sequential algorithms can be considered a special case. The course goes into more theoretical content on algorithm analysis than 15-122 and 15-150 while still including a significant programming component and covering a ...


name: aderibigbe zainab yetunde course code: gst 115

... number-crunching crisis. By 1880, the U.S. population had grown so large that it took more than seven years to tabulate the U.S. Census results. The government sought a faster way to get the job done, giving rise to punch-card based computers that took up entire rooms. Today, we carry more computing ...


The 5 generations of computers

... and more reliable than their first-generation predecessors. Though the transistor still generated a great deal of heat that subjected the computer to damage, it was a vast improvement over the vacuum tube. Second-generation computers still relied on punched cards for input and printouts for output. ...


BUILDING GRANULARITY IN HIGHLY ABSTRACT PARALLEL

... an abstract machine providing certain operations to the programming level above and requiring implementations for each of these operations on all of the architectures below. It is designed to separate software-development concerns from effective parallel-execution concerns and provides both abstract ...

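
The layering described above (operations offered to the programming level above, with an implementation of each operation for every architecture below) can be sketched as a Java interface with interchangeable implementations. The names `AbstractMachine`, `SequentialMachine`, `ParallelMachine`, and `AbstractMachineDemo`, and the choice of a single `max` operation, are hypothetical; they only illustrate the separation of concerns and are not the API of the system the excerpt describes.

```java
import java.util.Arrays;

// Operation the abstract machine offers to the programming level above.
interface AbstractMachine {
    long max(long[] data); // requires a non-empty array
}

// Implementation for a single-core target: a plain sequential loop.
final class SequentialMachine implements AbstractMachine {
    public long max(long[] data) {
        if (data.length == 0) throw new IllegalArgumentException("empty input");
        long best = data[0];
        for (long x : data) best = Math.max(best, x);
        return best;
    }
}

// Implementation for a multi-core target: a parallel stream on the common fork/join pool.
final class ParallelMachine implements AbstractMachine {
    public long max(long[] data) {
        return Arrays.stream(data).parallel().max()
                .orElseThrow(() -> new IllegalArgumentException("empty input"));
    }
}

final class AbstractMachineDemo {
    // Application code: depends only on the abstract operation, not on the target.
    static long largest(AbstractMachine machine, long[] data) {
        return machine.max(data);
    }

    public static void main(String[] args) {
        long[] data = {7, -3, 42, 5};
        System.out.println(largest(new SequentialMachine(), data)); // prints 42
        System.out.println(largest(new ParallelMachine(), data));   // prints 42
    }
}
```

Swapping the implementation changes how the work is executed without touching `largest`, which is the kind of separation between software development and parallel execution that the excerpt describes.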

SIT102 Introduction to Programming

... Software is the programs and data that a computer uses. Programs are lists of instructions for the processor. Data can be any information that a program needs: character data, numerical data, image data, audio data, etc. Both programs and data are saved in computer memory in the same way. C ...


Parallel computing

Parallel computing is a type of computation in which many calculations are carried out simultaneously, operating on the principle that large problems can often be divided into smaller ones, which are then solved at the same time. There are several different forms of parallel computing: bit-level, instruction-level, data, and task parallelism. Parallelism has been employed for many years, mainly in high-performance computing, but interest in it has grown because physical constraints now limit frequency scaling, that is, further increases in processor clock speed. As power consumption (and consequently heat generation) by computers has become a concern in recent years, parallel computing has become the dominant paradigm in computer architecture, mainly in the form of multi-core processors.

Parallel computing is closely related to concurrent computing. The two are frequently used together, and often conflated, but they are distinct: it is possible to have parallelism without concurrency (such as bit-level parallelism), and concurrency without parallelism (such as multitasking by time-sharing on a single-core CPU).

In parallel computing, a computational task is typically broken down into several, often many, very similar subtasks that can be processed independently and whose results are combined afterwards, upon completion. In contrast, in concurrent computing, the various processes often do not address related tasks; when they do, as is typical in distributed computing, the separate tasks may have a varied nature and often require some inter-process communication during execution.

Parallel computers can be roughly classified according to the level at which the hardware supports parallelism: multi-core and multi-processor computers have multiple processing elements within a single machine, while clusters, massively parallel processors (MPPs), and grids use multiple computers to work on the same task.
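
The decompose, solve independently, then combine pattern described above can be sketched with plain Java threads summing disjoint slices of an array. The class name `ChunkedSum` and the even chunking scheme are illustrative assumptions; idiomatic Java would more often use a parallel stream or a `ForkJoinPool` for the same job.

```java
// Data parallelism: split an array into chunks, sum each chunk on its own
// thread, then combine the partial results after all threads finish.
final class ChunkedSum {
    static long sum(long[] data, int parts) throws InterruptedException {
        if (parts < 1) throw new IllegalArgumentException("parts must be >= 1");
        long[] partial = new long[parts];              // one slot per subtask: no shared writes
        Thread[] workers = new Thread[parts];
        int chunk = (data.length + parts - 1) / parts; // ceiling division
        for (int p = 0; p < parts; p++) {
            final int id = p;
            final int from = Math.min(data.length, p * chunk);
            final int to = Math.min(data.length, from + chunk);
            workers[p] = new Thread(() -> {
                long s = 0;
                for (int i = from; i < to; i++) s += data[i];
                partial[id] = s;                       // each thread writes only its own slot
            });
            workers[p].start();
        }
        long total = 0;
        for (int p = 0; p < parts; p++) {
            workers[p].join();                         // join makes partial[p] visible here
            total += partial[p];                       // combine step
        }
        return total;
    }

    public static void main(String[] args) throws InterruptedException {
        long[] data = new long[1_000];
        for (int i = 0; i < data.length; i++) data[i] = i + 1;
        System.out.println(sum(data, 4)); // prints 500500
    }
}
```

Giving each subtask its own output slot avoids any shared mutable state during the parallel phase, so the only synchronization needed is the final `join()`.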

Specialized parallel computer architectures are sometimes used alongside traditional processors to accelerate specific tasks.

In some cases parallelism is transparent to the programmer, as in bit-level or instruction-level parallelism, but explicitly parallel algorithms, particularly those that use concurrency, are more difficult to write than sequential ones, because concurrency introduces several new classes of potential software bugs, of which race conditions are the most common. Communication and synchronization between the different subtasks are typically some of the greatest obstacles to good parallel program performance.

A theoretical upper bound on the speed-up of a single program as a result of parallelization is given by Amdahl's law.
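
Amdahl's law is usually written as S(n) = 1 / ((1 - p) + p / n), where p is the fraction of the program's running time that can be parallelized and n is the number of processors. As n grows, S(n) approaches 1 / (1 - p), so the serial fraction caps the achievable speed-up. The helper below, with illustrative names, just evaluates that formula and assumes the parallel part scales perfectly.

```java
// Amdahl's law: upper bound on speed-up when a fraction p of the work
// is parallelized perfectly across n processors and the rest stays serial.
final class Amdahl {
    static double speedup(double p, int n) {
        if (p < 0.0 || p > 1.0) throw new IllegalArgumentException("p must be in [0, 1]");
        if (n < 1) throw new IllegalArgumentException("n must be >= 1");
        return 1.0 / ((1.0 - p) + p / n);
    }

    // Limit as n grows without bound: only the serial fraction (1 - p) matters.
    static double maxSpeedup(double p) {
        if (p < 0.0 || p > 1.0) throw new IllegalArgumentException("p must be in [0, 1]");
        return p == 1.0 ? Double.POSITIVE_INFINITY : 1.0 / (1.0 - p);
    }

    public static void main(String[] args) {
        // Example: 95% of the running time is parallelizable.
        System.out.printf("8 processors: %.2fx%n", speedup(0.95, 8)); // prints 8 processors: 5.93x
        System.out.printf("limit: %.2fx%n", maxSpeedup(0.95));       // prints limit: 20.00x
    }
}
```

Even with 95% of the work parallelized, eight processors give well under an eightfold speed-up, and no number of processors can exceed twentyfold.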