Research Article Missing Value Estimation for

... in the target gene by the row’s average value first. But in our experiment, we found that row-average cannot reflect the real structure of the dataset. Because row-average only uses the information of an individual row, the missing values in a target gene do not only rely on the known values in its ...
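As a minimal sketch of the row-average baseline that the snippet criticizes, the function below fills each missing entry with the mean of the observed values in the same row. The name `impute_row_average`, the use of `None` to mark a missing value, and the toy matrix are all assumptions made for illustration; none of them come from the article.

```python
from statistics import mean

def impute_row_average(matrix):
    """Replace each None in a row with the mean of that row's observed values."""
    filled = []
    for row in matrix:
        observed = [v for v in row if v is not None]
        # A row with no observed values cannot be averaged; it is left as-is.
        row_mean = mean(observed) if observed else None
        filled.append([row_mean if v is None else v for v in row])
    return filled

# Hypothetical expression matrix: rows are genes, columns are experiments.
expression = [
    [1.0, None, 3.0],
    [4.0, 5.0, None],
]
filled = impute_row_average(expression)
print(filled)
```

Each row is filled without looking at any other row, which is the limitation the snippet points to: information from similar genes is never used.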

Regularization and Variable Selection via the Elastic Net

... Median numbers of non-zero coefficients for LASSO and ENet are 11 and 16 respectively. The Elastic Net tends to select more variables than LASSO, owing to the grouping effect. Prediction performance of Elastic Net outperforms other methods, since including correlated predictors makes the model more ...

Working Paper Number 168 April 2009

... variables is to assume that the data-generating process is classically linear and unbounded at its heart, with a normally distributed error term. Link functions of chosen form translate these latent variables into the observed ones. Examples include the probit, ordered probit, rank-ordered probit, m ...

A Parametricness Index for Model Selection

... view, this is not a problem. For instance, for a truly parametric scenario with many small coefficients of various magnitudes, for a small or moderate sample size, the selected model will most likely be different from the true model and it is also among multiple models that perform similarly in esti ...

Continuous - rxsped596

... One way to control for an extraneous variable is to match subjects from the comparison groups on that variable. Creating or finding homogeneous subgroups would be another way to control for an extraneous variable ...

Logistic Regression - Michael Kalsher Home

... Logistic Regression • In linear regression, coefficients (parameters) are estimated using the least squares method. • In logistic regression, maximum-likelihood estimation is used. – Selects coefficients that make the observed values most likely to have occurred. – The chosen estimates of the bs wil ...
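The maximum-likelihood idea in the snippet can be sketched for a single predictor as follows. This is an illustrative sketch only: plain gradient ascent stands in for whatever optimizer a statistics package would actually use, and the function names, learning rate, step count, and toy data are assumptions, not taken from the slides.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def log_likelihood(b0, b1, xs, ys):
    """Log-likelihood of binary outcomes ys under P(y=1 | x) = sigmoid(b0 + b1*x)."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(b0 + b1 * x)
        total += y * math.log(p) + (1 - y) * math.log(1 - p)
    return total

def fit_logistic(xs, ys, lr=0.1, steps=5000):
    """Climb the log-likelihood by gradient ascent, starting from b0 = b1 = 0."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        residuals = [y - sigmoid(b0 + b1 * x) for x, y in zip(xs, ys)]
        # Gradient of the mean log-likelihood with respect to b0 and b1.
        b0 += lr * sum(residuals) / n
        b1 += lr * sum(r * x for r, x in zip(residuals, xs)) / n
    return b0, b1

# Hypothetical data: the outcome becomes more likely as x grows, with some overlap.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 1, 0, 1, 1]
b0, b1 = fit_logistic(xs, ys)
print(b0, b1, log_likelihood(b0, b1, xs, ys))
```

The fitted coefficients are the ones under which the observed outcomes are more probable than under the starting values, which is the sense in which the snippet says they "make the observed values most likely to have occurred".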

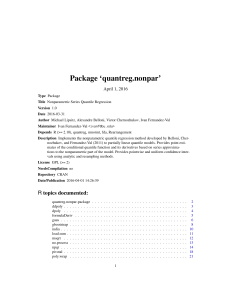

Package `quantreg.nonpar`

... either a basis generated using the fda package of type "bspline" or "fourier", a factor variable, or an orthogonal polynomial basis generated using the poly command. This basis is the series regressor to be added to formula. ...

2014 Technical Notes

... You can also do this when g() is piecewise monotonic. That is, you should be able to partition χ, the domain of the original RV, into contiguous sets so that the function is monotonic on each set. For example, if g(X) = X², we should split the real line into (−∞, 0] and (0, ∞). ...
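For the squaring example, the piecewise change of variables can be checked numerically. The sketch below assumes X is standard normal (an assumption added here; the notes do not specify a distribution). Each monotonic piece contributes one inverse branch, ±√y, with Jacobian 1/(2√y), and the result should match the chi-square density with one degree of freedom. All function names are illustrative.

```python
import math

def normal_pdf(x):
    """Density of a standard normal random variable."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def density_of_square(y, f_x=normal_pdf):
    """Density of Y = X^2, summing the contributions of both monotonic pieces."""
    if y <= 0:
        return 0.0
    root = math.sqrt(y)
    jacobian = 1.0 / (2.0 * root)  # |d/dy sqrt(y)|, identical for both branches
    # Branch on (0, inf) has inverse +sqrt(y); branch on (-inf, 0] has inverse -sqrt(y).
    return (f_x(root) + f_x(-root)) * jacobian

def chi_square_1_pdf(y):
    """Known density of a chi-square variable with one degree of freedom."""
    return math.exp(-0.5 * y) / math.sqrt(2.0 * math.pi * y)

difference = abs(density_of_square(1.3) - chi_square_1_pdf(1.3))
print(difference)
```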

Elliott

... “The idea of imputation is both seductive and dangerous. It is seductive because it can lull the user into the pleasurable state of believing that the data are complete after all, and it is dangerous because it lumps together situations where the problem is sufficiently minor that it can be legitima ...

Coefficient of determination

In statistics, the coefficient of determination, denoted R² or r² and pronounced "R squared", is a number that indicates how well data fit a statistical model, sometimes simply a line or a curve. An R² of 1 indicates that the regression line perfectly fits the data, while an R² of 0 indicates that the line does not fit the data at all. The latter can occur because the data are utterly non-linear, or because they are random.

It is a statistic used in the context of statistical models whose main purpose is either the prediction of future outcomes or the testing of hypotheses, on the basis of other related information. It provides a measure of how well observed outcomes are replicated by the model, as the proportion of total variation of outcomes explained by the model (pp. 187, 287).

There are several definitions of R² that are only sometimes equivalent. One class of such cases includes that of simple linear regression, where r² is used instead of R². In this case, if an intercept is included, then r² is simply the square of the sample correlation coefficient (i.e., r) between the outcomes and their predicted values. If additional explanators are included, R² is the square of the coefficient of multiple correlation. In both such cases, the coefficient of determination ranges from 0 to 1.

Depending on the definition used, the computation of R² can yield negative values in two important cases: when the predictions being compared to the corresponding outcomes have not been derived from a model-fitting procedure using those data, and when linear regression is conducted without including an intercept. Negative values of R² may also occur when fitting non-linear functions to data. In cases where negative values arise, the mean of the data provides a better fit to the outcomes than do the fitted function values, according to this particular criterion.
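A small numerical sketch of one common definition, R² = 1 − SS_res / SS_tot, shows the three situations described above: a perfect fit, predictions no better than the mean, and predictions that did not come from fitting the data. The text notes that several definitions exist; this is only one of them, and the function name and toy numbers are assumptions for illustration.

```python
def r_squared(observed, predicted):
    """R^2 computed as 1 - (residual sum of squares) / (total sum of squares)."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

observed = [1.0, 2.0, 3.0, 4.0]

perfect = r_squared(observed, observed)               # predictions equal the outcomes
baseline = r_squared(observed, [2.5] * 4)             # always predict the sample mean
poor = r_squared(observed, [4.0, 3.0, 2.0, 1.0])      # predictions not fitted to the data

print(perfect, baseline, poor)
```

Under this definition the reversed predictions score below zero, meaning the sample mean would have been a better predictor than they are.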