Identification of demand functions

... with the explanatory variables. In panel (b) on the previous slide, high values of the residual ε (or e; same thing) are associated with high values of the price variable P and vice versa. Hence P and ε are positively correlated, which leads to upward bias in the OLS estimate of the price elasticity ...
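
A small simulation makes the direction of the bias concrete. The sketch below is a hypothetical setup, not the data behind the slide. Log quantity depends on log price with a true elasticity of -1, and the demand shock ε also pushes the price up, so P and ε are positively correlated. Under these assumptions the OLS slope converges to -1 + cov(P, ε)/var(P) = -1 + 0.5/1.25 = -0.6, which is biased upward (toward zero). The variable `cost_shifter` and all coefficients are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000
true_elasticity = -1.0  # hypothetical true price elasticity

# Demand shock eps raises quantity; price also rises with eps,
# so log price and eps are positively correlated (as in panel (b)).
eps = rng.normal(0.0, 1.0, n)
cost_shifter = rng.normal(0.0, 1.0, n)  # hypothetical supply-side variation
log_p = 0.5 * eps + cost_shifter
log_q = 2.0 + true_elasticity * log_p + eps

# OLS slope of log Q on log P: cov(logP, logQ) / var(logP)
ols_slope = np.cov(log_p, log_q)[0, 1] / np.var(log_p, ddof=1)
print(f"true elasticity {true_elasticity:.2f}, OLS estimate {ols_slope:.2f}")
```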

Ch.7: Estimation 1 Introduction

... how to report the uncertainty of estimators through their standard error and how that leads to confidence intervals for estimators which have (or have approximately) a normal distribution. The above estimation concepts will be developed here in the context of a single sample, but will be applied in ...
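
For a single sample, the standard error of the sample mean is s/√n, and when the estimator is (approximately) normal a 95% confidence interval is the estimate ± 1.96 standard errors. A minimal sketch using only the Python standard library; the data values are made up, and for a sample this small a t critical value (about 2.36 for 7 degrees of freedom) would usually replace 1.96.

```python
import math
import statistics

def mean_ci(sample, z=1.96):
    """Approximate 95% CI for the mean, assuming the sample mean is ~normal."""
    n = len(sample)
    xbar = statistics.mean(sample)
    se = statistics.stdev(sample) / math.sqrt(n)  # standard error of the mean
    return xbar, se, (xbar - z * se, xbar + z * se)

xbar, se, (lo, hi) = mean_ci([4.1, 5.3, 4.8, 5.9, 5.0, 4.4, 5.6, 4.9])
print(f"mean={xbar:.3f} se={se:.3f} CI=({lo:.3f}, {hi:.3f})")
```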

The White test for heteroskedasticity

... • Using these formulas for standard errors, the usual t test is asymptotically valid under heteroskedasticity • The usual F statistic does not work under heteroskedasticity, but heteroskedasticity-robust versions are available in Stata • To obtain heteroskedasticity-robust standard errors and F ...
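
Robust standard errors of this kind are usually computed with White's "sandwich" formula. The sketch below computes both the conventional and the HC0 robust standard errors with NumPy on simulated heteroskedastic data; the data-generating process is an assumption for illustration. Stata's `vce(robust)` option applies an extra small-sample factor n/(n - k) (the HC1 variant), so its numbers differ slightly from HC0.

```python
import numpy as np

def ols_with_se(X, y):
    """OLS coefficients with conventional and White (HC0) robust standard errors."""
    n, k = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    resid = y - X @ beta
    # Conventional: assumes a constant error variance sigma^2
    sigma2 = resid @ resid / (n - k)
    se_usual = np.sqrt(np.diag(sigma2 * XtX_inv))
    # HC0 sandwich: (X'X)^-1 X' diag(e_i^2) X (X'X)^-1
    meat = X.T @ (X * resid[:, None] ** 2)
    se_robust = np.sqrt(np.diag(XtX_inv @ meat @ XtX_inv))
    return beta, se_usual, se_robust

rng = np.random.default_rng(1)
n = 2000
x = rng.uniform(0, 10, n)
y = 1.0 + 0.5 * x + rng.normal(0, 0.2 + 0.3 * x)  # error spread grows with x
X = np.column_stack([np.ones(n), x])
beta, se_usual, se_robust = ols_with_se(X, y)
t_robust = beta / se_robust  # robust t statistics
print(beta, se_usual, se_robust)
```

The robust t statistics in `t_robust` are the ones that remain asymptotically valid when the error variance depends on x.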

Logistic Regression

... Some Basic Background An Underlying Normal Variable Binary Logistic Regression Binomial Logistic Regression Interpreting Logistic Regression Parameters ...

Data Mining Essentials

... higher resistance to distortion and noise. 2. Discretization. Consider a continuous feature such as money spent in our previous example. This feature can be converted into discrete values – High, Normal, and Low – by mapping different ranges to different discrete values. The process of converting co ...
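
A minimal sketch of the discretization step, assuming two hypothetical cut-offs (50 and 200) for money spent. In practice the ranges would be chosen from the data, for example by equal-width or equal-frequency binning.

```python
def discretize(amount, low_cut=50.0, high_cut=200.0):
    """Map a continuous spend amount to Low/Normal/High (cut-offs are hypothetical)."""
    if amount < low_cut:
        return "Low"
    if amount < high_cut:
        return "Normal"
    return "High"

spent = [12.0, 75.5, 199.99, 200.0, 480.0]
labels = [discretize(a) for a in spent]
print(labels)  # ['Low', 'Normal', 'Normal', 'High', 'High']
```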

PPT

... df.offset = 0, penalty = 1) • Arguments: • Required: x, values of the predictor variable. There should be at least ten distinct x values. • Optional: • y: response variable, of the same length as x. • df: a number which supplies the degrees of freedom = trace(S), rather than a smoothing parameter. ...
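
The idea of specifying df = trace(S) instead of a smoothing parameter applies to any linear smoother, i.e. one whose fitted values are S y. The sketch below does not reproduce smooth.spline's algorithm. It uses a simpler penalized linear-spline basis (hinge functions at hypothetical knots, with a ridge penalty λ on the hinge coefficients) and shows that trace(S) falls from the number of basis columns at λ = 0 toward 2 (a straight line) as λ grows. A routine that accepts df can then search for the λ whose trace matches the requested value.

```python
import numpy as np

def smoother_matrix(x, knots, lam):
    """Smoother (hat) matrix S of a penalized linear-spline fit: y_hat = S @ y.

    Illustrative only; this is not the algorithm used by R's smooth.spline.
    """
    # Basis: intercept, x, and a hinge function (x - k)_+ at each knot
    B = np.column_stack([np.ones_like(x), x] + [np.maximum(x - k, 0.0) for k in knots])
    # Ridge penalty on the hinge coefficients only; the straight line is unpenalized
    omega = np.diag([0.0, 0.0] + [1.0] * len(knots))
    return B @ np.linalg.solve(B.T @ B + lam * omega, B.T)

x = np.linspace(0.0, 1.0, 50)
knots = np.linspace(0.1, 0.9, 9)  # hypothetical knot positions
lams = [0.0, 0.01, 1.0, 100.0]
dfs = [np.trace(smoother_matrix(x, knots, lam)) for lam in lams]
for lam, df in zip(lams, dfs):
    print(f"lambda = {lam:g}: effective df = trace(S) = {df:.2f}")
```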

(2005) Applied Linear Regression. (3

... The continuing theme of the second edition was the need for diagnostic methods, in which fitted models are analyzed for deficiencies, through analysis of residuals and influence. This emphasis was unusual when the second edition was published and important quantities like Studentized residuals and C ...
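
For reference, the sketch below computes two of the diagnostic quantities involved: leverages h_ii (the diagonal of the hat matrix) and internally studentized residuals r_i = e_i / (σ̂ √(1 − h_ii)) for an OLS fit. The simulated data, with one point placed far from the others in x, are purely illustrative.

```python
import numpy as np

def leverage_and_studentized(X, y):
    """Leverages h_ii and internally studentized residuals for an OLS fit."""
    n, p = X.shape
    H = X @ np.linalg.solve(X.T @ X, X.T)     # hat matrix
    h = np.diag(H)
    e = y - H @ y                              # ordinary residuals
    sigma_hat = np.sqrt(e @ e / (n - p))
    r = e / (sigma_hat * np.sqrt(1.0 - h))     # studentized residuals
    return h, r

rng = np.random.default_rng(2)
x = np.append(rng.uniform(0, 1, 30), 5.0)      # last point is far from the rest in x
y = 2.0 + 3.0 * x + rng.normal(0, 0.1, x.size)
X = np.column_stack([np.ones_like(x), x])
h, r = leverage_and_studentized(X, y)
print(f"max leverage at index {h.argmax()}: {h.max():.2f}")
```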

IBM SPSS Missing Values 22

... • Fills in (imputes) missing values with estimated values using regression or EM methods; however, multiple imputation is generally considered to provide more accurate results. Missing value analysis helps address several concerns caused by incomplete data. If cases with missing values are systemati ...
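
A minimal sketch of single regression imputation, assuming one fully observed predictor x and a partly missing variable y (both made up). It is not how SPSS implements the procedure. It also hints at why multiple imputation is often preferred: every imputed value lies exactly on the fitted line, so the filled-in data understate the true variability.

```python
import numpy as np

def regression_impute(x, y):
    """Fill NaNs in y with predictions from an OLS fit of y on x over complete cases."""
    y = np.array(y, dtype=float)  # work on a copy
    missing = np.isnan(y)
    X_obs = np.column_stack([np.ones(np.sum(~missing)), x[~missing]])
    coef, *_ = np.linalg.lstsq(X_obs, y[~missing], rcond=None)
    y[missing] = coef[0] + coef[1] * x[missing]
    return y, missing

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([2.1, 3.9, np.nan, 8.1, np.nan, 12.0])
y_filled, was_missing = regression_impute(x, y)
print(y_filled)
```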

Application of a New Statistical Method to Derive

... RRR usually are sorted by decreasing eigenvalues. The first factor of PCA is the linear function of predictors that maximizes the explained variation in predictors. However, in general, it is not optimal in terms of response variation. In contrast, the first factor of RRR explains more variation in ...
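
The contrast between the first PCA factor and the first RRR factor can be checked numerically. The sketch below is a simplified, unweighted version of RRR written for illustration, using simulated and centered predictors X and responses Y. The PCA factor uses the leading eigenvector of X'X. The RRR factor starts from the OLS fitted values Ŷ of Y on X and takes the leading eigenvector of Ŷ'Ŷ. Among all linear combinations of the predictors, this RRR score maximizes the total response variation explained, so on that criterion it can never do worse than the PCA score.

```python
import numpy as np

def explained_response_ss(t, Y):
    """Total sum of squares of centered Y explained by regressing each column on score t."""
    return float(np.sum((t @ Y) ** 2) / (t @ t))

rng = np.random.default_rng(3)
n = 500
X = rng.normal(size=(n, 4)) * np.array([3.0, 2.0, 1.0, 0.5])  # predictors with unequal variance
Y = np.column_stack([X[:, 3] + rng.normal(0, 0.5, n),          # responses driven by
                     -X[:, 3] + 0.5 * X[:, 2] + rng.normal(0, 0.5, n)])  # low-variance predictors
X = X - X.mean(axis=0)
Y = Y - Y.mean(axis=0)

# First PCA factor: leading eigenvector of X'X (predictor variation only)
w_pca = np.linalg.eigh(X.T @ X)[1][:, -1]
t_pca = X @ w_pca

# First RRR factor: fitted values of Y on X, then their leading direction
Y_hat = X @ np.linalg.lstsq(X, Y, rcond=None)[0]
v1 = np.linalg.eigh(Y_hat.T @ Y_hat)[1][:, -1]
t_rrr = Y_hat @ v1  # still a linear combination of the predictors

ss_pca = explained_response_ss(t_pca, Y)
ss_rrr = explained_response_ss(t_rrr, Y)
print(f"response SS explained: PCA {ss_pca:.1f}, RRR {ss_rrr:.1f}")
```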

Threshold detection: matching statistical methodology to ecological

... for binary data, where they are sometimes called occurrence thresholds (Guénette and Villard 2004, 2005). In this context, a logistic regression model is typically fit to the data. The data are then split into two groups (points where the species is likely to be present, versus points where it is li ...
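
A minimal sketch of this approach, under assumptions not stated in the source: a single continuous predictor (a hypothetical habitat-cover variable), simulated presence/absence data, and a cut-off at a fitted probability of 0.5. With the model logit P = b0 + b1 x, that cut-off corresponds to the threshold x = -b0/b1. The fit uses a plain Newton-Raphson loop rather than any particular package.

```python
import numpy as np

def fit_logistic(x, y, iters=25):
    """Fit P(y=1) = 1 / (1 + exp(-(b0 + b1*x))) by Newton-Raphson."""
    X = np.column_stack([np.ones_like(x), x])
    beta = np.zeros(2)
    for _ in range(iters):
        p = 1.0 / (1.0 + np.exp(-X @ beta))
        W = p * (1.0 - p)                      # Bernoulli variances
        grad = X.T @ (y - p)                   # score vector
        hess = X.T @ (X * W[:, None])          # observed information
        beta = beta + np.linalg.solve(hess, grad)
    return beta

rng = np.random.default_rng(4)
habitat = rng.uniform(0, 100, 400)             # hypothetical % habitat cover
p_true = 1.0 / (1.0 + np.exp(-(-5.0 + 0.1 * habitat)))
present = (rng.uniform(size=habitat.size) < p_true).astype(float)

b0, b1 = fit_logistic(habitat, present)
threshold = -b0 / b1  # habitat value where fitted P(presence) = 0.5
print(f"estimated occurrence threshold: {threshold:.1f} (simulated truth 50.0)")
```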

David A. Kenny APS Boston May 29, 2010

... DataToText Examples Example 1: Moderation Example In moderation, the causal effect of one variable is assumed to vary as a function of another variable, the moderator. As an example, consider a study by Livi, Carrus, and Gentile, M. (2010) that investigated the causal effect that noise sensitivit ...
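
In regression terms, moderation is usually modeled with a product term: y = b0 + b1 X + b2 M + b3 XM + e. The effect of X on y is then b1 + b3 M, which changes with the moderator. The sketch below fits this model by OLS to simulated data. The variables and coefficients are hypothetical and are not those of the study cited above.

```python
import numpy as np

rng = np.random.default_rng(5)
n = 1000
x = rng.normal(size=n)  # hypothetical causal variable
m = rng.normal(size=n)  # hypothetical moderator
y = 0.5 + 0.4 * x + 0.2 * m + 0.3 * x * m + rng.normal(0, 1.0, n)

# Moderation model: y = b0 + b1*x + b2*m + b3*(x*m) + e
X = np.column_stack([np.ones(n), x, m, x * m])
b0, b1, b2, b3 = np.linalg.lstsq(X, y, rcond=None)[0]

# Simple slope of x at a given moderator value: b1 + b3*m
for m_val in (-1.0, 0.0, 1.0):
    print(f"effect of x when m = {m_val:+.0f}: {b1 + b3 * m_val:.2f}")
```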

“What-If” Deployment and Configuration Questions with WISE

... error between 8% and 11% for cross-validation with existing deployments and only 9% maximum cumulative distribution difference compared to ground-truth response time distribution for what-if scenarios on a real deployment as well as controlled experiments on Emulab. Finally, WISE must be fast, so tha ...

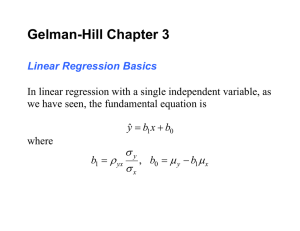

Linear regression

In statistics, linear regression is an approach for modeling the relationship between a scalar dependent variable y and one or more explanatory variables (or independent variables) denoted X. The case of one explanatory variable is called simple linear regression. For more than one explanatory variable, the process is called multiple linear regression. (This term should be distinguished from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable.)

In linear regression, data are modeled using linear predictor functions, and unknown model parameters are estimated from the data. Such models are called linear models. Most commonly, linear regression refers to a model in which the conditional mean of y given the value of X is an affine function of X. Less commonly, linear regression could refer to a model in which the median, or some other quantile of the conditional distribution of y given X, is expressed as a linear function of X. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of y given X, rather than on the joint probability distribution of y and X, which is the domain of multivariate analysis.

Linear regression was the first type of regression analysis to be studied rigorously and to be used extensively in practical applications. This is because models which depend linearly on their unknown parameters are easier to fit than models which are non-linearly related to their parameters, and because the statistical properties of the resulting estimators are easier to determine.

Linear regression has many practical uses. Most applications fall into one of the following two broad categories:

• If the goal is prediction, forecasting, or error reduction, linear regression can be used to fit a predictive model to an observed data set of y and X values. After developing such a model, if an additional value of X is then given without its accompanying value of y, the fitted model can be used to make a prediction of the value of y.
• Given a variable y and a number of variables X1, ..., Xp that may be related to y, linear regression analysis can be applied to quantify the strength of the relationship between y and the Xj, to assess which Xj may have no relationship with y at all, and to identify which subsets of the Xj contain redundant information about y.

Linear regression models are often fitted using the least squares approach, but they may also be fitted in other ways, such as by minimizing the "lack of fit" in some other norm (as with least absolute deviations regression), or by minimizing a penalized version of the least squares loss function as in ridge regression (L2-norm penalty) and lasso (L1-norm penalty). Conversely, the least squares approach can be used to fit models that are not linear models. Thus, although the terms "least squares" and "linear model" are closely linked, they are not synonymous.
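
The sketch below contrasts ordinary least squares with ridge regression on simulated data (the coefficients are arbitrary). Both use closed-form linear algebra. The lasso's L1 penalty has no closed-form solution and is usually fitted iteratively, for example by coordinate descent, so it is not shown. The intercept is omitted because the simulated predictors and response have mean zero; with real data a column of ones would normally be added and left unpenalized.

```python
import numpy as np

def fit_ols(X, y):
    """Least squares: minimize ||y - X b||^2."""
    return np.linalg.lstsq(X, y, rcond=None)[0]

def fit_ridge(X, y, lam):
    """Ridge: minimize ||y - X b||^2 + lam * ||b||^2 (penalizes every column)."""
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y)

rng = np.random.default_rng(6)
n = 200
X = rng.normal(size=(n, 3))
y = X @ np.array([1.5, 0.0, -2.0]) + rng.normal(0, 0.5, n)

b_ols = fit_ols(X, y)
b_ridge = fit_ridge(X, y, lam=50.0)   # L2 penalty shrinks coefficients toward zero
x_new = np.array([0.2, -1.0, 0.7])    # a new X value without its y
y_pred = x_new @ b_ols                # prediction from the fitted model
print(b_ols, b_ridge, y_pred)
```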