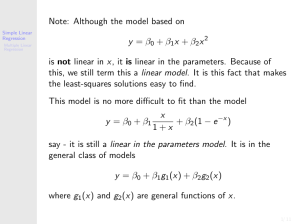

Note: Although the model based on y = β0 + β1x + β2x² is not linear

... Define new data xij(t), where xij(t) = 1 if t = i and xij(t) = 0 if t ≠ i ...

x - My Teacher Pages

... I visited cnnsi’s website and checked out some of Kobe Bryant’s personal scoring numbers. I looked at the number of times he shot the ball and his point total for each game so far this year. Let’s come up with the regression equation for this data. ...


BMTRY 701 Biostatistical Methods II

... plot(father, son, xlab="Father's Height, Inches", ylab="Son's Height, Inches", ...


PDF

... the empirical analysis, researchers should decide whether they are going to either generate or test hypotheses. Stepwise regression routines are quite useful for generating hypotheses to be tested subsequently with new data. It is only attempting to present hypo ...

Chapter 6

... then X1 and X2 are said to be collinear or linearly dependent. On the other hand, if the last equation holds true only when λ2 = λ3 = 0, then X1 and X2 are said to be linearly independent. X1i = −4X2i or X1i + 4X2i = 0. If the two variables are linearly dependent, and if both are included in a regressi ...
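
The linear dependence in the snippet above (X1i = −4X2i) can be checked numerically. The following is a minimal sketch, not taken from the source document: it assumes a two-regressor model without an intercept, and the function name `gram_determinant` and the sample values are hypothetical. When the determinant of the cross-product matrix is zero, the least-squares normal equations have no unique solution.

```python
def gram_determinant(x1, x2):
    """Determinant of the 2x2 cross-product (Gram) matrix of two regressors.

    A value of zero means the regressors are linearly dependent, so the
    normal equations cannot be solved for unique coefficients.
    """
    s11 = sum(a * a for a in x1)
    s22 = sum(b * b for b in x2)
    s12 = sum(a * b for a, b in zip(x1, x2))
    return s11 * s22 - s12 * s12


# Illustrative data (assumed, not from the source).
x2 = [1.0, 2.0, 3.0]
x1_dependent = [-4.0 * v for v in x2]   # X1i = -4 * X2i, as in the snippet
x1_independent = [1.0, 0.0, 1.0]        # not a multiple of x2

det_dependent = gram_determinant(x1_dependent, x2)
det_independent = gram_determinant(x1_independent, x2)
print(det_dependent, det_independent)
```

A zero determinant for the dependent pair and a nonzero one for the other pair is the numerical counterpart of the λ condition quoted in the snippet.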

Ultrahigh Dimensional Feature Selection: Beyond The Linear Model

... justified by Fan & Fan (2008) under some ideal situations. However, common sense can carry us only so far. As indicated above and illustrated further in Section 4.1, it is easy to construct features that are marginally unrelated, but jointly related with the response. Such features will be screened ...


Power Point Presentation

... What We Need to Have to Do Inference • Construct 95% confidence interval for the slope • Get p-values for the t-statistic • This will allow us to state whether there is a statistically significant association between the independent and dependent variables – Test the hypothesis that β1 = 0 • SPSS, ...


Powerpoint for Chapter 15

... variance of the Y scores is uniform for all values of X – If the Y scores are evenly spread above and below the regression line for the entire length of the line, the relationship is homoscedastic – If the variance around the regression line is greater at one end or the other, the relationship is ...

Regression - NYU Stern

... and less accurate for other parts (larger σ). This property is called homoscedasticity, and its violation is called heteroscedasticity. A violation of this assumption means that the least squares estimates are not as efficient as they could be in estimating the true parameters, and better estimates ...


Multiple Linear Regression Review

... • Analyze the output and make changes in the model; this is where the action is. • Test the regression result on "out-of-sample" data ...

Worksheet of Nov. 22

... A natural goal is to try to use the distance of a destination to predict the airfare for flying there, and the simplest model for this prediction is to assume that a straight line summarizes the relationship between distance and airfare. 1. Place a straightedge over the scatterplot above so that the ...


Slide 1

... Chapter 10: Simple Linear Regression A model in which a variable, X, explains another variable, Y, using a linear structure, with allowance for error, e—the unexplained part of Y: Y = b + m*X + e ...

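
The model Y = b + m*X + e in the snippet above can be fitted by ordinary least squares. The sketch below is an illustration under assumptions: the function name `fit_line` and the data are hypothetical and do not come from the source slide. It estimates the intercept b and slope m, then returns the residuals e as the unexplained part of Y.

```python
def fit_line(xs, ys):
    """Least-squares estimates for Y = b + m*X + e.

    Returns (b, m, residuals), where residuals[i] = ys[i] - (b + m*xs[i]).
    """
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    m = sxy / sxx            # slope
    b = y_bar - m * x_bar    # intercept
    residuals = [y - (b + m * x) for x, y in zip(xs, ys)]
    return b, m, residuals


# Illustrative data (assumed, not from the source).
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

b, m, e = fit_line(xs, ys)
print(f"intercept b = {b:.3f}, slope m = {m:.3f}")
```

With an intercept in the model, the least-squares residuals sum to zero up to floating-point rounding, which is a quick sanity check on any fit.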

Weighted Ridge Estimation in a Collective Bargaining Context

... sectional units facing exactly the same contract with a single schedule of wages and benefits. Thus, there are no differences in the wages and benefits offered to the different locals. Thus, the only real differences between the locals in real expected compensation lie in the rate of change in the c ...

Worksheet 4

... A natural goal is to try to use the distance of a destination to predict the airfare for flying there, and the simplest model for this prediction is to assume that a straight line summarizes the relationship between distance and airfare. 1. Place a straightedge over the scatterplot above so that the ...


Chapter14 - Karen A. Donahue, Ph.D.

... The association is negative when it slopes down. Your book shows a positive relationship, because cases with high scores on X also tend to have high scores on Y. For a negative relationship, high scores on X would tend to have low scores on Y, and vice versa ...

Logistic Regression & Survival Analysis

... because the relationship between the DV (a discrete variable) and a predictor is non-linear ...


Oct 10

... response variable y changes as an explanatory variable x changes. We can use a regression line to predict the value of y for a given value of x. Example: Predict the number of new adult birds that join the colony based on the percent of adult birds that return to the colony from the previous year. I ...


IPPTChap004

... Summary: If the F-statistic exceeds the critical F-value, the regression equation is statistically significant. In multiple regression, the coefficients measure the change in Y associated with a one-unit change in that explanatory variable. Quadratic regression models are appropriate when the cu ...

Introduction to Applied Statistics

... The null hypothesis implies that SST = SSE, or SSR = 0. MSR = SSR/1 = variance explained by regression. F = MSR/MSE. If F > critical value, it is likely that b1 ≠ 0, or that the regression line is significant ...
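
The quantities named in the snippet above (SST, SSR, SSE, MSR, MSE, F) can be computed directly for a simple regression. This is a minimal sketch with hypothetical data and a hypothetical function name, `f_statistic`. It assumes the standard definition MSE = SSE/(n − 2) for one predictor, which the truncated snippet does not state. Looking up the critical F-value is left out, since it requires a table or a statistics library.

```python
def f_statistic(xs, ys):
    """Return (sst, ssr, sse, f) for the simple regression of ys on xs.

    Uses MSR = SSR / 1 and MSE = SSE / (n - 2), so F = MSR / MSE.
    """
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b1 = sxy / sxx                 # slope estimate
    b0 = y_bar - b1 * x_bar        # intercept estimate
    fitted = [b0 + b1 * x for x in xs]
    sst = sum((y - y_bar) ** 2 for y in ys)                # total variation
    ssr = sum((f - y_bar) ** 2 for f in fitted)            # explained
    sse = sum((y - f) ** 2 for y, f in zip(ys, fitted))    # unexplained
    msr = ssr / 1
    mse = sse / (n - 2)
    return sst, ssr, sse, msr / mse


# Illustrative data (assumed, not from the source).
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

sst, ssr, sse, f = f_statistic(xs, ys)
print(f"SST = {sst:.3f}, SSR = {ssr:.3f}, SSE = {sse:.3f}, F = {f:.1f}")
```

Under the null hypothesis b1 = 0, SSR would be close to zero and F would be small; a large F relative to the critical value is evidence against the null.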

Logistic regression

... Multiple linear regression – residual error and estimations. As the collected data is not expected to fall in a plane, an error term must be added ...

L1 penalized LAD estimator for high dimensional linear

... where Y = (y1 , y2 , · · · , yn )′ is the n dimensional vector of outcomes, X is the n × p design matrix, and z = (z1 , z2 , · · · , zn )′ is the n dimensional vector of measurement errors (or noises). We assume X = (X1 , X2 , · · · , Xp ) where Xi ∈ Rn denotes the ith regressor or variable. Through ...


Chapter 14 iClicker Questions

... a) Graph a has a smaller standard error of estimate. b) Graph b has a smaller standard error of estimate. c) The standard error of estimate for both graphs is homogenous. d) The standard error of estimate for both graphs cannot be determined because they contain outliers which distort the data. 7. T ...


Cl19

... 1) There are different values of Y for each X; however, these different Ys are relatively close in value (the variability in Y associated with a given X is less than the overall variability in Y) 2) knowing X allows prediction of approximately what Y will be: data points will fall near the regression ...

Limitation of ridge regression

... It can be shown that the expected value of the sum of these squared errors (bk* − βk*)² is given by: ...