* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Log Tuning

Survey

Document related concepts

Transcript

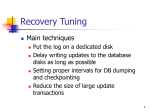

OS and Hardware Tuning Tuning Considerations OS Threads Thread Switching Priorities DB buffer size File System Disk layout and access Storage subsystem Virtual Memory Hardware Configuring the disk array Using the controller cache Components upgrades Multiprocessor Architectures Threads Shared vs. Dedicated mode: Fewer active threads than connected users trades increased waiting time for some users for decreased thread switching (and increased overall throughput). Switching control from one thread to another is expensive Long time slices to avoid switches, e.g. 1 sec. Transactions that do not all run at the same priority may incur in priority inversion Database system should not run below priority of other applications Threads Priority Inversion Three transactions: T1, T2, T3 in priority order (high to low) 1. T1 Priority #1 2. Priority #2 3. T2 Priority #3 T3 Transaction states running waiting T3 obtains lock on x and is preempted T1 blocks on x lock, so is descheduled T2 does not access x and runs for a long time Net effect: T1 waits for T2 Watch priority Avoid priority inversion Give all transactions the same priority (recommended by Oracle). Can lead to undesirable fairness. Dynamic priorities: Holder of lock inherits priority of highest priority waiter of lock (SQL Server) Tuning Considerations OS Threads Thread Switching Priorities DB buffer size File System Disk layout and access Storage subsystem Virtual Memory Hardware Configuring the disk array Using the controller cache Components upgrades Multiprocessor Architectures Impact of the buffer Goal of the buffer: reduce the nb of physical accesses to secondary memory (usually disks) The impact of the buffer on the number of physical accesses depends on three parameters: Logical reads and writes: Pages that the DBMS accesses via system read and write commands DBMS page replacements: Physical writes to disk that occur when a page must be brought into the bufer; there are no free pages; and the occupied pages are dirty Some of these will be in the buffer; others will be transalated to physical reads and writes By keeping the DB disks as up to date as possible, the tumer can ensure replacements occur rarely OS paging: physical accesses to disk that occur when part of the buffer space lies outside RAM. This should never happen. Database Buffer DATABASE PROCESSES hit ratio = (logical acc. - physical acc.) / (logical acc.) UNSTABLE MEMORY RAM LOG BUFFER DATABASE BUFFER Paging Disk LOG DATA STABLE MEMORY DATA Buffer too small, then hit ratio too small Buffer too large, risk of paging. Recommended strategy: monitor hit ratio and increase buffer size until hit ratio flattens out. If there is still paging, then buy memory. Database Buffer Size Scan Query Throughput (Queries/sec) 0.1 0.08 0.06 0.04 0.02 0 0 200 400 600 800 1000 Buffer Size (Mb) SQL Server 7 on Windows 2000 630 Mb relation -- Warm buffer(the table is scanned once before each run) Scan query: Multipoint Query Throughput (Queries/sec) 160 120 Multipoint query: 80 40 0 0 200 400 600 Buffer Size (Mb) 800 1000 Either relation accessed in RAM, or entire relation accessed on disk. This is because of LRU replacement policy Throughput increases linearly with buffer size up to the point where all data is accessed from RAM. Tuning Considerations OS Threads Thread Switching Priorities DB buffer size File System Disk layout and access Storage subsystem Virtual Memory Hardware Configuring the disk array Using the controller cache Components upgrades Multiprocessor Architectures Tunable parameters for file systems Size of disk chunks allocated at one time Allocate long sequential slices of disk to files that tend to be scanned. History or log file Scan-intensive file Usage factor on disk pages: percentage of a page that can be utilized, yet still permitting a further insertion Depending on scan/update ratio High utilization helps scan because fewer pages need be scanned (provided there are no overflows) Low utilization reduces likelihood of overflows when updates change the size of a record (e.g., string fields inserted with NULL value). Number of pages that may be prefetched Prefetching: strategy used to speed up table/index scans by physically reading ahead more pages than requested by a query at a specific point in the hope that future requests be logically fulfilled. Useful for queries that scan files Usage Factor Throughput (Trans/sec) 0.2 0.15 0.1 scan 0.05 DB2 UDB v7.1 on Windows 2000 Scan lineitem table (aggregation) Throughput increases significantly with usage factor. 0 70 80 90 100 Usage Factor (%) The bigger the usage factor the fuller the pages are The fewest pages have to be read Prefetching Throughput (Trans/sec) 0.2 0.15 0.1 scan 0.05 0 32Kb 64Kb 128Kb Prefetching 256Kb DB2 UDB v7.1 on Windows 2000 Scan lineitem table (aggregation) Throughput increases up to a certain point when prefetching size increases. Tuning Considerations OS Threads Hardware Thread Switching Priorities DB buffer size File System Disk layout and access Storage subsystem Virtual Memory Configuring the disk array Using the controller cache Components upgrades Multiprocessor Architectures RAID Levels (recap) RAID Level 0: Block striping; nonredundant. Used in high-performance applications where data lost is not critical. RAID Level 1: Mirrored disks with block striping Offers best write performance. Popular for applications such as storing log files in a database system. RAID Level 5: Rotated parity striping Partitions data and parity among all N + 1 disks, rather than storing data in N disks and parity in 1 disk. E.g., with 5 disks, parity block for nth set of blocks is stored on disk (n mod 5) + 1, with the data blocks stored on the other 4 disks. RAID Levels Log File RAID 1 is appropriate Temporary Files RAID 0 is appropriate. Fault tolerance with high write throughput. Writes are synchronous and sequential. No benefits in striping. No fault tolerance. High throughput. Data and Index Files RAID 5 is best suited for read intensive apps. RAID 10 is best suited for write intensive apps. RAID Levels Throughput (tuples/sec) Read-Intensive Read-Intensive: 80000 60000 40000 20000 0 SoftRAID5 RAID5 RAID0 RAID10 RAID1 Single Disk Write-Intensive: Throughput (tuples/sec) Write-Intensive 160 120 80 40 0 SoftRAID5 RAID5 RAID0 RAID10 RAID1 Single Disk Using multiple disks (RAID0, RAID 10, RAID5) increases throughput significantly. Negative impact on performance is obvious with Software RAID5. The controller manages to hide poor RAID5 performances using its cache Controller Cache Read-ahead: Prefetching at the disk controller level. No information on access pattern. Not recommended. Write-back vs. write through: Write back: transfer terminated as soon as data is written to cache. Batteries to guarantee write back in case of power failure Fast cache flushing is a priority Write through: transfer terminated as soon as data is written to disk. Controller Cache Updates on 2 Disks - Cache Size 80Mb SQL Server 7 on Windows 2000. Adaptec ServerRaid controller: Throughput (tuples/sec) 2000 no cache cache 1500 1000 500 Controller cache increases throughput whether operation is cache friendly or not. 0 cache friendly (90Mb) cache unfriendly (900Mb) 80 Mb RAM Write-back mode This controller implements an efficient replacement policy! Hardware Configuration Add Memory Add Disks Increase buffer size without increasing paging Log on separate disk Mirror frequently read file Partition large files Add Processors Off-load non-database applications onto other CPUs Off-load data mining applications to old database copy Increase throughput to shared data Shared memory or shared disk architecture Hardware Configuration SHARED EVERYTHING Site#1 Site#3 SHARED NOTHING (CLUSTER) Site#2 SHARED DISKS