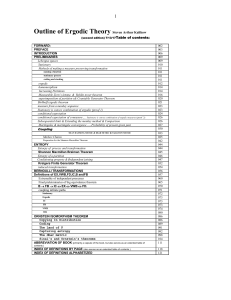

Outline of Ergodic Theory Steven Arthur Kalikow

... Example 2: We are receiving a sequence of signals from outer space coming from a random stationary (stationary means that the probability law is stationary in time) process. We are unable to detect a precise signal but we can encode it by interpreting 5 successive signals as one signal, but this cod ...

... Example 2: We are receiving a sequence of signals from outer space coming from a random stationary (stationary means that the probability law is stationary in time) process. We are unable to detect a precise signal but we can encode it by interpreting 5 successive signals as one signal, but this cod ...

Entropy Demystified : The Second Law Reduced to Plain Common

... the particles acquiring new identity. The correspondence was now between a die and a particle, and between the identity of the outcome of throwing a die, and the identity of the particle. I found this correspondence more aesthetically gratifying, thus making the correspondence between the dice-game ...

... the particles acquiring new identity. The correspondence was now between a die and a particle, and between the identity of the outcome of throwing a die, and the identity of the particle. I found this correspondence more aesthetically gratifying, thus making the correspondence between the dice-game ...

Information Theory Notes

... This particular encoding has the property that it is “prefix-free”, no codeword is a prefix of another codeword. This makes it easy to determine how many bits to read until we can determine which horse is being referenced. Now note some codewords are longer than 3 bits, but on the average, we see th ...

... This particular encoding has the property that it is “prefix-free”, no codeword is a prefix of another codeword. This makes it easy to determine how many bits to read until we can determine which horse is being referenced. Now note some codewords are longer than 3 bits, but on the average, we see th ...

On Generalized Measures of Information with

... Now, let us examine an alternate interpretation of the Shannon entropy functional that is important to study its mathematical properties and its generalizations. Let X be the underlying random variable, which takes values x 1 , . . . xn ; we use the notation ...

... Now, let us examine an alternate interpretation of the Shannon entropy functional that is important to study its mathematical properties and its generalizations. Let X be the underlying random variable, which takes values x 1 , . . . xn ; we use the notation ...

Computing Conditional Probabilities in Large Domains by

... A pair-wise probability matrix P will rank less than b. . . . . . . . . . . . . . . . . The weights ωi, j computed for the RLN. . . . . . . . . . . . . . . . . . . . . . . . Converged values for different τ using equation 3.32 and table 3.5. . . . . . . . . . Weights for functions in the parity exam ...

... A pair-wise probability matrix P will rank less than b. . . . . . . . . . . . . . . . . The weights ωi, j computed for the RLN. . . . . . . . . . . . . . . . . . . . . . . . Converged values for different τ using equation 3.32 and table 3.5. . . . . . . . . . Weights for functions in the parity exam ...

A Unified Maximum Likelihood Approach for Optimal

... p0 = (p0 (a) = 1/3, p0 (b) = 2/3) have the same entropy. In the common setting for these questions, def an unknown underlying distribution p ∈ ∆ generates n independent samples X n = X1 , , . . . ,Xn , and from this sample we would like to estimate a given property f (p). An age-old universal approa ...

... p0 = (p0 (a) = 1/3, p0 (b) = 2/3) have the same entropy. In the common setting for these questions, def an unknown underlying distribution p ∈ ∆ generates n independent samples X n = X1 , , . . . ,Xn , and from this sample we would like to estimate a given property f (p). An age-old universal approa ...

From Boltzmann to random matrices and beyond

... after fifty years of contributions. The proof of this high dimensional phenomenon involves tools from potential theory, from additive combinatorics, and from asymptotic geometric analysis. The circular law phenomenon can be checked in the Gaussian case using the fact that the model is then exactly so ...

... after fifty years of contributions. The proof of this high dimensional phenomenon involves tools from potential theory, from additive combinatorics, and from asymptotic geometric analysis. The circular law phenomenon can be checked in the Gaussian case using the fact that the model is then exactly so ...

Mathematical Structures in Computer Science Shannon entropy: a

... It is formally similar to the Boltzmann entropy associated with the statistical description of the microscopic configurations of many-body systems and the way it accounts for their macroscopic behaviour (Honerkamp 1998, Section 1.2.4.; Castiglione et al. 2008). The work of establishing the relationsh ...

... It is formally similar to the Boltzmann entropy associated with the statistical description of the microscopic configurations of many-body systems and the way it accounts for their macroscopic behaviour (Honerkamp 1998, Section 1.2.4.; Castiglione et al. 2008). The work of establishing the relationsh ...

Shannon entropy: a rigorous mathematical notion at the

... probability distribution p. In plain language, one could correctly say (Balian 2004) that Ip (x) is the surprise in observing x, given prior knowledge on the source summarized in p. Shannon entropy S(p) thus appears as the average missing information, that is, the average information required to spe ...

... probability distribution p. In plain language, one could correctly say (Balian 2004) that Ip (x) is the surprise in observing x, given prior knowledge on the source summarized in p. Shannon entropy S(p) thus appears as the average missing information, that is, the average information required to spe ...

The Entropy of Musical Classification A Thesis Presented to

... to consider the literal and emotional messages music conveys as the most distinguishable components. However, many times, two songs will have lyrics that dictate the same message, or two melodies that evoke the same emotion. Instead, what if we consider the temporal aspect of music to be at the fore ...

... to consider the literal and emotional messages music conveys as the most distinguishable components. However, many times, two songs will have lyrics that dictate the same message, or two melodies that evoke the same emotion. Instead, what if we consider the temporal aspect of music to be at the fore ...

Finite Probability Distributions in Coq

... this context there is an increasing trend to study such systems, where the specification of security requirements is provided in order to establish that the proposed system meets its requirements. Thus, from a scientific point of view, one of the most challenging problems in cryptography is to build ...

... this context there is an increasing trend to study such systems, where the specification of security requirements is provided in order to establish that the proposed system meets its requirements. Thus, from a scientific point of view, one of the most challenging problems in cryptography is to build ...

ENTROPY, SPEED AND SPECTRAL RADIUS OF RANDOM WALKS

... a few rolls one is still uncertain about the value of next roll. Conversely, if X has low entropy, after observing a few outcomes one obtains little information afterwards. For example, say X is a biased coin toss: P(X = 1) = p Then, the entropy is ...

... a few rolls one is still uncertain about the value of next roll. Conversely, if X has low entropy, after observing a few outcomes one obtains little information afterwards. For example, say X is a biased coin toss: P(X = 1) = p Then, the entropy is ...

(pdf)

... after a few rolls one is still uncertain about the value of next roll. Conversely, if X has low entropy, after observing a few outcomes one obtains little information afterwards. For example, say X is a biased coin toss: P(X = 1) = p ...

... after a few rolls one is still uncertain about the value of next roll. Conversely, if X has low entropy, after observing a few outcomes one obtains little information afterwards. For example, say X is a biased coin toss: P(X = 1) = p ...

The entropy per coordinate of a random vector is highly constrained

... term may be explicitly bounded. ...

... term may be explicitly bounded. ...

Maximum Entropy Inference and Stimulus Generalization

... especially for large positive !, so this extra condition was required to obtain a sensible solution. This observation may suggest that some crucial piece of information is missing from the assumed moment set. In fact, the nonmonotonic property of the maximum entropy solution disappears (i.e., no add ...

... especially for large positive !, so this extra condition was required to obtain a sensible solution. This observation may suggest that some crucial piece of information is missing from the assumed moment set. In fact, the nonmonotonic property of the maximum entropy solution disappears (i.e., no add ...

entropy

... where p(x) ∈ [0.0, 1.0], x∈X p(x) = 1.0, and − log p(x) represents the information associated with a single occurrence of x. The unit of information is called a bit. Normally, the logarithm is taken in base 2 or e. For continuity, zero probability does not contribute to the entropy, i.e., 0 log 0 = ...

... where p(x) ∈ [0.0, 1.0], x∈X p(x) = 1.0, and − log p(x) represents the information associated with a single occurrence of x. The unit of information is called a bit. Normally, the logarithm is taken in base 2 or e. For continuity, zero probability does not contribute to the entropy, i.e., 0 log 0 = ...

Uniqueness of maximal entropy measure on essential

... all spanning forests FH of H that give the same relationship (and for which each component of FH contains at least one point on the boundary of H) occur with equal probability. If µ did not have this property, then we could obtain a different measure µ′ from µ by first sampling a random collection S ...

... all spanning forests FH of H that give the same relationship (and for which each component of FH contains at least one point on the boundary of H) occur with equal probability. If µ did not have this property, then we could obtain a different measure µ′ from µ by first sampling a random collection S ...

Exercise Problems: Information Theory and Coding

... Therefore we need only compute the coefficients associated with (say) the positive frequencies, because then we automatically know the coefficients for the negative frequencies as well. Hence the two-fold “reduction” in the input data by being real- rather than complex-valued, is reflected by a corr ...

... Therefore we need only compute the coefficients associated with (say) the positive frequencies, because then we automatically know the coefficients for the negative frequencies as well. Hence the two-fold “reduction” in the input data by being real- rather than complex-valued, is reflected by a corr ...

PDF 0.28MB

... not necessarily effect the amount of information that a shape contains. This notion initially seems counter intuitive since we would tend to believe that shapes with higher curvature would have more information. Higher curvature however is rather a level of degree and not a level of information. Loc ...

... not necessarily effect the amount of information that a shape contains. This notion initially seems counter intuitive since we would tend to believe that shapes with higher curvature would have more information. Higher curvature however is rather a level of degree and not a level of information. Loc ...

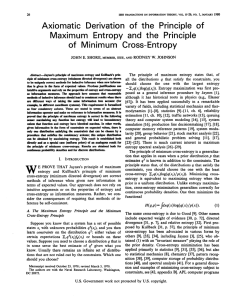

Axiomatic Derivation of the Principle of Maximum Entropy and the

... whether one treats an independent subset of sysminimization. Cross-entropy has properties that are desirtem states in terms of a separate conditional able for an information measure [33], [34], [53], and one density or in terms of the full system density. can argue [54] that it measures the amount o ...

... whether one treats an independent subset of sysminimization. Cross-entropy has properties that are desirtem states in terms of a separate conditional able for an information measure [33], [34], [53], and one density or in terms of the full system density. can argue [54] that it measures the amount o ...

PDF

... theory to quantify the capacity of a noisy channel to send information[1]. This capacity can be expressed using the mutual information between input and output for a single use of the channel: although correlations between subsequent input bits are used to correct errors, they cannot increase the ca ...

... theory to quantify the capacity of a noisy channel to send information[1]. This capacity can be expressed using the mutual information between input and output for a single use of the channel: although correlations between subsequent input bits are used to correct errors, they cannot increase the ca ...

Entropy (information theory)

... sider the example of a poll on some political issue. Usually, such polls happen because the outcome of the poll isn't already known. In other words, the outcome of the poll is relatively unpredictable, and actually performing the poll and learning the results gives some new information; these are ju ...

... sider the example of a poll on some political issue. Usually, such polls happen because the outcome of the poll isn't already known. In other words, the outcome of the poll is relatively unpredictable, and actually performing the poll and learning the results gives some new information; these are ju ...

Spike train entropy-rate estimation using hierarchical Dirichlet

... where s0 denotes the context s with the earliest symbol removed. This choice gives the prior distribution of gs mean gs0 , as desired. We continue constructing the prior with gs00 |gs0 ∼ Beta(α|s0 | gs00 , α|s0 | (1 − gs00 )) and so on until g[] ∼ Beta(α0 p∅ , α0 (1 − p∅ )) where g[] is the probabil ...

... where s0 denotes the context s with the earliest symbol removed. This choice gives the prior distribution of gs mean gs0 , as desired. We continue constructing the prior with gs00 |gs0 ∼ Beta(α|s0 | gs00 , α|s0 | (1 − gs00 )) and so on until g[] ∼ Beta(α0 p∅ , α0 (1 − p∅ )) where g[] is the probabil ...

Compression: 1

... Obviously, there is some structure to this data. However, if we look at it one symbol at a time the structure is difficult to extract. Consider the probabilities: P(1) = P(2) = 0,25, and P(3) = 0,5. The entropy is 1.5 bits/symbol. This particular sequence consists of 20 symbols; therefore, the total ...

... Obviously, there is some structure to this data. However, if we look at it one symbol at a time the structure is difficult to extract. Consider the probabilities: P(1) = P(2) = 0,25, and P(3) = 0,5. The entropy is 1.5 bits/symbol. This particular sequence consists of 20 symbols; therefore, the total ...

Entropy (information theory)

In information theory, entropy (more specifically, Shannon entropy) is the expected value (average) of the information contained in each message received. 'Messages' don't have to be text; in this context a 'message' is simply any flow of information. The entropy of the message is its amount of uncertainty; it increases when the message is closer to random, and decreases when it is less random. The idea here is that the less likely an event is, the more information it provides when it occurs. This seems backwards at first: it seems like messages which have more structure would contain more information, but this is not true. For example, the message 'aaaaaaaaaa' (which appears to be very structured and not random at all [although in fact it could result from a random process]) contains much less information than the message 'alphabet' (which is somewhat structured, but more random) or even the message 'axraefy6h' (which is very random). In information theory, 'information' doesn't necessarily mean useful information; it simply describes the amount of randomness of the message, so in the example above the first message has the least information and the last message has the most information, even though in everyday terms we would say that the middle message, 'alphabet', contains more information than a stream of random letters. Therefore, we would say in information theory that the first message has low entropy, the second has higher entropy, and the third has the highest entropy.In a more technical sense, there are reasons (explained below) to define information as the negative of the logarithm of the probability distribution. The probability distribution of the events, coupled with the information amount of every event, forms a random variable whose average (also termed expected value) is the average amount of information, a.k.a. entropy, generated by this distribution. Units of entropy are the shannon, nat, or hartley, depending on the base of the logarithm used to define it, though the shannon is commonly referred to as a bit.The logarithm of the probability distribution is useful as a measure of entropy because it is additive for independent sources. For instance, the entropy of a coin toss is 1 shannon, whereas of m tosses it is m shannons. Generally, you need log2(n) bits to represent a variable that can take one of n values if n is a power of 2. If these values are equiprobable, the entropy (in shannons) is equal to the number of bits. Equality between number of bits and shannons holds only while all outcomes are equally probable. If one of the events is more probable than others, observation of that event is less informative. Conversely, observing rarer events compensate by providing more information when observed. Since observation of less probable events occurs more rarely, the net effect is that the entropy (thought of as the average information) received from non-uniformly distributed data is less than log2(n). Entropy is zero when one outcome is certain. Shannon entropy quantifies all these considerations exactly when a probability distribution of the source is known. The meaning of the events observed (a.k.a. the meaning of messages) do not matter in the definition of entropy. Entropy only takes into account the probability of observing a specific event, so the information it encapsulates is information about the underlying probability distribution, not the meaning of the events themselves.Generally, entropy refers to disorder or uncertainty. Shannon entropy was introduced by Claude E. Shannon in his 1948 paper ""A Mathematical Theory of Communication"". Shannon entropy provides an absolute limit on the best possible average length of lossless encoding or compression of an information source. Rényi entropy generalizes Shannon entropy.