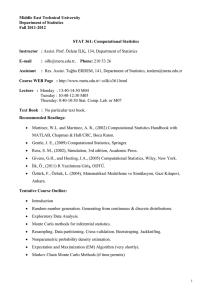

STAT 361: Computational Statistics

... Please do not have last minute requests. Especially, don’t try to contact us right before exams! ...

Lesson1

... For a continuous distribution, p(x), over a range (a,b) (i.e., x=a to x=b), the true mean of x is the first moment of x: ...

sample only Get fully solved assignment, plz drop a mail with your

... Hungarian method algorithm is based on the concept of opportunity cost and is more efficient in solving assignment problems. The following steps are adopted to solve an AP using the Hungarian method algorithm. Step 1: Prepare the row-reduced matrix by selecting the minimum value of each row and subtract ...

Topic 9: The Law of Large Numbers

... So the mean of these running averages remains at µ but the variance is inversely proportional to the number of terms in the sum. The result is the law of large numbers: For a sequence of random variables each independent from the other and having a common distribution X1 , X2 , . . ., lim ...

X - Computer Science and Engineering

... •Pick independent samples from distributions of Level0 parameters •Compute functions using these samples until highest level reached •Iterate by repeating the preceding 2 steps ...

Estimating probability of failure

... uncertainties in the simulation. • The limit on S, or the resistance of the system, is denoted as R(X). The limit state function is g=R-S. • Failure occurs when g=R-S<0. • Unfortunately, an alternative convention denotes the system response as R and the resistance as C (for capacity). ...

Post-doc position Convergence of adaptive Markov Chain Monte

... study of the equi-energy sampler; this algorithm is general enough to provide answers for improving state partition-based MCMC algorithms and to provide answers to molecular dynamics simulations. Another example of adaptive MCMC coming from some techniques used in molecular dynamics for the computat ...

cours1

... We have seen that simulation methods, and in particular the Monte Carlo method, are based on random sampling. Ideally, random sampling would be executed by physical experiments (throwing dice, spinning roulette wheels,...). This would lead to truly random numbers. However, a typical implementation o ...

spdf(X) or (X)

... Analytic operation on continuous distributions difficult; instead operations on discrete distributions implemented : •F (Forward) : Given a function, estimates the distribution of next node in the formula hierarchy •Q (Quantize) : Discretize a pdf to operate on its samples •B (Bandpass) : Decrements ...

Introduction - ODU Computer Science

... numbers • If we can manage to make the uncertainty fairly negligible, Monte Carlo can serve a useful purpose – Good experiments • Ensure the sample should be more rather than less representative in the Monte Carlo computation – Good representation of conclusions • Indicates how likely the solution c ...

F2004

... This course provides an introduction to stochastic operations research. It deals with the formulation and solution of some industrial and business problems involving uncertainty. The emphasis is on mathematical models and their applications. Textbook: Course Notes Prerequisite: Introductory courses ...

Course Wrapup Physics 3730/6720 Spring Semester 2016

... Numerical integration; trapezoid, Monte Carlo... ...

Monte Carlo Methods in Scientific Computing

... been particularly extensively studied and led to spectacular results: The first-order transition of the corresponding bulk liquid crystal is drastically softened in the porous glass and becomes continuous, an effect that was not attributed to finite-size effects but rather to the influence of random ...

Monte Carlo Methods

... terminal velocity). The results of the probabilistic analysis can take many forms depending on the specific application. In the example of the thruster where the terminal velocity is critical to the performance and efficiency of the thruster, the following information might be desired from a probabi ...

Computational Complexity of Two and Multistage Stochastic Programming Problems

... ABSTRACT A standard approach to solving stochastic programming problems is by discretization of the involved probability distributions and consequently solving the obtained (large scale) optimization problem. With increase of the number of random parameters, this typically results in an exponential ...

mod

... a scheme based upon measuring the times between cosmic ray hits in a detector, or on some physical process such as the generation of noise in an electronic circuit. Such numbers would be random in the sense that it would be impossible to predict the value of the next number from previous numbers but ...

Monte Carlo Method www.AssignmentPoint.com Monte Carlo

... These flows of probability distributions can always be interpreted as the distributions of the random states of a Markov process whose transition probabilities depend on the distributions of the current random states (see McKean-Vlasov processes, nonlinear filtering equation). In other instances we ...

Lecture 1 () - Strongly Correlated Systems

... TR satisfies the detailed balance condition but TS satisfies only the stationarity condition. ...

Monte Carlo Simulations

... accomplished by means of an efficient Monte Carlo method, even in cases where no explicit formula for the a priori distribution is available. The best-known importance sampling method, the Metropolis algorithm, can be generalized, and this gives a method that allows analysis of (possibly highly nonl ...

Markov Chain Monte Carlo (MCMC)

... Monte Carlo methods (or Monte Carlo experiments) are a broad class of computational algorithms that rely on repeated random sampling to obtain numerical results ...

Statistical Computing and Simulation

... Evaluate the following quantities by both numerical and Monte Carlo integration, and compare their errors with respect to the numbers of observations used. Also, propose at least two simulation methods to reduce the variance of Monte Carlo integration and compare their variances. ...

Summary Team members: Weiqian Yan, Kanchan Khurad, and Yi

... about a system by simulating it with random sampling. It is often the simplest way to solve a problem. A Monte Carlo method is any process that consumes random numbers; each calculation is a numerical experiment, and sources of error must be controllable. Clustering is the task of grouping a set of ob ...

VARIOUS ESTIMATIONS OF π AS

... The Monte Carlo method uses pseudo-random numbers (numbers which are generated by a formula using the selection of one random “seed” number) as values for certain variables in algorithms to generate random variates of chosen probability functions to be used in simulations of statistical models or to ...

Statistical Computing and Simulation

... could count “nlminb” as one of the methods (for replacing Newton’s method). Also, similar to what we saw in the class, discuss the influence of starting points on the number of iterations. Experiment with as many variance reduction techniques as you can think of to apply the problem of evalua ...

Monte Carlo method

Monte Carlo methods (or Monte Carlo experiments) are a broad class of computational algorithms that rely on repeated random sampling to obtain numerical results. They are often used in physical and mathematical problems and are most useful when it is difficult or impossible to use other mathematical methods. Monte Carlo methods are mainly used in three distinct problem classes: optimization, numerical integration, and generating draws from a probability distribution.

In physics-related problems, Monte Carlo methods are quite useful for simulating systems with many coupled degrees of freedom, such as fluids, disordered materials, strongly coupled solids, and cellular structures (see cellular Potts model, interacting particle systems, McKean-Vlasov processes, kinetic models of gases). Other examples include modeling phenomena with significant uncertainty in inputs, such as the calculation of risk in business and, in math, the evaluation of multidimensional definite integrals with complicated boundary conditions. In application to space and oil exploration problems, Monte Carlo-based predictions of failure, cost overruns and schedule overruns are routinely better than human intuition or alternative "soft" methods.

In principle, Monte Carlo methods can be used to solve any problem having a probabilistic interpretation. By the law of large numbers, integrals described by the expected value of some random variable can be approximated by taking the empirical mean (a.k.a. the sample mean) of independent samples of the variable. When the probability distribution of the variable is too complex, we often use a Markov chain Monte Carlo (MCMC) sampler. The central idea is to design a judicious Markov chain model with a prescribed stationary probability distribution. By the ergodic theorem, the stationary probability distribution is approximated by the empirical measures of the random states of the MCMC sampler.

In other important problems we are interested in generating draws from a sequence of probability distributions satisfying a nonlinear evolution equation. These flows of probability distributions can always be interpreted as the distributions of the random states of a Markov process whose transition probabilities depend on the distributions of the current random states (see McKean-Vlasov processes, nonlinear filtering equation). In other instances we are given a flow of probability distributions with an increasing level of sampling complexity (path space models with an increasing time horizon, Boltzmann-Gibbs measures associated with decreasing temperature parameters, and many others). These models can also be seen as the evolution of the law of the random states of a nonlinear Markov chain.

A natural way to simulate these sophisticated nonlinear Markov processes is to sample a large number of copies of the process, replacing in the evolution equation the unknown distributions of the random states by the sampled empirical measures. In contrast with traditional Monte Carlo and Markov chain Monte Carlo methodologies, these mean field particle techniques rely on sequential interacting samples. The terminology mean field reflects the fact that each of the samples (a.k.a. particles, individuals, walkers, agents, creatures, or phenotypes) interacts with the empirical measures of the process. When the size of the system tends to infinity, these random empirical measures converge to the deterministic distribution of the random states of the nonlinear Markov chain, so that the statistical interaction between particles vanishes.
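
As an illustration of the two sampling ideas described above, the following minimal Python sketch estimates an expected value first as the sample mean of independent draws (law of large numbers) and then as an average along a random-walk Metropolis chain (ergodic theorem). The function names `iid_estimate` and `metropolis_estimate`, the uniform proposal, the step size, and the burn-in length are all assumptions chosen for this example and do not come from the text; the standard normal target in the usage section is likewise only a convenient test case, for which the expected value of x squared is 1.

```python
import math
import random


def iid_estimate(f, sampler, n, rng):
    """Plain Monte Carlo: average f over n independent draws.

    `sampler(rng)` is a hypothetical callable returning one draw.
    """
    return sum(f(sampler(rng)) for _ in range(n)) / n


def metropolis_estimate(f, log_density, n, rng, step=1.0, burn_in=1000, x0=0.0):
    """Random-walk Metropolis: average f along a Markov chain whose
    stationary distribution has the given (unnormalized) log density.

    The symmetric uniform proposal and the burn-in length are
    illustrative choices, not prescribed by the text.
    """
    x = x0
    log_p = log_density(x)
    total = 0.0
    for i in range(burn_in + n):
        proposal = x + rng.uniform(-step, step)  # symmetric proposal
        log_p_new = log_density(proposal)
        # Accept with probability min(1, p(proposal) / p(x)).
        # 1 - random() lies in (0, 1], so the logarithm is defined.
        if math.log(1.0 - rng.random()) < log_p_new - log_p:
            x, log_p = proposal, log_p_new
        if i >= burn_in:
            total += f(x)  # rejected moves repeat the current state
    return total / n


if __name__ == "__main__":
    rng = random.Random(0)

    def square(x):
        return x * x

    # Independent draws from a standard normal distribution.
    print(iid_estimate(square, lambda r: r.gauss(0.0, 1.0), 100_000, rng))
    # Same target, reached only through its unnormalized log density.
    print(metropolis_estimate(square, lambda x: -0.5 * x * x, 100_000, rng, step=2.0))
```

Both estimates should approach 1 as the number of samples grows. The Metropolis version needs only the density up to a normalizing constant, which is why it remains usable when direct sampling is impractical; its draws are correlated, so for the same sample count its error is typically larger than that of independent sampling.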