Microprocessor Types and Specifications

... The latest development is the introduction of dual-core processors from both Intel and AMD. Dual-core processors have two full CPU cores operating off of one CPU package—in essence enabling a single processor to perform the work of two processors. Although dual-core processors don’t make games (whic ...

Threads Threads, User vs. Kernel Threads, Java Threads, Threads

... Threads can also be supported in kernel space. The kernel has data structures and functionality to deal with threads. Most modern OSes support kernel threads. In fact, Linux doesn’t really distinguish between processes and threads (same data structure) ...

HIGH PERFORMANCE COMPUTING APPLIED TO CLOUD COMPUTING 2015

... 2.1.2 History of High Performance Computing The birth of high performance computing can be traced back to the start of commercial computing in the 1950s. At that time, the only type of commercially available computing was mainframe computing. One of the main tasks required was billing, a task that ...

Threads - TheToppersWay

... memory and arithmetic/logic units for each of several cores. This would allow each core to perform operations at the same time as the others. ...

Java Threads - Users.drew.edu

... • Even a single application is often expected to do more than one thing at a time. • Example: Streaming video application must simultaneously: – Read the digital audio off the network – Decompress it – Manage playback ...

ASC Programming - Computer Science

... Parallel version normally executes both “bodies”. First finds the responders for the conditional. If there are any responders, the responding PEs execute the body of the IF. Next identifies the non-responders for the conditional. If there are non-responders, those PEs execute the body of the ...

Colin Roby and Jaewook Kim - WindowsThread

... Two hardware threads share one core. This is known as simultaneous multi-threading (aka Hyper-Threading) ...

Operating Systems Abstractions for Software Packet Processing in Datacenters

... at the core of the datacenter design is to provide a highly available, high performance computing and storage infrastructure while relying solely on low cost, commodity components. Further, in the past few years, entire datacenters have become a commodity themselves, and are increasingly being netwo ...

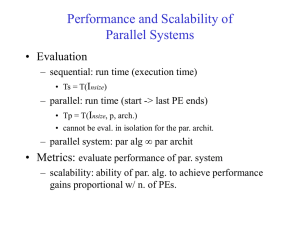

Performance and Scalability of Parallel Systems

... • Determines the ease with which a parallel system can maintain a constant efficiency and thus achieve speedups increasing in proportion to the number of processors • A small isoefficiency function means that small increments in the problem size are sufficient for the efficient utilization of an in ...

Concurrency: Threads, Address Spaces, and Processes

... 1. Multiple applications need to be protected from one another. 2. Multiple applications may need to coordinate through additional mechanisms. ...

Allinea Tools - Computational Information Systems Laboratory

... • Fetches values from every process, compares and then groups by value • Summary of NaNs, Infs and statistics ...

The Benefits of Clustering in Shared Address Space Multiprocessors

... time of the shared cache as the number of processors per cluster increases. These latency values are based on previous studies of shared primary cache architectures[9]. The latencies of local and remote misses are consistent with the relative processor speeds, DRAM speeds and network speeds of moder ...

Paper - George Karypis

... hand corner of the matrix will be idle for more time as the size of the matrix increases. But the two-dimensional cyclic mapping solves this problem by localizing the pipeline to a fixed portion of the matrix independent of the size of the matrix. This is a very desirable property for achieving sca ...

NVIDIA® DirectTouch™ Architecture Whitepaper

... Simplified Design- DirectTouch architecture allows the touch panel to directly interface with the mainboard instead of going through a flex touch control module. The flash memory, static RAM, power regulators, and the crystal required for the touch controller are reduced or eliminated. The interface ...

Multi-core processor

A multi-core processor is a single computing component with two or more independent processing units (called "cores"), which are the units that read and execute program instructions. The instructions are ordinary CPU instructions such as add, move data, and branch, but the multiple cores can run multiple instructions at the same time, increasing overall speed for programs amenable to parallel computing. Manufacturers typically integrate the cores onto a single integrated circuit die (known as a chip multiprocessor or CMP), or onto multiple dies in a single chip package.

Processors were originally developed with only one core. In the mid-1980s Rockwell International manufactured versions of the 6502 with two 6502 cores on one chip as the R65C00, R65C21, and R65C29, sharing the chip's pins on alternate clock phases. Other multi-core processors were developed in the early 2000s by Intel, AMD and others.

Multi-core processors may have two cores (dual-core CPUs, for example, AMD Phenom II X2 and Intel Core Duo), four cores (quad-core CPUs, for example, AMD Phenom II X4, Intel's i5 and i7 processors), six cores (hexa-core CPUs, for example, AMD Phenom II X6 and Intel Core i7 Extreme Edition 980X), eight cores (octa-core CPUs, for example, Intel Xeon E7-2820 and AMD FX-8350), ten cores (deca-core CPUs, for example, Intel Xeon E7-2850), or more.

A multi-core processor implements multiprocessing in a single physical package. Designers may couple cores in a multi-core device tightly or loosely. For example, cores may or may not share caches, and they may implement message passing or shared-memory inter-core communication methods. Common network topologies to interconnect cores include bus, ring, two-dimensional mesh, and crossbar. Homogeneous multi-core systems include only identical cores; heterogeneous multi-core systems have cores that are not identical. Just as with single-processor systems, cores in multi-core systems may implement architectures such as superscalar, VLIW, vector processing, SIMD, or multithreading.

Multi-core processors are widely used across many application domains including general-purpose, embedded, network, digital signal processing (DSP), and graphics.

The improvement in performance gained by the use of a multi-core processor depends very much on the software algorithms used and their implementation. In particular, possible gains are limited by the fraction of the software that can run in parallel on multiple cores; this effect is described by Amdahl's law. In the best case, so-called embarrassingly parallel problems may realize speedup factors near the number of cores, or even more if the problem is split up enough to fit within each core's cache(s), avoiding use of much slower main system memory. Most applications, however, are not accelerated as much unless programmers invest a prohibitive amount of effort in refactoring the whole problem. The parallelization of software is a significant ongoing topic of research.
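
As a rough illustration of the limit described by Amdahl's law, the sketch below computes the theoretical speedup bound 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel and n is the number of cores. The function name `amdahl_speedup` and the 90% parallel fraction are assumptions chosen for illustration. The model ignores cache effects and communication overhead, so real programs may do better or worse.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup bound from Amdahl's law.

    parallel_fraction: share of the run time that can be parallelized (0..1).
    cores: number of cores working on the parallel part (>= 1).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part takes the same time; the parallel part is divided by cores.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workload: 90% of the run time is parallelizable.
    for cores in (2, 4, 8, 16):
        print(f"{cores:>2} cores: speedup bound {amdahl_speedup(0.9, cores):.2f}")
```

Under this model, a program that is 90% parallelizable can never exceed a speedup of 10, however many cores are added, because the remaining 10% serial portion always takes the same time.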