Thermodynamics and Heat Powered Cycles

... This integrated engineering textbook is the result of fourteen semesters of CyclePad usage and evaluation of a course designed to exploit the power of the software, and to chart a path that truly integrates the computer with education. The primary aim is to give students a thorough grounding in bot ...

Role of the Sarcoplasmic Reticulum Ca2+-ATPase

... the dissipation of the proton electrochemical gradient formed across the inner mitochondrial membrane during respiration. In order to restore the gradient and to prevent the decrease of the cytosolic ATP concentration, the proton leakage promoted by the UCP leads to increased mitochondrial respirati ...

2002 Lyman ETFS

... The experimental design was such as to ensure that meaningful, spatially resolved heat transfer coefficients could be measured along several streamwise louvers over a range of Reynolds numbers. To ensure good measurement resolution, the louver model was scaled up by a factor of 20. The flow conditions were ...
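When a model is scaled up geometrically and tested in the same fluid, keeping the Reynolds number Re = ρVL/μ unchanged means the velocity must fall by the same factor that the length grows. The sketch below illustrates this for the factor of 20 quoted above; the air properties, louver pitch and velocity are hypothetical values chosen only for illustration, not data from the study.

```python
def reynolds(rho, velocity, length, mu):
    """Reynolds number Re = rho * V * L / mu (SI units)."""
    return rho * velocity * length / mu


def matched_velocity(v_full, scale):
    """Velocity that keeps Re unchanged in a model scaled by `scale` (same fluid)."""
    return v_full / scale


# Hypothetical, illustrative values (not taken from the study): air near room temperature.
RHO = 1.2        # kg/m^3, assumed density
MU = 1.8e-5      # Pa*s, assumed dynamic viscosity
PITCH = 1.0e-3   # m, hypothetical full-size louver pitch
SCALE = 20       # model scale factor quoted in the text

v_full = 5.0                              # m/s, hypothetical full-size velocity
v_model = matched_velocity(v_full, SCALE)  # slower flow over the larger model

re_full = reynolds(RHO, v_full, PITCH, MU)
re_model = reynolds(RHO, v_model, PITCH * SCALE, MU)
print(f"Re full-size: {re_full:.1f}, Re model: {re_model:.1f}")
```

Besides easing instrumentation, the larger model runs at a much lower velocity for the same Re, which is one reason scaled-up rigs are convenient.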

Aalborg Universitet: Cooling of the Building Structure by Night-time Ventilation (Artmann, Nikolai)

... assess the impact of different parameters, such as slab thickness, material properties and the surface heat transfer, the dynamic heat storage capacity of building elements was quantified based on an analytical solution of one-dimensional heat conduction in a slab with convective boundary condition. ...
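The parameters named above (slab thickness, material properties, surface heat transfer) can be related through two standard quantities: the thermal diffusivity a = k/(ρc), which sets how deep a daily temperature swing penetrates (δ = √(2a/ω), ω = 2π/P), and the Biot number Bi = hL/k. This is a minimal sketch under stated assumptions, not the analytical model used in the thesis; the concrete properties, surface coefficient and slab thickness are assumed, illustrative values.

```python
import math


def thermal_diffusivity(k, rho, c):
    """a = k / (rho * c), in m^2/s."""
    return k / (rho * c)


def periodic_penetration_depth(a, period_s):
    """Depth at which a sinusoidal surface temperature swing decays to 1/e.

    delta = sqrt(2 a / omega), with omega = 2*pi / period.
    """
    omega = 2.0 * math.pi / period_s
    return math.sqrt(2.0 * a / omega)


def biot_number(h, thickness, k):
    """Bi = h * L / k; here L is taken as the full slab thickness (an assumption)."""
    return h * thickness / k


# Assumed, illustrative values for a concrete slab (not from the thesis).
K = 1.8            # W/(m K)
RHO = 2300.0       # kg/m^3
C = 1000.0         # J/(kg K)
H = 6.0            # W/(m^2 K), assumed convective surface coefficient
THICKNESS = 0.20   # m
DAY = 24 * 3600    # s, diurnal period

a = thermal_diffusivity(K, RHO, C)
delta = periodic_penetration_depth(a, DAY)
bi = biot_number(H, THICKNESS, K)
print(f"a = {a:.2e} m^2/s, 24 h penetration depth = {delta * 100:.1f} cm, Bi = {bi:.2f}")
```

Because the amplitude of the daily swing decays roughly as e^(-x/δ), material lying several penetration depths below the surface takes little part in diurnal storage, which is why slab thickness and surface heat transfer both appear as key parameters.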

hw2-sp11 - Learn Thermo

... 97.11 Btu/lbm 175.90 Btu/lbm the system contains a subcooled liquid, so its temperature must be less than the saturation temperature. We can now use the TFT to directly evaluate T. ...
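The reasoning in this snippet follows the usual phase test for a pure substance: compare a known intensive property (for example specific internal energy u) with its saturated-liquid and saturated-vapor values at the same pressure. A minimal sketch follows; the function name and the numbers in the example are hypothetical and do not reproduce the homework values, whose roles are not clear from the excerpt.

```python
def classify_phase(value, sat_liquid, sat_vapor):
    """Classify a pure-substance state from one intensive property.

    `value`, `sat_liquid` and `sat_vapor` must be the same property
    (e.g. u, u_f, u_g) evaluated at the same pressure.
    Returns (phase, quality); quality is None outside the two-phase region.
    """
    if sat_liquid >= sat_vapor:
        raise ValueError("expected sat_liquid < sat_vapor")
    if value < sat_liquid:
        return "subcooled liquid", None      # T below T_sat
    if value > sat_vapor:
        return "superheated vapor", None     # T above T_sat
    # Between the saturation values: liquid-vapor mixture at T = T_sat.
    quality = (value - sat_liquid) / (sat_vapor - sat_liquid)
    return "saturated mixture", quality


# Hypothetical property values (Btu/lbm), for illustration only.
print(classify_phase(80.0, 100.0, 1000.0))
print(classify_phase(550.0, 100.0, 1000.0))
```

Only in the subcooled or superheated branches is a further table lookup needed to find T; in the mixture branch T is simply the saturation temperature.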

... 97.11 Btu/lbm 175.90 Btu/lbm the system contains a subcooled liquid and temperature must be less than the saturation temperature. We can now use the TFT to directly evaluate T. ...

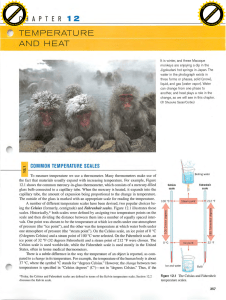

A Heat Transfer Textbook by John H. Lienhard IV and John H

... Verne’s description in Around the World in Eighty Days in which, to win a race, a crew burns the inside of a ship to power the steam engine. The combustion of nonrenewable fossil energy sources (and, more recently, the fission of uranium) has led to remarkably intense energy releases in power-generat ...

Unsteady coupled convection, conduction and radiation simulations

... In the aeronautical industry, energy generation relies almost exclusively on the combustion of hydrocarbons. The best way to improve the efficiency of such systems while controlling their environmental impact is to optimize the combustion process. With the continuous rise of computational power, s ...

2007 Lynch TE

... that region results in high heat transfer rates, and also tends to sweep coolant away from the endwall surface. Assembly of individual turbine components inherently results in gaps between parts. The large operational range of a gas turbine results in significant thermal expansion, making these gaps ...
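The size of the thermally driven gap changes mentioned here can be estimated to first order with free linear expansion, ΔL = αLΔT. A minimal sketch, assuming a single uniform temperature rise and an assumed expansion coefficient; all numbers are hypothetical and not taken from the study.

```python
def linear_expansion(alpha, length, delta_t):
    """Free linear thermal expansion: dL = alpha * L * dT (same length units as L)."""
    return alpha * length * delta_t


# Hypothetical, illustrative values (not taken from the study):
ALPHA = 1.3e-5     # 1/K, assumed mean expansion coefficient of a metal part
LENGTH = 0.10      # m, hypothetical component length
DELTA_T = 1000.0   # K, hypothetical rise from cold assembly to operation

growth = linear_expansion(ALPHA, LENGTH, DELTA_T)
print(f"growth = {growth * 1000:.2f} mm")
```

With these assumed values a 10 cm part grows by about 1.3 mm, which shows why assembly gaps between hot-section components cannot be made arbitrarily small.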

A relation between continental heat flow and the seismic reflectivity

... Different approaches to studying the cause of the reflectivity of the lower crust include attempts to trace the lower crustal layering to surface outcrop or to drillable subcrop in areas of deep erosion, and attempts to relate the distribution of reflectivity to geologic history, tecto ...

Energy performance of buildings — Calculation of energy use for

... Total heat transfer by ventilation; Ventilation heat transfer coefficients; Input data and boundar ...

SCHAUM'S OUTLINE OF THEORY AND PROBLEMS OF HEAT

... text and/or (2) a supplemental aid for students taking a college course in heat transfer at the junior or senior level. To fulfill these dual roles, several factors must be considered. One of these is the long-standing argument over the nature of treatment, that is, mathematical deriv ...

Thermodynamics

... the properties of the matter are considered as continuous functions of space variables. Although all matter is composed of a very large number of molecules, the concept of continuum assumes a continuous distribution of mass within the matter or system with no empty space, instead of the actual conglomeration of se ...

Concentration Processes under Tubesheet Sludge Piles in Nuclear

... Solute Concentration under Sludge Piles. On the free, unobstructed tube surfaces of a nuclear steam generator, heat is transferred by nucleate boiling. In this process, the phase change occurs on the tube surface. The bubbles generated move away from the surface due to buoyancy forces and large quant ...

Heat exchanger

A heat exchanger is a device used to transfer heat between one or more fluids. The fluids may be separated by a solid wall to prevent mixing or they may be in direct contact. They are widely used in space heating, refrigeration, air conditioning, power stations, chemical plants, petrochemical plants, petroleum refineries, natural-gas processing, and sewage treatment. The classic example of a heat exchanger is found in an internal combustion engine in which a circulating fluid known as engine coolant flows through radiator coils and air flows past the coils, which cools the coolant and heats the incoming air.
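In the radiator example, the heat released by the coolant equals the heat picked up by the air if losses to the surroundings are neglected, so Q = ṁc_pΔT applied to each stream links the two temperature changes. A minimal sketch of that energy balance; the flow rates, specific heats and temperatures are hypothetical operating values chosen only for illustration.

```python
def heat_rate(m_dot, cp, t_in, t_out):
    """Q = m_dot * cp * (t_in - t_out), in W; positive when the stream gives up heat."""
    return m_dot * cp * (t_in - t_out)


def air_outlet_temperature(q, m_dot_air, cp_air, t_air_in):
    """Air outlet temperature, assuming all heat released by the coolant goes into the air."""
    return t_air_in + q / (m_dot_air * cp_air)


# Hypothetical operating point (illustrative only, not from the text).
M_COOLANT = 0.5       # kg/s
CP_COOLANT = 3600.0   # J/(kg K), assumed water-glycol mixture
M_AIR = 1.5           # kg/s
CP_AIR = 1005.0       # J/(kg K)

q = heat_rate(M_COOLANT, CP_COOLANT, 95.0, 85.0)   # coolant cools from 95 to 85 degC
t_air_out = air_outlet_temperature(q, M_AIR, CP_AIR, 25.0)
print(f"Q = {q / 1000:.1f} kW, air leaves at {t_air_out:.1f} degC")
```

The balance alone fixes the outlet temperatures; sizing the coils to actually achieve that duty would additionally require a heat-transfer rate model for the exchanger.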