Introduction to Matrices

... on the right. In this way, the transformation will read like a sentence. This is especially important when more than one transformation takes place. For example, if we wish to transform a vector v by the matrices A, B, and C, in that order, we write vABC. Notice that the matrices are listed in order ...

... on the right. In this way, the transformation will read like a sentence. This is especially important when more than one transformation takes place. For example, if we wish to transform a vector v by the matrices A, B, and C, in that order, we write vABC. Notice that the matrices are listed in order ...

A Superfast Algorithm for Confluent Rational Tangential

... of parameters. Manipulating directly on these parameters allows us to design efficient fast algorithms. One of the most fundamental matrix problems is that of multiplying a (structured) matrix with a vector. Many fundamental algorithms such as convolution, Fast Fourier Transform, Fast Cosine/Sine Tr ...

... of parameters. Manipulating directly on these parameters allows us to design efficient fast algorithms. One of the most fundamental matrix problems is that of multiplying a (structured) matrix with a vector. Many fundamental algorithms such as convolution, Fast Fourier Transform, Fast Cosine/Sine Tr ...

- 1 - AMS 147 Computational Methods and Applications Lecture 17

... 2) go back to the modeling process to formulate a well-conditioned system A geometric view an ill-conditioned system: [Draw two nearly perpendicular lines to show a well-conditioned system] [Draw two nearly parallel lines to show an ill-conditioned system] ...

... 2) go back to the modeling process to formulate a well-conditioned system A geometric view an ill-conditioned system: [Draw two nearly perpendicular lines to show a well-conditioned system] [Draw two nearly parallel lines to show an ill-conditioned system] ...

2.2 Addition and Subtraction of Matrices and

... The m × n zero matrix, denoted 0m×n (or simply 0, if the dimensions are clear), is the m × n matrix whose elements are all zeros. In the case of the n × n zero matrix, we may write 0n . We now collect a few properties of the zero matrix. The first of these below indicates that the zero matrix plays a ...

... The m × n zero matrix, denoted 0m×n (or simply 0, if the dimensions are clear), is the m × n matrix whose elements are all zeros. In the case of the n × n zero matrix, we may write 0n . We now collect a few properties of the zero matrix. The first of these below indicates that the zero matrix plays a ...

Matrix Completion from Noisy Entries

... size |E| = 80 and |E| = 160 are shown. In both cases, we can see that the RMSE converges to the information theoretic lower bound described later in this section. The fit error decays exponentially with the number iterations and converges to the standard deviation of the noise which is 0.001. This i ...

... size |E| = 80 and |E| = 160 are shown. In both cases, we can see that the RMSE converges to the information theoretic lower bound described later in this section. The fit error decays exponentially with the number iterations and converges to the standard deviation of the noise which is 0.001. This i ...

Speicher

... We want to consider more complicated situations, built out of simple cases (like Gaussian or Wishart) by doing operations like ...

... We want to consider more complicated situations, built out of simple cases (like Gaussian or Wishart) by doing operations like ...

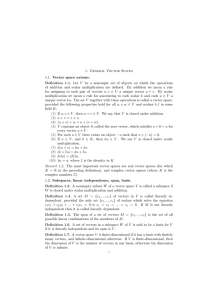

Systems of Linear Equations in Fields

... (2) If A is an (m × n) matrix and B is an (n × p) matrix, then (AB)ij = A(i) B (j) . Thus the (i, j)-entry of AB is the product of row i of A with column j of B. (3) If the number of columns of A is not the same as the number of rows of B, then A and B cannot be multiplied. ...

... (2) If A is an (m × n) matrix and B is an (n × p) matrix, then (AB)ij = A(i) B (j) . Thus the (i, j)-entry of AB is the product of row i of A with column j of B. (3) If the number of columns of A is not the same as the number of rows of B, then A and B cannot be multiplied. ...

cg-type algorithms to solve symmetric matrix equations

... was taken to be the zero matrix. The right hand side B was chosen such that the exact solution X is a matrix of order n × s whose ith column has all entries equal to one except the ith entry which is zero. The tests were stopped as soon as the stopping criterion k b(i) − Ax(i) k2 ...

... was taken to be the zero matrix. The right hand side B was chosen such that the exact solution X is a matrix of order n × s whose ith column has all entries equal to one except the ith entry which is zero. The tests were stopped as soon as the stopping criterion k b(i) − Ax(i) k2 ...

QUANTUM GROUPS AND HADAMARD MATRICES Introduction A

... Definition 1.2. A magic unitary is a square matrix u ∈ M n (A), all whose rows and columns are partitions of unity in A. The terminology comes from a vague similarity with magic squares, to be investigated later on. For the moment we are rather interested in the continuing the above classical/quantu ...

... Definition 1.2. A magic unitary is a square matrix u ∈ M n (A), all whose rows and columns are partitions of unity in A. The terminology comes from a vague similarity with magic squares, to be investigated later on. For the moment we are rather interested in the continuing the above classical/quantu ...

10 The Singular Value Decomposition

... first is in the orientation of the singular vectors. One can flip any right singular vector, provided that the corresponding left singular vector is flipped as well, and still obtain a valid SVD. Singular vectors must be flipped in pairs (a left vector and its corresponding right vector) because the ...

... first is in the orientation of the singular vectors. One can flip any right singular vector, provided that the corresponding left singular vector is flipped as well, and still obtain a valid SVD. Singular vectors must be flipped in pairs (a left vector and its corresponding right vector) because the ...

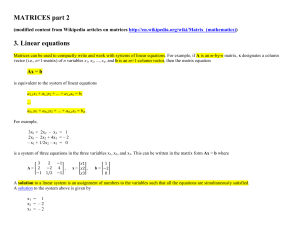

MATRICES part 2 3. Linear equations

... The vectors v1, v2, ..., vk are said to be linearly dependent if there exist a finite number of scalars a1, a2, ..., ak, not all zero, such that where zero denotes the zero vector. Otherwise, we said that vectors v1, v2, ..., vk are linearly independent. The Gaussian elimination algorithm can be app ...

... The vectors v1, v2, ..., vk are said to be linearly dependent if there exist a finite number of scalars a1, a2, ..., ak, not all zero, such that where zero denotes the zero vector. Otherwise, we said that vectors v1, v2, ..., vk are linearly independent. The Gaussian elimination algorithm can be app ...