Regression model approach to predict missing values in

... techniques. Data cleaning can be applied to remove noise and correct inconsistencies in the data. Data integration merges data from multiple sources into a coherent data store, such as a data warehouse or a data cube. Data transformations, such as normalization, may be applied. Data reduction can re ...
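The approach named in the heading above, predicting missing values with a regression model, can be sketched as follows. This is a minimal illustration and not the listed document's method. It assumes a single numeric predictor `x` that is always observed and a target `y` whose gaps are marked `None`. The helper names `fit_simple_ols` and `impute_by_regression` are hypothetical.

```python
from typing import List, Optional, Tuple


def fit_simple_ols(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Least-squares (intercept, slope) for the line y = a + b*x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def impute_by_regression(xs: List[float], ys: List[Optional[float]]) -> List[float]:
    """Hypothetical helper: fill None entries of ys with predictions from an
    OLS line fitted only on the rows where ys is observed."""
    complete = [(x, y) for x, y in zip(xs, ys) if y is not None]
    if len(complete) < 2:
        raise ValueError("need at least two complete rows to fit a line")
    intercept, slope = fit_simple_ols([x for x, _ in complete], [y for _, y in complete])
    # Observed values are kept; missing ones are replaced by the fitted value.
    return [y if y is not None else intercept + slope * x for x, y in zip(xs, ys)]


print(impute_by_regression([1, 2, 3, 4, 5], [2.0, 4.0, None, 8.0, 10.0]))
# [2.0, 4.0, 6.0, 8.0, 10.0]
```

Imputed values fall exactly on the fitted line. As a result, this simple form understates the variability of the filled-in column.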


the paper - Wharton Financial Institutions Center

... It is important to understand the realm of Japanese land prices. Appendix B contains the annual mean nominal rate of return for Japanese commercial land. Rates of return for Japanese commercial land are highly volatile over time. What happened to Japan’s land markets between 1982 and 2006? Figure 1 ...


The Ridge Regression Estimated Linear Probability Model: A Report

... the RR-estimated LPM. Each residual $u_i$ under the LPM has variance $x_i b(1 - x_i b)$. Hence, the LPM can be viewed as a linear model with observations having distinct variances, in Saccucci’s sense. Therefore, it is not unreasonable to conjecture the following existence property: there exists $k > 0$ such that MSE ( bR L ...
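The following is a sketch of a ridge-estimated LPM and of the per-observation residual variance $x_i b(1 - x_i b)$ stated above. It assumes the textbook ridge estimator $b_R = (X'X + kI)^{-1}X'y$ applied to unstandardized regressors. The report's own notation and any scaling of $X$ are not visible in the snippet. The function names and the toy data are hypothetical.

```python
import numpy as np


def ridge_lpm(X: np.ndarray, y: np.ndarray, k: float) -> np.ndarray:
    """Ridge estimate b_R = (X'X + kI)^{-1} X'y for a linear probability model.

    X is n x p (include a column of ones for an intercept); y holds 0/1 outcomes.
    This simple form also shrinks the intercept coefficient.
    """
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + k * np.eye(p), X.T @ y)


def lpm_residual_variances(X: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Variance x_i b (1 - x_i b) of each LPM residual u_i, evaluated at b."""
    fitted = X @ b
    return fitted * (1.0 - fitted)


# Hypothetical toy data: intercept column plus one binary regressor.
X = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
y = np.array([0.0, 1.0, 1.0, 1.0])

b_ols = ridge_lpm(X, y, k=0.0)    # k = 0 reduces to ordinary least squares
b_ridge = ridge_lpm(X, y, k=1.0)  # k > 0 shrinks the coefficient vector
print(b_ols, lpm_residual_variances(X, b_ols))
print(b_ridge, lpm_residual_variances(X, b_ridge))
```

The variances differ across observations, which is the heteroskedasticity the passage relies on. If a fitted value $x_i b$ falls outside $[0, 1]$, the expression turns negative, a known weakness of the LPM. The sketch does not test the conjectured MSE property.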


SPECIFYING ECONOMETRIC MODELS

... Berkson's transformation is $\Phi^{-1}(s_j/m_j)$ in the case of probit; $\log(s_j/(m_j - s_j))$ in the case of logit; $s_j/m_j$ in the linear case; and $\log(s_j/m_j)$ in the case of the exponential model. It is a fairly general proposition that the asymptotic approximation is improved by using the transformation F- ...
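Each of Berkson's transformations applies the inverse link $F^{-1}$ to the observed cell share $s_j/m_j$. In this sketch, $s_j$ is the number of successes out of $m_j$ trials in cell $j$, and the share must lie strictly between 0 and 1. The linear case is taken as $F(z) = z$. The exponential case is read as $F(z) = e^z$, which matches the $\log(s_j/m_j)$ transform. The helper name `berkson_transform` is hypothetical. `statistics.NormalDist` requires Python 3.8 or later.

```python
import math
from statistics import NormalDist


def berkson_transform(s: int, m: int, model: str) -> float:
    """Hypothetical helper: apply F^{-1} to the observed share s/m of one cell.

    Requires 0 < s < m so that every transform below is finite.
    """
    if not 0 < s < m:
        raise ValueError("need 0 < s < m")
    p = s / m
    if model == "probit":
        return NormalDist().inv_cdf(p)   # Phi^{-1}(s/m)
    if model == "logit":
        return math.log(s / (m - s))     # log(s / (m - s)) = log(p / (1 - p))
    if model == "linear":
        return p                         # F(z) = z, so F^{-1}(p) = p
    if model == "exponential":
        return math.log(p)               # F(z) = exp(z), so F^{-1}(p) = log(p)
    raise ValueError(f"unknown model: {model!r}")


for model in ("probit", "logit", "linear", "exponential"):
    print(model, berkson_transform(3, 10, model))
```

In Berkson's minimum chi-square approach, these transformed shares are then regressed on the cell's covariates, typically by weighted least squares. That second step is not shown.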


Chi-Square

... – Alternative Hypothesis: • There is an association between Gender and Purchase • The probability of purchasing items costing more than $100 differs between the sexes. ...
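The Gender and Purchase hypotheses can be checked with a Pearson chi-square test of independence on a 2x2 table. The sketch below uses made-up counts. It applies no continuity correction. With one degree of freedom, the p-value is $P(|Z| > \sqrt{\chi^2}) = \operatorname{erfc}(\sqrt{\chi^2/2})$. The function name `chi_square_2x2` is hypothetical.

```python
import math
from typing import List, Tuple


def chi_square_2x2(table: List[List[float]]) -> Tuple[float, float]:
    """Pearson chi-square test of independence for a 2x2 contingency table.

    Returns (statistic, p_value). The p-value uses the 1-degree-of-freedom
    identity P(chi2_1 > x) = erfc(sqrt(x / 2)), so it is valid only for 2x2.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under the null hypothesis of no association.
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


# Made-up counts: rows = gender, columns = (bought item > $100, did not).
counts = [[30, 20],
          [20, 30]]
print(chi_square_2x2(counts))
```

A small p-value, for example below 0.05, favours the alternative hypothesis that Gender and Purchase are associated. SciPy's `scipy.stats.chi2_contingency` applies Yates' continuity correction to 2x2 tables by default, so its statistic will differ from this uncorrected one.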


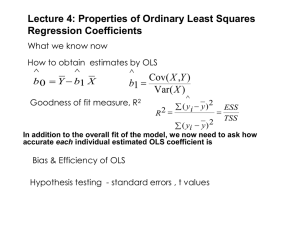

Coefficient of determination

In statistics, the coefficient of determination, denoted $R^2$ or $r^2$ and pronounced "R squared", is a number that indicates how well data fit a statistical model, sometimes simply a line or a curve. An $R^2$ of 1 indicates that the regression line fits the data perfectly, while an $R^2$ of 0 indicates that the line explains none of the variation in the data. The latter can happen because the data are utterly non-linear or because they are random.

It is a statistic used in the context of statistical models whose main purpose is either the prediction of future outcomes or the testing of hypotheses on the basis of other related information. It measures how well observed outcomes are replicated by the model, as the proportion of the total variation of outcomes explained by the model (pp. 187, 287).

There are several definitions of $R^2$ that are only sometimes equivalent. One class of such cases is simple linear regression, where $r^2$ is used instead of $R^2$. In this case, if an intercept is included, $r^2$ is simply the square of the sample correlation coefficient $r$ between the outcomes and their predicted values. If additional explanatory variables are included, $R^2$ is the square of the coefficient of multiple correlation. In both cases, the coefficient of determination ranges from 0 to 1.

Depending on the definition used, the computed $R^2$ can be negative in two important cases: when the predictions being compared with the outcomes were not derived from a model-fitting procedure using those data, and when linear regression is conducted without an intercept. Negative values of $R^2$ may also occur when non-linear functions are fitted to data. When negative values arise, the mean of the data fits the outcomes better than the fitted function values do, according to this particular criterion.
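The claims above can be checked numerically with one common definition, assumed here: $R^2 = 1 - SS_\text{res}/SS_\text{tot}$, where $SS_\text{tot}$ is measured around the mean of the outcomes. The sketch below shows three cases on made-up data. With an intercept, $R^2$ equals the squared correlation between outcomes and predictions. A fit through the origin can give a negative value. Predictions not fitted to the data can also give a negative value. The helper name `r_squared` is hypothetical.

```python
import numpy as np


def r_squared(y, y_hat) -> float:
    """R^2 = 1 - SS_res / SS_tot, with SS_tot measured around the mean of y."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([1.0, 3.0, 2.0, 5.0, 4.0])   # made-up data

# Simple linear regression WITH an intercept: R^2 equals corr(y, y_hat)^2.
slope, intercept = np.polyfit(x, y, 1)    # polyfit returns highest degree first
y_hat = intercept + slope * x
print(r_squared(y, y_hat), np.corrcoef(y, y_hat)[0, 1] ** 2)

# Regression through the origin (no intercept) on decreasing data:
# the fitted line is worse than the mean, so R^2 is negative.
y_dec = np.array([10.0, 9.0, 8.0, 7.0, 6.0])
b_origin = np.sum(x * y_dec) / np.sum(x * x)   # least-squares slope with no intercept
print(r_squared(y_dec, b_origin * x))

# Predictions not fitted to these data can also score below zero.
print(r_squared([1.0, 2.0, 3.0], [3.0, 2.0, 1.0]))
```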