A NoSQL data management infrastructure for bridge monitoring, Sean O’Connor

... This section discusses a sensor data management framework and the selection of data standards and data management tools. There exist many NoSQL database systems, each with its own strengths and disadvantages. Careful evaluation of the tools is necessary for successful development of a data manage ...

Some Thoughts on Data Warehouses

... As with many developments, there are a number of ‘inescapables’ (much like fees and taxes). Unavoidable realities are a recognition that the ideal model is an ideal, not a practicality or a reality. A realistic model is referred to as ‘descriptive’; the ideal model is classed as ‘normative’. ...

'How Do I Love Thee? Let Me Count the Ways.' SAS® Software as a Part of the Corporate Information Factory

... management, ad hoc querying, production analysis and reporting, OLAP, data mining, ad nauseam. This approach complicates the CIF system, increases costs, and reduces ROI. In this best-of-breed solution, SAS Software is often deployed only in an analytical role that clearly fails to take advantage o ...

unit iii database management systems

... Edgar Codd at IBM came up with a new kind of database, the RDBMS. Then, in 1980, came SQL (Structured Query Language). From 1980 to 1990 there were advances in DBMSs, e.g. DB2 and Oracle. ...

Data in a PaaS World: A Guide for New Applications

... application’s data. Rather than copying the on-premises world, the PaaS approach rethinks how we build applications and work with data for the cloud. PaaS data services (which are also sometimes referred to as Database as a Service) have a number of advantages over the IaaS approach of running a DB ...

A System for Integrated Management of Data, Accuracy, and Lineage

... sources into their own databases, both as raw values and to create new derived data. The accuracy and reliability of data obtained from these outside sources must be captured along with the data, and can also change over time. This application domain can exploit all of Trio’s features. Furthermore i ...

Invisible Loading: Access-Driven Data Transfer

... for data extraction). We provide a basic library of operators, such as filter, aggregate, ... etc, that behave differently depending on the data source. Several MapReduce programming environments, like PigLatin [14], provide similar libraries of operators. Such libraries generally facilitate the use ...

Building Real-Time Data Pipelines

... HTAP: Bringing OLTP and OLAP Together When working with transactions and analytics independently, many challenges have already been solved. For example, if you want to focus on just transactions, or just analytics, there are many existing database and data warehouse solutions: • If you want to load ...

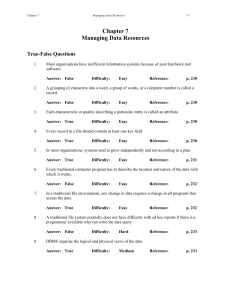

Chapter 7: Managing Data Resources

... A traditional file system normally does not have difficulty with ad hoc reports if there is a programmer available who can write the data query. Answer: False ...

Chapter 1 - azharunisel

... A transaction (production) database reflects the daily operations of an organization. As the name implies, a transaction database records transactions such as the sale of a product, the enrolment of a student, or the opening of a checking account. Such transactions are time-critical and must be rec ...

Data Warehouse Design and Management: Theory and Practice

... between them are recorded in tabular form. It is used both for transactional systems and DWs, but data are optimized differently because of different requirements that characterize the two types of systems. Transactional systems are engineered to manage daily operations, then the database is optimiz ...

®

... load and transform data ahead of ingest minimizes interference with query workloads. Further benefits are gained through managing database locking and logging and system resource usage with the software tools available with DB2 and IBM Optim™ software available with the IBM Smart Analytics System. ...

3 A Practitioner's Guide to Data Management and Data Integration

... a great deal of innovative thought has been put into making data retrieval faster and faster. For example, a great step forward was cost-based optimization, or planning a query based on minimizing the expense to execute it, invented in 1979 by Patricia Selinger [14]. Similarly, because relational te ...

Lightweight Symmetric Encryption Algorithm for Secure Database

... a database without any effort, and they use important data in a wrong way. Database encryption has the potential to secure data at rest by providing data encryption, especially for sensitive data, avoiding the risks such as misuse of the data [1]. In order to achieve a high level of security, the co ...

Word - 1913 KB - Department of the Environment

... catchments. The database also contains descriptive data to provide the user with contextual information to aid the interpretation of the CFEV assessment data. Until recently, only the output data which represented the results of the CFEV assessment was stored in the database (e.g. features within th ...

Database semantic integrity for a network data manager

... support system requirements have not been identified. However, the extremely systematic approach which is presented requires complete specification at database design time. Operational use would require investigation of the issues of sizing and flexibility. 2.3.2 McLeod McLeod's approach [MCLED 76, ...

Data Consistency Management

... exchanged between multiple systems, including non-ABAP systems. Goal: compare two sources to detect missing table entries or inconsistent field contents without the need to write additional code. ...

Lecture Notes Part 1 - 354KB

... Logical source contents (books, new cars). Source capabilities (can it answer SQL queries?). Source completeness (has all books). Physical properties of source and network. Statistics about the data (as in an RDBMS). Source reliability. Mirror sources? Update frequency. ...

dbTouch: Analytics at your Fingertips

... via touch input. For example, an attribute of a given table may be represented by a column shape. Users are able to apply gestures on this shape to get a feeling of the data stored in the column, i.e., to scan, to run aggregates on part of the data, or even to run complex queries. The fundamental c ...

www.cs.laurentian.ca

... consists of fact tables and dimension tables. Information stored inside the data warehouse: precalculated totals and counts, and information regarding source, date and time of origin for historical context ...

Why Migrate from MySQL to Cassandra? White Paper BY DATASTAX CORPORATION June 2012

... designed for, even its strongest supporters admit that it is not architected to tackle the new wave of big data applications being developed today. In fact, in the same way that the needs of late 20th century web companies helped give birth to MySQL and drive its success, modern businesses today tha ...

Course chapter 8: Data Warehousing

... The source systems maintain little historical data, and with a good data warehouse, the source systems can be relieved of much of the responsibility for representing the past. Each source system is often a natural stovepipe application1, where little investment has been made to sharing common data s ...

Replication Extracts from Books Online

... demands on network resources, and prevent troubleshooting later. Consider these areas when planning for replication: Whether replicated data needs to be updated, and by whom. Your data distribution needs regarding consistency, autonomy, and latency. The replication environment, including busin ...

issn: 2278-6244 extract transform load data with etl tools like

... We all want to load our data warehouse regularly so that it can serve its purpose of facilitating business analysis [1]. To do this, data from one or more operational systems needs to be extracted and copied into the warehouse. The process of extracting data from source systems and carrying it in ...

Sharded Parallel Mapreduce in Mongodb for Online Aggregation

... bars in a graphical user interface. The precision of the estimated result increases as more and more input tuples are handled. In this system, users can both observe the progress of their aggregation queries and control execution of these queries on the fly. It allows interactive exploration of large, c ...

Big data

Big data is a broad term for data sets so large or complex that traditional data processing applications are inadequate. Challenges include analysis, capture, data curation, search, sharing, storage, transfer, visualization, and information privacy. The term often refers simply to the use of predictive analytics or certain other advanced methods to extract value from data, and seldom to a particular size of data set. Accuracy in big data may lead to more confident decision making, and better decisions can mean greater operational efficiency, cost reduction and reduced risk.

Analysis of data sets can find new correlations, to "spot business trends, prevent diseases, combat crime and so on." Scientists, business executives, practitioners of media and advertising, and governments alike regularly meet difficulties with large data sets in areas including Internet search, finance and business informatics. Scientists encounter limitations in e-Science work, including meteorology, genomics, connectomics, complex physics simulations, and biological and environmental research.

Data sets grow in size in part because they are increasingly being gathered by cheap and numerous information-sensing mobile devices, aerial (remote sensing), software logs, cameras, microphones, radio-frequency identification (RFID) readers, and wireless sensor networks. The world's technological per-capita capacity to store information has roughly doubled every 40 months since the 1980s; as of 2012, every day 2.5 exabytes (2.5×10^18 bytes) of data were created. The challenge for large enterprises is determining who should own big data initiatives that straddle the entire organization.

Work with big data is necessarily uncommon; most analysis is of "PC size" data, on a desktop PC or notebook that can handle the available data set. Relational database management systems and desktop statistics and visualization packages often have difficulty handling big data. The work instead requires "massively parallel software running on tens, hundreds, or even thousands of servers". What is considered "big data" varies depending on the capabilities of the users and their tools, and expanding capabilities make big data a moving target. Thus, what is considered "big" one year becomes ordinary later. "For some organizations, facing hundreds of gigabytes of data for the first time may trigger a need to reconsider data management options. For others, it may take tens or hundreds of terabytes before data size becomes a significant consideration."