ARCH MODELS

... modeling centered on the conditional first moments, with any temporal dependencies in the higher order moments treated as a nuisance. The increased importance played by risk and uncertainty considerations in modern economic theory, however, has necessitated the development of new econometric time se ...

ICOM4015-lec18

... • Mathematically speaking, a map is a function from one set, the key set, to another set, the value set • Every key in a map has a unique value • A value may be associated with several keys • Classes that implement the Map interface ...

Impact of Dividend Policy on Market Capitalization of Firms Listed in

... Dividend policy is an important tool used by companies to determine the ratio of dividend payments to reserves for future investments. This study attempts to explore the impact of a firm's dividend policy on its market capitalization. For this purpose, 10 years of data, from 2005 to 2014, of com ...

Monetary policy, doubts and asset prices

... framework, the welfare-maximizing policy of the paternalistic policymaker is more accommodating and involves an increase in inflation following positive productivity shocks. Distorted beliefs enter the stochastic discount factor. This creates a wedge between average real marginal costs (or average o ...

Skip Lists: A Probabilistic Alternative to Balanced Trees

... not have access to the levels of nodes; otherwise, he could create situations with worst-case running times by deleting all nodes that were not level 1. The probabilities of poor running times for successive operations on the same data structure are NOT independent; two successive searches for the s ...

Introduction

... downgrades will be avoided and investors will be better protected. Portfolio managers will rely on a more valid model. Bank regulation is supported by credit-risk models at the level of the capital requirements. Securitization allowed them to avoid excessive capital provisions in the light of Basel I ...

Structures

... • Each pointer is 4 bytes • Allocates 400 bytes total • Pointers do not point to allocated space ...

Paper - Springer

... NP-complete. The search algorithm operates correctly, even if consistent produces false positives, because every tuple is checked before it is output. In other words, you can use a heuristic for satisfiability, which may always return ⊤. However, the quality of consistent has a severe effect on the ...

... NP-complete. The search algorithm operates correctly, even if consistent produces false positives, because every tuple is checked before it is output. In other words, you can use a heuristic for satisfiability, which may always return >. However, the quality of consistent has a severe effect on the ...

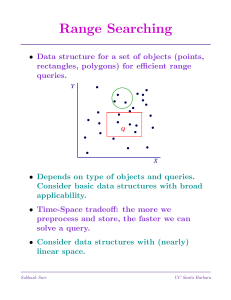

Recent developments in linear quadtree

... The quadtree memory management system, described in greater detail in phase II of this project3, is based on use of a structure termed the ‘linear quadtree’6. The leaf nodes making up the quadtree of an image are stored in a list. In the variation which we have implemented each leaf node consists of ...

CS 130 A: Data Structures and Algorithms

... NPL(X): length of the shortest path from X to a null pointer. Leftist heap: a heap-ordered binary tree in which NPL(leftchild(X)) >= NPL(rightchild(X)) for every node X. Therefore, NPL(X) = length of the right path from X; also, NPL(root) <= log(N+1). Proof: show by induction that NPL(root) = r implies t ...
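
The leftist-heap property in the snippet above can be illustrated with a minimal Python sketch. The names `Node`, `npl`, `merge`, `insert`, and `pop_min` are hypothetical and do not come from the course notes. The sketch assumes the convention that a null pointer has NPL 0 and a leaf has NPL 1, so that NPL(X) equals the number of nodes on the right path from X.

```python
class Node:
    """A leftist-heap node (hypothetical name, for illustration only)."""
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.npl = 1  # assumed convention: a leaf is one step from a null pointer


def npl(node):
    """Null path length; a null pointer counts as 0 under the assumed convention."""
    return 0 if node is None else node.npl


def merge(a, b):
    """Merge two leftist min-heaps by recursing down their right paths."""
    if a is None:
        return b
    if b is None:
        return a
    if b.key < a.key:
        a, b = b, a                      # keep the smaller root on top
    a.right = merge(a.right, b)
    if npl(a.left) < npl(a.right):       # restore NPL(left) >= NPL(right)
        a.left, a.right = a.right, a.left
    a.npl = npl(a.right) + 1
    return a


def insert(root, key):
    """Insert is a merge with a one-node heap."""
    return merge(root, Node(key))


def pop_min(root):
    """Return the minimum key and the heap that remains after removing it."""
    return root.key, merge(root.left, root.right)
```

Because every operation is a merge along right paths, and the right path is bounded by log(N+1), each operation in this sketch touches O(log N) nodes.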

... The Tableau method is a formal proof procedure existing for several logics. It is based in a refutation or indirect proof procedure; showing a formula X is valid by intending to assert it is not with a syntactical expression. The expression is broken down syntactically by splitting it in several cas ...

Design Patterns for Self-Balancing Trees

... tree and the extrinsic, order-dependent calculations needed to maintain its balance. The resulting morass of data manipulations hides the underlying concepts and hampers the students’ learning. We seek to alleviate the difficulties faced by students by offering an object-oriented (OO) formulation of ...

Heaps Simplified Bernhard Haeupler , Siddhartha Sen , and Robert E. Tarjan

... matching Fredman’s lower bound, but its analysis is complicated and yields larger constant factors; the other uses lg lg n + 1 bits per node and has small constant factors. In Section 4 we address the question of whether key decrease can be further simplified. We close in Section 5 with open problem ...

Vector

... getDataAtCurrent—returns the data part of the node that the iterator (current) is at moreToIterate—returns a boolean value that will be true if the iterator is not at the end of the list resetIteration—moves the iterator to the beginning of the list Can write methods to add and delete nodes at the i ...

Document

... Therefore, on average we need to do (0+n)/2 shifts to insert a random element into the array. Normally, when we talk about the complexity of operations (i.e., the number of steps needed to perform that operation), we don't care about the multiplied or added ...
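
The (0+n)/2 figure can be checked with a small Python sketch. It assumes an array of n elements and an insertion index chosen uniformly from the n+1 possible positions; the function names are hypothetical.

```python
def shifts_for_insert(n, index):
    """Elements that must move right to insert at `index` in an array of length n."""
    return n - index


def average_shifts(n):
    """Average shift count over all n + 1 equally likely insertion positions."""
    total = sum(shifts_for_insert(n, i) for i in range(n + 1))
    return total / (n + 1)
```

Inserting at the end costs 0 shifts and inserting at the front costs n, so the average over all positions is n/2, which grows linearly with n.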

Lattice model (finance)

In finance, a lattice model [1] is a technique applied to the valuation of derivatives where, because of path dependence in the payoff, 1) a discretized model is required and 2) Monte Carlo methods fail to account for optimal decisions to terminate the derivative by early exercise. For equity options, a typical example would be pricing an American option, where a decision as to option exercise is required at all times (any time) before and including maturity. A continuous model such as Black–Scholes, on the other hand, would only allow for the valuation of European options, where exercise is on the option's maturity date. For interest rate derivatives, lattices are additionally useful in that they address many of the issues encountered with continuous models, such as pull to par.
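
As an illustration of the early-exercise decision described above, here is a minimal Python sketch of one common lattice, a Cox-Ross-Rubinstein binomial tree. The text does not name a specific lattice, so the choice of tree, the function name `crr_price`, and all parameter names are assumptions made for the example. It also assumes a non-dividend-paying underlying and a constant interest rate and volatility.

```python
import math


def crr_price(spot, strike, rate, sigma, maturity, steps,
              american=True, is_put=True):
    """Price a vanilla option on a CRR binomial lattice (illustrative sketch)."""
    dt = maturity / steps
    up = math.exp(sigma * math.sqrt(dt))       # up-move factor
    down = 1.0 / up                            # down-move factor
    disc = math.exp(-rate * dt)                # one-step discount factor
    p = (math.exp(rate * dt) - down) / (up - down)  # risk-neutral up probability

    def payoff(s):
        return max(strike - s, 0.0) if is_put else max(s - strike, 0.0)

    # Option values at maturity; node j has seen j up-moves.
    values = [payoff(spot * up**j * down**(steps - j)) for j in range(steps + 1)]

    # Roll back through the lattice one time step at a time.
    for i in range(steps - 1, -1, -1):
        for j in range(i + 1):
            value = disc * (p * values[j + 1] + (1 - p) * values[j])
            if american:
                # Early exercise: compare continuation value with immediate payoff.
                value = max(value, payoff(spot * up**j * down**(i - j)))
            values[j] = value
    return values[0]
```

Calling the function with `american=False` skips the exercise check at each node and gives a European price, so the difference between the two calls is the early-exercise premium under these assumptions.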