Random Variables - Noblestatman.com

... A discrete random variable X has a countable number of possible values. The probability distribution of X lists the values and their probabilities. Value of X ...

LOYOLA COLLEGE (AUTONOMOUS), CHENNAI – 600 034

... first ‘n’ observations and S² be the unbiased estimator of the population variance based on the first ‘n’ observations. Find the constant ‘k’ so that the statistic k(M – Xₙ₊₁)/S follows a t-distribution. (b) Let X have a Poisson distribution with parameter . Assume that the unknown is a value ...

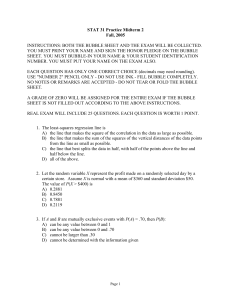

STAT 31 Practice Midterm 2 Fall, 2005 INSTRUCTIONS: BOTH THE

... changes during the first year of life. He plots these averages versus the age X (in months) and decides to fit a least-squares regression line to the data with X as the explanatory variable and Y as the response variable. He computes the following ...

Slides Set 12

... Randomized Algorithms • Instead of relying on a (perhaps incorrect) assumption that inputs exhibit some distribution, make your own input distribution by, say, permuting the input randomly or taking some other random action • On the same input, a randomized algorithm ...

Generating Random Factored Numbers, Easily

... tests before success. Bach's algorithm uses only an expected O(log N) tests. For either algorithm, primality tests can be implemented efficiently by a randomized algorithm [3], or as shown in the foll ... The key to understanding this algorithm is that each prime p < N is included in the sequence indepen ...

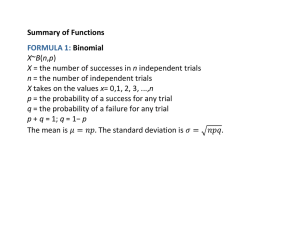

Formula Sheet

... model in constructing the Restricted model. Let T be the number of observations in the data set. Let k be the number of RHS variables plus one for intercept in the Unrestricted model. ...

Law of large numbers

In probability theory, the law of large numbers (LLN) is a theorem that describes the result of performing the same experiment a large number of times. According to the law, the average of the results obtained from a large number of trials should be close to the expected value, and will tend to become closer as more trials are performed. The LLN is important because it "guarantees" stable long-term results for the averages of some random events. For example, while a casino may lose money in a single spin of the roulette wheel, its earnings will tend towards a predictable percentage over a large number of spins. Any winning streak by a player will eventually be overcome by the parameters of the game. It is important to remember that the LLN only applies (as the name indicates) when a large number of observations is considered. There is no principle that a small number of observations will coincide with the expected value or that a streak of one value will immediately be "balanced" by the others (see the gambler's fallacy).
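
The roulette example can be illustrated with a small simulation. This is only a sketch under assumptions that the text does not state: a single-zero wheel with 37 pockets, and a player who bets one unit on an even-money outcome covering 18 pockets on every spin. Under those assumptions the player's expected return per spin is -1/37 (about -2.7%). The function name `average_player_return` and the seed are hypothetical choices made for illustration.

```python
import random

# Assumed setup (not from the text): single-zero wheel, 37 pockets numbered 0..36,
# and an even-money bet that wins on 18 of them.
POCKETS = 37
WINNING_POCKETS = 18
EXPECTED_RETURN = (WINNING_POCKETS - (POCKETS - WINNING_POCKETS)) / POCKETS  # -1/37


def average_player_return(num_spins, seed=0):
    """Average return per one-unit bet over num_spins simulated spins."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    total = 0
    for _ in range(num_spins):
        pocket = rng.randrange(POCKETS)  # uniform pocket in 0..36
        # Pockets 1..18 stand in for the winning outcome; everything else loses.
        total += 1 if 1 <= pocket <= WINNING_POCKETS else -1
    return total / num_spins


if __name__ == "__main__":
    print(f"expected return per spin: {EXPECTED_RETURN:.4f}")
    for n in (10, 1_000, 100_000):
        print(f"{n:>7} spins: average return {average_player_return(n):+.4f}")
```

With few spins the average can land far from -1/37, including on the player's side. With many spins it typically settles near that value, which is the behavior the law describes. Nothing in the simulation makes a losing streak more likely after a winning one; each spin is drawn independently.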