Ray Pavloski

... is argued that three properties of a perceptual gestalt might be employed in bridging the gap between these two domains: perceptual gestalts are hidden from objective observation, they are stable, and they are organized at multiple levels. Evidence from my recent research shows how simulations of mo ...

Application Of Evolutionary Neural Network Architecture

... Abstract (Cont): The advantage of employing ...

neural network

... The ‘neuron’ units are connected by a network of weighted links passing signals from one neuron to another. The outgoing connection splits into a number of branches that all transmit the same output signal. The outgoing branches are the incoming connections of other neurons in the network. Neural netw ...

Introduction to Financial Prediction using Artificial Intelligent Method

... The dendrites are extensions of a neuron which connect to other neurons to form a neural network, while synapses are a gateway which connects to dendrites that come from other neurons. A biological neuron may thus be connected to other neurons as well as accepting connections from other neurons, and ...

Neural Networks

... To build a neuron-based computer with as little as 0.1% of the performance of the human brain. Use this model to perform tasks that would be difficult to achieve using conventional computations. ...

Exercise Sheet 6 - Machine Learning

... output neuron. Download the training pattern file from the course website and open it. Try different learning algorithms with different parameter settings and observe the results with the Error Graph View and the Projection View. (a) Use the Backpropagation learning algorithm to train a MLP for the ...

Lecture notes - University of Sussex

... • So we can forget all sub-threshold activity and concentrate on spikes (action potentials), which are the signals sent to other neurons ...

neural-networks

... • A neural network is composed of a number of nodes, or units, connected by links. Each link has a numeric weight associated with it. • Weights are the primary means of long-term storage in neural networks, and learning usually takes place by updating the weights. • Each unit has a set of input link ...
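
The description above (units joined by weighted links, with the weights acting as long-term storage) can be sketched as a single unit that sums its weighted inputs. This is a minimal illustration only: the hard threshold at zero, the `bias` term, and the function name `unit_output` are assumptions made for the example and are not taken from the excerpt.

```python
def unit_output(inputs, weights, bias=0.0):
    """Return 1 if the weighted sum of the inputs (plus bias) is non-negative, else 0.

    The step activation and the bias are illustrative assumptions.
    """
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= 0 else 0


# Hypothetical weights chosen by hand so the unit behaves like a logical AND.
and_weights = [1.0, 1.0]
and_bias = -1.5
for a in (0, 1):
    for b in (0, 1):
        print(a, b, unit_output([a, b], and_weights, and_bias))
```

Changing `and_weights` or `and_bias` changes what the unit computes, which is the sense in which learning "takes place by updating the weights".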

Application of ART neural networks in Wireless sensor networks

... with ART neural networks. There are two basic techniques: fast learning, in which new values of W are assigned at discrete moments in time and are determined by algebraic equations; and slow learning, in which the values of W at a given point in time are determined by the values of continuous functions at that point and de ...

Slide 1

... Each neuron receives inputs from many other neurons. Cortical neurons use spikes to communicate. Neurons spike once they “aggregate enough stimuli” through input spikes. The effect of each input spike on the neuron is controlled by a synaptic weight. Weights can be positive or negative. Synapt ...
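
One way to picture "aggregating enough stimuli" is a simple integrate-and-fire sketch: each input spike adds its synaptic weight to an internal potential, and the neuron emits a spike when the potential reaches a threshold. The threshold value, the reset to zero after a spike, and the absence of any leak are assumptions made for this example, as is the name `run_neuron`; the excerpt does not specify them.

```python
def run_neuron(spike_trains, weights, threshold=1.0):
    """Simulate one neuron over discrete time steps.

    spike_trains: one list of 0/1 spikes per input neuron, all the same length.
    weights: one synaptic weight per input (may be positive or negative).
    Returns the neuron's output spike train as a list of 0/1 values.
    """
    potential = 0.0
    output = []
    for step_inputs in zip(*spike_trains):
        # Each incoming spike contributes its synaptic weight.
        potential += sum(w * s for w, s in zip(weights, step_inputs))
        if potential >= threshold:
            output.append(1)
            potential = 0.0  # assumed reset after firing
        else:
            output.append(0)
    return output


excitatory = [1, 1, 0, 1]
inhibitory = [0, 1, 0, 0]
print(run_neuron([excitatory, inhibitory], [0.5, -0.25]))
```

In this run the inhibitory spike at the second step delays the output spike, which shows how a negative weight counteracts positive input.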

Learning about Learning - by Directly Driving Networks of Neurons

... New behaviors require new patterns of neural activity among the population of neurons that control behavior. How can the brain find a pattern of activity appropriate for the desired behavior? Why does that learning process take time? To tackle questions like these, we reverse the normal order of ope ...

Neural networks.

... is near its extreme values (minimum or maximum) and larger corrections when the activation is in its middle range. Each correction has the immediate effect of making the error signal smaller if a similar input is applied to the unit. In general, supervised learning rules implement optimization algor ...
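
The behaviour described above (small corrections near the extremes of the activation, larger ones in the middle range) matches what happens when a gradient-based rule is applied to a unit with a logistic activation, since the slope `a * (1 - a)` peaks at `a = 0.5`. The sketch below assumes a logistic unit and a squared-error objective; the excerpt does not name either, and `delta_step` and the learning rate `lr` are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def delta_step(weights, inputs, target, lr=0.5):
    """Apply one gradient-descent correction to a single logistic unit.

    Returns the updated weights and the activation before the update.
    """
    a = sigmoid(sum(w * x for w, x in zip(weights, inputs)))
    # Error times the slope of the logistic function at the current activation.
    scale = (target - a) * a * (1.0 - a)
    new_weights = [w + lr * scale * x for w, x in zip(weights, inputs)]
    return new_weights, a


weights = [0.0, 0.0]
inputs = [1.0, 1.0]
weights, before = delta_step(weights, inputs, target=1.0)
_, after = delta_step(weights, inputs, target=1.0)
print(abs(1.0 - before), abs(1.0 - after))  # the second error is smaller
```

Presenting the same input again after one correction gives a smaller error, which is the "immediate effect" the excerpt refers to.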

Neural Networks for Data Mining

... random elements. For instance, in the beginning the network is carefully initialized with random numbers. When this is repeated, the same input set may yield very different networks. Sometimes they differ in performance, one showing good behavior, while others behave badly. Note that it is perfectly ...
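
The effect of random initialization can be made visible with a small sketch: two runs with different seeds start from different weights, while reusing a seed reproduces the same starting point. The function name `init_weights`, the uniform range of ±0.5, and the use of a seed are assumptions for illustration and do not come from the excerpt.

```python
import random


def init_weights(n_inputs, n_units, seed=None):
    """Return an n_units x n_inputs weight matrix drawn uniformly from [-0.5, 0.5]."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
            for _ in range(n_units)]


run_a = init_weights(3, 2, seed=1)
run_b = init_weights(3, 2, seed=2)
run_a_again = init_weights(3, 2, seed=1)
print(run_a == run_b)        # different seeds give different starting weights
print(run_a == run_a_again)  # the same seed reproduces the starting weights
```

Recording the seed alongside each trained network is one way to make a particularly good or bad run repeatable.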

LECTURE FIVE

... During operation, units can be updated either synchronously or asynchronously. With synchronous updating, all units update their activation simultaneously; with asynchronous updating, each unit has a (usually fixed) probability of updating its activation at a time t, and usually only one unit will ...
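
The difference between the two update schemes can be sketched on a tiny network of two units that inhibit each other. The ±1 states, the sign-style threshold, and the reading of "asynchronous" as "each unit updates in place with probability `p`" are assumptions made for this example; the names `sync_step` and `async_step` are hypothetical.

```python
import random


def net_input(state, weights, i):
    return sum(weights[i][j] * state[j] for j in range(len(state)))


def sync_step(state, weights):
    """All units compute their new activation from the same old state."""
    return [1 if net_input(state, weights, i) >= 0 else -1
            for i in range(len(state))]


def async_step(state, weights, p, rng):
    """Each unit updates with probability p, seeing earlier updates immediately."""
    state = list(state)
    for i in range(len(state)):
        if rng.random() < p:
            state[i] = 1 if net_input(state, weights, i) >= 0 else -1
    return state


weights = [[0, -1], [-1, 0]]  # two mutually inhibiting units
start = [1, 1]
print(sync_step(start, weights))                          # both flip together
print(async_step(start, weights, 1.0, random.Random(0)))  # units settle one at a time
```

With synchronous updating this network keeps flipping between `[1, 1]` and `[-1, -1]`, whereas the in-place update reaches a state that no longer changes, so the choice of scheme can affect whether the network settles.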

Artificial Intelligence CSC 361

... 1960s: Widrow and Hoff explored Perceptron networks (which they called “Adalines”) and the delta rule. ...

sheets DA 7

... recurrent activity. This mechanism may explain the encoding of both stimulus in retinal coordinates (s) and gaze (g) encountered before in parietal cortical neurons. ...

Document

... recurrent activity. This mechanism may explain the encoding of both stimulus in retinal coordinates (s) and gaze (g) encountered before in parietal cortical neurons. ...

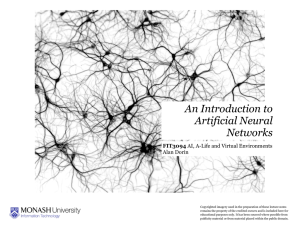

(Early Period) - Connectionism

... Connectionism is a movement in cognitive science that seeks to explain intellectual abilities using artificial neural networks. Neural networks are simplified models of the brain composed of large numbers of units (the analogs of neurons) together with weights that measure the strength of connection ...
