KERNEL PRINCIPAL - Open Electronic Archive of Kharkov

... networks (ANN), which tune their parameters without a teacher on the basis of the self-learning paradigm [3], are widely used to solve various problems in Data Mining, Exploratory Data Analysis, etc. Among these tasks, the most frequently encountered in Text Mining, Web Mining, Medical Data Mining, ...

Artificial Neural Networks

... Only requires inputs. Over time, an ANN learns to organize and cluster data by itself. • Reinforcement Learning: from the given input, an ANN produces some output and is rewarded or punished based on the output it created. ...
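One way an ANN can "organize and cluster data by itself" is winner-take-all competitive learning. The sketch below is an illustration of that general idea, not the method of the linked document: the function names, learning rate, epoch count and initialisation from random data points are all assumptions. Each unit holds a weight vector; for every input, only the closest unit (the winner) moves a fraction `lr` toward it, so units drift to cluster centres without any target values.

```python
import random

def sq_dist(a, b):
    # Squared Euclidean distance between two equal-length vectors.
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def competitive_learning(data, n_units, lr=0.1, epochs=50, seed=0):
    """Hypothetical winner-take-all trainer: no targets, only inputs."""
    rng = random.Random(seed)
    # Assumption: prototypes start at randomly chosen data points.
    weights = [list(p) for p in rng.sample(data, n_units)]
    for _ in range(epochs):
        order = list(data)
        rng.shuffle(order)
        for x in order:
            # The winner is the unit whose weight vector is closest to x.
            dists = [sq_dist(x, w) for w in weights]
            k = dists.index(min(dists))
            # Only the winner is updated, moving a fraction lr toward x.
            weights[k] = [wi + lr * (xi - wi) for xi, wi in zip(x, weights[k])]
    return weights

def assign(x, weights):
    """Index of the unit (cluster) that wins input x."""
    dists = [sq_dist(x, w) for w in weights]
    return dists.index(min(dists))

# Two well-separated groups of 2-D points (made-up illustration data).
data = [(0.0, 0.1), (0.1, 0.0), (0.2, 0.1), (5.0, 5.1), (5.1, 4.9), (4.9, 5.0)]
prototypes = competitive_learning(data, n_units=2)
print([assign(p, prototypes) for p in data])
```

A known weakness of this plain version is "dead" units that never win; variants such as self-organizing maps also update the winner's neighbours to avoid it.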

Cai, D.; Tao, L.; Rangan, A.; McLaughlin, D. Kinetic Theory for Neuronal Network Dynamics. Comm. Math. Sci. 4 (2006), no. 1, 97-12.

... conductance-based integrate-and-fire (I&F) neurons, a full kinetic description is derived without introducing new parameters. After a brief description of the dynamics of conductance-based I&F neural networks, for the dynamics of a single I&F neuron with an infinitely fast conductance driven b ...
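The kinetic theory itself describes population densities; the sketch below only simulates one conductance-based I&F neuron with forward Euler, to show what the single-neuron dynamics look like. It assumes normalised units (leak reversal and reset at 0, threshold at 1, excitatory reversal at 14/3), a leak conductance of 50 s^-1 and a time step of 0.1 ms; all of these values and the function name are illustrative choices, not taken from the paper. The conductance is passed in as a function of time so that constant or time-varying drive can be tried.

```python
def simulate_cond_if(g_exc, t_max=1.0, dt=1e-4, g_leak=50.0,
                     v_reset=0.0, v_thresh=1.0, e_exc=14.0 / 3.0):
    """Forward-Euler simulation of a single conductance-based I&F neuron.

    dV/dt = -g_leak * (V - v_reset) - g_exc(t) * (V - e_exc)

    g_exc: function of time returning the excitatory conductance (1/s).
    Assumption: the leak reversal potential equals v_reset.
    When V reaches v_thresh, the spike time is recorded and V is reset.
    Returns the list of spike times (s).
    """
    v = v_reset
    spike_times = []
    for step in range(int(round(t_max / dt))):
        t = step * dt
        dv_dt = -g_leak * (v - v_reset) - g_exc(t) * (v - e_exc)
        v += dt * dv_dt
        if v >= v_thresh:
            spike_times.append(t + dt)
            v = v_reset
    return spike_times

# Constant drive: V relaxes toward g*e_exc / (g_leak + g).
weak = simulate_cond_if(lambda t: 5.0)     # settles near 0.42, below threshold
strong = simulate_cond_if(lambda t: 50.0)  # would settle near 2.33, so it fires repeatedly
print(len(weak), len(strong))
```

With constant conductance the neuron fires only if the steady-state voltage g*e_exc / (g_leak + g) exceeds the threshold, which is a quick sanity check on the simulation.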

ppt - UTK-EECS

... When a neurotransmitter binds to a receptor on the postsynaptic side of the synapse, it changes the postsynaptic cell's excitability: it makes the postsynaptic cell either more or less likely to fire an action potential. If the number of excitatory postsynaptic events is large enough ...
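The threshold idea in this snippet can be made concrete with a deliberately crude sketch: every excitatory event depolarises the cell by a fixed amount, every inhibitory event hyperpolarises it, and the cell fires if the summed potential reaches threshold. The amplitudes (0.5 mV), resting potential (-70 mV) and threshold (-55 mV) are assumed textbook-scale magnitudes used only for illustration, and the model ignores the temporal decay and spatial location of postsynaptic potentials.

```python
def fires(n_excitatory, n_inhibitory, epsp_mv=0.5, ipsp_mv=0.5,
          resting_mv=-70.0, threshold_mv=-55.0):
    """Hypothetical instantaneous, linear summation of postsynaptic potentials.

    Each excitatory event adds epsp_mv, each inhibitory event subtracts
    ipsp_mv; the cell fires if the total reaches threshold_mv.
    """
    v = resting_mv + n_excitatory * epsp_mv - n_inhibitory * ipsp_mv
    return v >= threshold_mv

print(fires(30, 0))   # 30 EPSPs of 0.5 mV reach -55 mV
print(fires(40, 11))  # extra inhibition keeps the cell below threshold
```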

Artificial Neural Networks

... The hidden layer is trained using statistical clustering techniques. Good at pattern recognition ...
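This snippet appears to describe radial basis function (RBF) networks, whose hidden-unit centres are often placed by clustering the training inputs. Assuming that reading, the sketch below uses Lloyd's k-means as one such clustering technique and then sets a shared Gaussian width with the heuristic sigma = d_max / sqrt(2K), where d_max is the largest distance between centres and K the number of centres. Both the choice of k-means and the width rule are assumptions, not details recovered from the linked slides.

```python
import math
import random

def kmeans(points, k, iters=100, seed=0):
    """Lloyd's k-means: assign each point to its nearest centre,
    then move each centre to the mean of its assigned points."""
    rng = random.Random(seed)
    centres = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            j = min(range(k), key=lambda c: math.dist(p, centres[c]))
            clusters[j].append(p)
        new_centres = []
        for j, members in enumerate(clusters):
            if members:
                new_centres.append([sum(coord) / len(members) for coord in zip(*members)])
            else:
                new_centres.append(centres[j])  # leave an empty cluster's centre in place
        if new_centres == centres:  # assignments no longer change
            break
        centres = new_centres
    return centres

def rbf_width(centres):
    """Heuristic shared width: sigma = d_max / sqrt(2K)."""
    d_max = max(math.dist(a, b) for a in centres for b in centres)
    return d_max / math.sqrt(2 * len(centres))

pts = [(0.0, 0.1), (0.1, 0.0), (0.2, 0.1), (5.0, 5.1), (5.1, 4.9), (4.9, 5.0)]
centres = kmeans(pts, 2)
print(centres, rbf_width(centres))
```

Only the hidden layer is fitted this way; the output weights are then typically obtained by a separate supervised step such as least squares.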

Artificial Neural Networks - Introduction -

... nervous system to perform these behaviours. An appropriate model or simulation of the nervous system should be able to produce similar responses and behaviours in artificial systems. The nervous system is built from relatively simple units, the neurons, so copying their behaviour and functionality should ...

Neural networks

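... The universal approximation theorem states that a feed-forward network with a single hidden layer containing a finite number of neurons can approximate any continuous function on a compact subset of R^n to arbitrary accuracy, under mild assumptions on the activation function ...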
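Stated a little more precisely in one standard form: for a continuous sigmoidal activation φ (more generally, any continuous non-polynomial activation), every continuous f on a compact set K ⊂ R^n and every ε > 0, there exist N and parameters v_i ∈ R, w_i ∈ R^n, b_i ∈ R such that the single-hidden-layer network F satisfies

```latex
F(\mathbf{x}) = \sum_{i=1}^{N} v_i \,\varphi\!\left(\mathbf{w}_i^{\top}\mathbf{x} + b_i\right),
\qquad
\sup_{\mathbf{x} \in K} \bigl| F(\mathbf{x}) - f(\mathbf{x}) \bigr| < \varepsilon .
```

The theorem guarantees that such parameters exist; it says nothing about how large N must be or whether a training algorithm will find them.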

Slide 1

... • The output layer performs a simple weighted sum (i.e. w∙x). – If the RBFN is used for regression, this output is fine. – However, if pattern classification is required, a hard limiter (giving 0/1 outputs) or a sigmoid (giving values between 0 and 1) can be placed on the output neurons ...
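The output stage described here can be sketched as follows. Assumptions: Gaussian hidden units of the form exp(-||x - c||^2 / (2 sigma^2)) with one shared width, a single output neuron, and an optional bias term; the function names are hypothetical. The raw weighted sum is the regression output, and the hard limiter or sigmoid is applied on top only for classification.

```python
import math

def rbf_forward(x, centres, sigma, weights, bias=0.0):
    """Single-output RBF network: Gaussian hidden units feeding a
    linear output neuron, y = w . phi(x) + bias (bias is optional)."""
    phi = [math.exp(-math.dist(x, c) ** 2 / (2 * sigma ** 2)) for c in centres]
    return sum(w * p for w, p in zip(weights, phi)) + bias

def hard_limiter(y, threshold=0.0):
    """0/1 class label from the linear output."""
    return 1 if y >= threshold else 0

def sigmoid(y):
    """Squashes the linear output into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-y))

centres = [(0.0, 0.0), (1.0, 1.0)]  # illustrative centres
weights = [1.0, -1.0]               # illustrative output weights
y = rbf_forward((0.0, 0.0), centres, sigma=0.5, weights=weights)
print(y, hard_limiter(y), sigmoid(y))  # regression value, class, soft score
```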

Introduction to knowledge-based systems

... Various types of neurons; various network architectures; various learning algorithms; various applications ...

Document

... – The first layer consists of the inputs – The last layer is the output layer – Every neuron that is in neither the input layer nor the output layer is in some hidden layer ...
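The layered structure above can be illustrated with a minimal forward pass: the input vector plays the role of the first layer, each subsequent layer computes weighted sums of the previous layer's outputs, and everything between input and output is hidden. The representation of a layer as a pair (W, b), the sigmoid in the hidden layers and the linear output layer are assumptions made for this sketch.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, layers):
    """Propagate input x through layers given as (W, b) pairs.

    W[j] is the weight vector of neuron j in that layer, b[j] its bias.
    Hidden layers use a sigmoid; the last (output) layer is left linear.
    """
    activation = list(x)  # the first "layer" is just the inputs
    for idx, (W, b) in enumerate(layers):
        z = [sum(wi * ai for wi, ai in zip(w_row, activation)) + bj
             for w_row, bj in zip(W, b)]
        is_output = idx == len(layers) - 1
        activation = z if is_output else [sigmoid(v) for v in z]
    return activation

# 2 inputs -> 2 hidden neurons -> 1 output neuron (illustrative weights).
layers = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, -1.0]),  # hidden layer
    ([[1.0, 1.0]], [0.0]),                     # output layer
]
print(forward([3.0, 4.0], layers))
```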

Part 7.2 Neural Networks

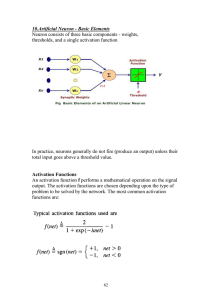

... only present it with the input but also with a value that we require the network to produce. For example, if we present the network with [1,1] for the AND function, the target value will be 1. Output, O: the output value from the neuron; Ij: inputs being presented to the neuron; Wj: weight from inpu ...
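Because the snippet is cut off before the learning rule, the sketch below uses the standard perceptron rule as an assumption: O is a hard-limited weighted sum of the inputs Ij with weights Wj, and after each example Wj is changed by lr * (T - O) * Ij, where T is the target. The learning rate, the bias term and the function names are illustrative. AND is linearly separable, so the perceptron convergence theorem guarantees that training stops after finitely many updates.

```python
def step(total):
    """Hard-limiting activation: 1 if the weighted sum is positive, else 0."""
    return 1 if total > 0 else 0

def predict(inputs, weights, bias):
    # O = step(sum_j Wj * Ij + bias)
    return step(sum(w * i for w, i in zip(weights, inputs)) + bias)

def train_perceptron(samples, lr=0.1, max_epochs=100):
    """Perceptron rule: Wj <- Wj + lr * (T - O) * Ij.
    The bias is updated like a weight on a constant input of 1."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(inputs, weights, bias)
            if error != 0:
                mistakes += 1
                weights = [w + lr * error * i for w, i in zip(weights, inputs)]
                bias += lr * error
        if mistakes == 0:  # a full pass with no updates: every example is correct
            break
    return weights, bias

AND = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_perceptron(AND)
for inputs, target in AND:
    print(inputs, "target", target, "output", predict(inputs, weights, bias))
```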

Computer lab 9: Neural networks

... Assignment 2: Comparison of ORBF networks with multilayer perceptrons and optimization methods 1. Import the data set from wave.xls into SAS (call it wave) and plot the response variable (Y) versus the explanatory variable (X) using the GPLOT procedure. 2. How many peaks can you see in that function? L ...