Computational Intelligence

... episodic memories. On this background, a few kinds of associative neural networks, their advanced associative features and concluding abilities will be presented. It will be shown how various data relations can be implemented and represented in these associative neural graph structures. This will al ...

hierarchical intelligent simulation

... offers symbolic methods to determine functional behavior directly; their instantiation results in the corresponding numerical methods. For simulation, the hierarchical principle offers the advantage of adaptable modeling: Models are described by the user, following a generally accepted paradigm (e.g., ...

Person Movement Prediction Using Neural Networks

... The challenge is to transfer these algorithms to work with context information. Mozer [6] proposed an Adaptive Control of Home Environments (ACHE). ACHE monitors the environment, observes the actions taken by the inhabitants, and attempts to predict their next actions, by learning the anticipation n ...

Bayesian Computation in Recurrent Neural Circuits

... suggesting that these neurons are involved in accumulating evidence (interpreted as log likelihoods) over time. Similar activity has also been reported in the primate area LIP (Shadlen & Newsome, 2001). A mathematical model based on log-likelihood ratios was found to be consistent with the observed ...

5. hierarchical multimodal language modeling

... Figure 6. System state of the model of cortical language areas after simulation step 13. The ‘_blank’ representing the word border between words “bot” and “put” is recognized in area A2 which activates the ‘OFF’ representation in af-A4 which deactivates area A4 for one simulation step. Immediately a ...

Estimation and Improve Routing Protocol Mobile Ad

... the brain, process information. The key element of this paradigm is the novel structure of the information processing system. An ANN is configured for a specific application, such as pattern recognition or data classification, through a learning process. Learning in biological systems involves adjus ...

Encoding and Retrieval of Episodic Memories: Role of Hippocampus

... learning of a single word in a list learning experiment. Note that these are not semantic representations of the word which would be activated in a wide range of different contexts—rather, they code the activation of the semantic representation in a specific episode. As described below, in each regi ...

Spiking Neural Networks: Principles and Challenges

... to the computation have to be expressed in terms of the spikes that spiking neurons communicate with. The challenge is that the nature of the neural code (or neural codes) is an unresolved topic of research in neuroscience. However, based on what is known from biology, a number of neural information ...

Advanced Applications of Neural Networks and Artificial Intelligence

... computing technology in computer science fields. This paper reviews the field of artificial intelligence, focusing on recent applications which use Artificial Neural Networks (ANNs) and Artificial Intelligence (AI). It also considers the integration of neural networks with other computing metho ...

Machine Learning Methods for Decision Support

... and unsupervised ML methods? Supervised learning: - Give the learning algorithm several instances of input-output pairs; the algorithm learns to predict the correct output for a given input, including inputs it has not seen before (“generalization”). - In our origi ...

Presentation file I - Discovery Systems Laboratory

... unsupervised ML methods? Supervised learning: - Give the learning algorithm several instances of input-output pairs; the algorithm learns to predict the correct output for a given input, including inputs it has not seen before (“generalization”). - In our original ...

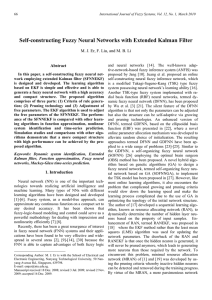

Self-constructing Fuzzy Neural Networks with Extended Kalman Filter

... generate a fuzzy neural network with a high accuracy and compact structure. The proposed algorithm comprises three parts: (1) Criteria of rule genera ... Another TSK-type fuzzy system implemented with radial basis function (RBF) neural networks, termed dynamic fuzzy neural network (DFNN), has been p ...

Semantic Networks: Visualizations of Knowledge

... and internal representations adds to this picture. Brachman’s lowest level has the machine concepts of "atoms and pointers", betraying his preference for implementations built using Lisp. Of course, any symbol system can be implemented using any data structure with adequate features. Traditional imp ...

Musical Composer Identification through Probabilistic and

... that, roughly speaking, determines the range of each class and consequently its performance [16,19]. Probabilistic Neural Networks have recently been utilized in musical applications [3]. Feedforward Neural Networks. Although many different models of Artificial Neural Networks have been proposed, th ...

Decoding a Temporal Population Code

... continuous processing with no stimulus-triggered resets. We find a large range of classification performance, showing that the no-reset strategy is significantly outperformed by the different types of stimulus-triggered initializations. Building on these results, we discuss possible implementations ...

Artificial Intelligence and Decision Systems Course notes

... field of psychology that is dedicated to understanding how the human mind works. Herbert Simon and Allen Newell have contributed enormously to the field, precisely through this approach of building programs based on our understanding of the human mind. There is, however, one hidden assumption: that the mecha ...

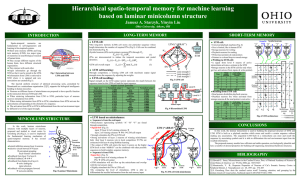

From/To LTM - Ohio University

... building in human neocortex. Neurons on different layers of minicolumns are proposed to have specific functions in the interaction between STM and LTM. When retrieving information from LTM to STM, a particular layer of neurons receives stimulation from LTM. When storing information from STM to LT ...

applying artificial neural networks in slope stability related

... interpretation is difficult to explain, and after that, they can make predictions on a set of new input data. This property gives ANNs an advantage over empirical and statistical methods, which require prior knowledge of the data distribution and also the nature of the relationship ...

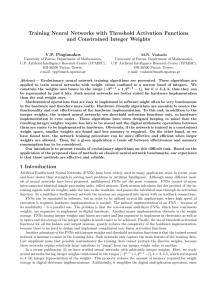

Training Neural Networks with Threshold Activation Functions and Constrained Integer Weights

... Artificial Feedforward Neural Networks (FNNs) have been widely used in many application areas in recent years and have shown their strength in solving hard problems in Artificial Intelligence. Although many different models of neural networks have been proposed, multilayered FNNs are the most common ...

BCM Theory

... (time constant ~20 sec; see [8]) the fast scale modulatory component does not play an important role in the post-lesion DCN activity. Therefore, the activity of DCN neurons is controlled by the two components of slow and medium scale modulatory mechanisms (brown and green curves). Note that the DCN ...

Transfer Learning through Indirect Encoding - Eplex

... comes naturally from the domain. That way, CPPNs can compute the connectivity of the substrate as a function of that geometry. The x and y coordinates of each input unit are in the range [−1, 1]. Furthermore, the output layer of the substrate matches the dimensions of the BEV so that the CPPN can ex ...

Fading memory and kernel properties of generic cortical microcircuit

... very short) time windows, regardless of the complexity of the input. In this case the domain D and range R consist of time-varying functions u(·), y(·) (with analog inputs and outputs), rather than of static character strings. If one excites a sufficiently complex recurrent circuit (or other medium) w ...

Introduction to Sequence Analysis for Human Behavior Understanding

... point of the sequence. The combination of factorization and independence assumptions has thus made it possible to reduce the number of parameters and model long sequences with a compact and tractable representation. Probabilistic graphical models offer a theoretic framework where factorization and i ...

Steel Production and Its Uses

... companies to discover hidden knowledge which is present in their databases. It employs AI techniques such as neural networks, fuzzy logic, and genetic algorithms on large quantities of data to find and understand hidden trends or relationships within this information. ...

mayberry.sardsrn

... to step through the parse (such as that in figure 1), generating a compressed distributed representation of the top element of the stack at each step (formed by a RAAM network: section 4.1). The network reads the sequence of words one word at a time, and each time either shifts the word onto the st ...

Hierarchical temporal memory

Hierarchical temporal memory (HTM) is an online machine learning model developed by Jeff Hawkins and Dileep George of Numenta, Inc. that models some of the structural and algorithmic properties of the neocortex. HTM is a biomimetic model based on the memory-prediction theory of brain function described by Jeff Hawkins in his book On Intelligence. HTM is a method for discovering and inferring the high-level causes of observed input patterns and sequences, thus building an increasingly complex model of the world. Jeff Hawkins states that HTM does not present any new idea or theory, but combines existing ideas to mimic the neocortex with a simple design that provides a large range of capabilities. HTM combines and extends approaches used in sparse distributed memory, Bayesian networks, and spatial and temporal clustering algorithms, while using a tree-shaped hierarchy of nodes that is common in neural networks.