Shannon entropy: a rigorous mathematical notion at the

... of many-body systems and how it accounts for their macroscopic behavior (Honerkamp 1998; Castiglione et al. 2008). Establishing the relationships between statistical entropy, statistical mechanics and thermodynamic entropy was initiated by Jaynes (Jaynes 1957; Jaynes 1982b). In an initially totally ...

On Generalized Measures of Information with

... Special thanks are due to Vinita who corrected many of my drafts of papers, this thesis, all the way from DC and WI. Thanks to Vinita, Moski and Madhulatha for their care. I am forever indebted to my sister Kalyani for her prayers. My special thanks are due to my sister Sasi and her husband and to m ...

Mathematical Structures in Computer Science Shannon entropy: a

... of the relationships between the different notions of entropy as they appear today, rather than from a historical perspective, and to highlight the connections between probability, information theory, dynamical systems theory and statistical physics. I will base my presentation on mathematical result ...

Entropy (information theory)

... to the entropy rate in the case of a stationary process.) Other quantities of information are also used to compare or relate different sources of information. Main article: Diversity index. Entropy is one of several ways to measure diversity. Specifically, Shannon entropy is the logarithm of ¹D, the I ...

The Five Greatest Applications of Markov Chains.

... model and an extended set of data might be more successful at identifying an author solely by mathematical analysis of his writings [22]. 3. C. E. Shannon’s Application to Information Theory. When Claude E. Shannon introduced “A Mathematical Theory of Communication” [30] in 1948, his intention was t ...

Full text in PDF form

... the exponential of Rényi’s entropy. Since S(·, α) is in fact a continuum of measures of Ess, it is necessary to find out which of them would be the most appropriate measure(s) of Ess. It seems that S(·, 1) = exp(H(·)), where H(·) is Shannon’s entropy, is the best choice; cf. Sect. 4 and 5. We also ...
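The relation S(·, 1) = exp(H(·)) quoted above can be illustrated numerically. The following is a minimal sketch, assuming S(·, α) denotes the exponential of the Rényi entropy of order α in natural-log units; the function names `renyi_entropy` and `exp_renyi` are hypothetical and do not come from the source.

```python
import math

def renyi_entropy(probs, alpha):
    """Renyi entropy of order alpha (in nats) of a finite distribution.

    Assumption: alpha = 1 is treated as the Shannon limit.
    """
    probs = [p for p in probs if p > 0]
    if math.isclose(alpha, 1.0):
        # Shannon entropy H = sum p * log(1/p)
        return sum(p * math.log(1 / p) for p in probs)
    return math.log(sum(p ** alpha for p in probs)) / (1 - alpha)

def exp_renyi(probs, alpha):
    """Exponential of the Renyi entropy (assumed reading of S(., alpha))."""
    return math.exp(renyi_entropy(probs, alpha))

if __name__ == "__main__":
    dist = [0.5, 0.25, 0.25]
    for alpha in (0.5, 0.999, 1.0, 1.001, 2.0):
        print(alpha, exp_renyi(dist, alpha))
```

For a uniform distribution on n points this quantity equals n for every α, and for other distributions the values at α close to 1 approach exp(H).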

On fuzzy information theory

... Coding theory is one of the most important and direct applications of information theory. It can be subdivided into source coding theory and channel coding theory. Using a statistical description for data, information theory quantifies the number of bits needed to describe the data, which is the inf ...

Entropy Measures vs. Kolmogorov Complexity

... if this property also holds for Rényi and Tsallis entropies. The answer is no. If we replace H by R or by T , the inequalities (1) are no longer true (unless α = 1). We also analyze the validity of the relationship (1), replacing Kolmogorov complexity by its time-bounded version, proving that it ho ...

A Characterization of Entropy in Terms of Information Loss

... Some examples may help to clarify this point. Consider the only possible map f : {a, b} → {c}. Suppose p is the probability measure on {a, b} such that each point has measure 1/2, while q is the unique probability measure on the set {c}. Then H(p) = ln 2, while H(q) = 0. The information loss associa ...
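The two entropy values in this example can be checked with a short sketch. The helper name `shannon_entropy` is hypothetical, and natural logarithms are assumed, as the value ln 2 suggests.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy in nats of a finite probability distribution."""
    return sum(p * math.log(1 / p) for p in probs if p > 0)

p = [0.5, 0.5]  # each point of {a, b} has measure 1/2
q = [1.0]       # the unique probability measure on {c}

h_p = shannon_entropy(p)
h_q = shannon_entropy(q)

if __name__ == "__main__":
    print("H(p) =", h_p)  # expected to agree with ln 2
    print("H(q) =", h_q)  # expected to be 0
```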

created by shannon martin gracey

... George. Based on an assumed mean weight of 140 lb, the boat was certified to carry 50 people. A subsequent investigation showed that most of the passengers weighed more than 200 lb, and the boat should have been certified for a much smaller number of passengers. A water taxi sank in Baltimore’s inne ...

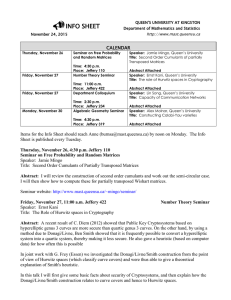

November 24th, 2015

... hyperelliptic genus 3 curves are more secure than quartic genus 3 curves. On the other hand, by using a method due to Donagi/Livne, Ben Smith showed that it is frequently possible to convert a hyperelliptic system into a quartic system, thereby making it less secure. He also gave a heuristic (based ...

A tribute to Claude Shannon - University of Arizona | Ecology and

... 1 ‘The mathematical theory of communication’ by Claude E. Shannon, Bell Telephone Laboratories. This paper is reprinted from the Bell System Technical Journal, July and October, 1948. This is the same paper with some corrections and additional references. 2 ‘Recent contributions to the mathematical ...

Shannon entropy as a measure of uncertainty in positions and

... Shannon entropy does not possess these two properties. For instance, the unit of entropy S (x) (in SI units) is the logarithm of meter. This makes it impossible to check if the continuous Shannon entropy is positive or negative. On the other hand, if we introduce some length (momentum) scale in orde ...

The Mathematics of the Information Revolution Sanjoy K. Mitter

... What we are actually living in is the “data age”, in the sense that massive amounts of data on all aspects of modern life are available and accessible. The so-called Information revolution is yet to come. This distinction between data and information is an important one and needs to be made. We need ...
