Roxy Peck's collection of classroom voting questions for statistics

... 2. The method used to construct the interval will produce an interval that includes the value of the population proportion about 90% of the time in repeated sampling. 3. If 100 different random samples of size 60 from this population were each used to construct a 90% confidence interval, ...

Commonly Used Statistical Packages in R

... moments: Functions to calculate: moments, Pearson's kurtosis, Geary's kurtosis and skewness; tests related to them. MNP: MNP fits the Bayesian multinomial probit model via Markov chain Monte Carlo. The multinomial probit model is often used to analyze the discrete choices made by individuals recorde ...

Lecture 12 Qualitative Dependent Variables

... OLS or reformulate the adequate corresponding test statistics or confidence intervals. For the latter options, we have to adjust standard errors, t, F, and LM statistics so that they are valid in the presence of heteroskedasticity of unknown form. Such a procedure is called heteroskedasticity-robust i ...

PPT

... So, what do the significance tests from this model tell us and what do they not tell us about the model we have plotted? We know whether or not the slope of the Y-X1 regression line = 0 for the mean of X2 (t-test of the X1 weight). We know whether or not the slope of the Y-X1 regression weight chan ...

STK4900/9900 - Lecture 2 Program Comparing two groups

... realizations of random variables, while x1 , … , xn are considered to be fixed (i.e. non-random) and the εi's are random errors (noise) ...

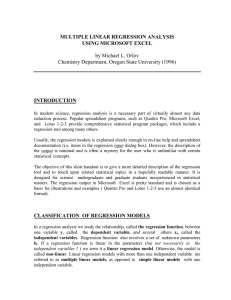

MULTIPLE LINEAR REGRESSION ANALYSIS

... independent variables. Regression function also involves a set of unknown parameters bi. If a regression function is linear in the parameters (but not necessarily in the independent variables!) we term it a linear regression model. Otherwise, the model is called non-linear. Linear regression model ...

Additional Topics on Testing and Bayesian Statistics

... estimation results; how to derive statistical properties of random variables, functions of random variables, and functions of sample data; the characteristics of different probability distributions and major advantages and limitations of using these distributions for statistical or econometric model ...

Linear versus logistic regression when the dependent variable

... explanations of the results from surveys. With a reasonably large sample, random sampling error may often be rejected off hand as a possible cause of a tendency, as long as we are not interested in very weak effects. Secondly it is pertinent to ask how important the objection based on statistical th ...

Dr. Frank Wood - Columbia Statistics

... • The sampling distribution of F* when H0(β = 0) holds can be derived starting from Cochran’s theorem • Cochran’s theorem – If all n observations Yi come from the same normal distribution with mean µ and variance σ², and SSTO is decomposed into k sums of squares SSr, each with degrees of freedom df ...

Linear regression

In statistics, linear regression is an approach for modeling the relationship between a scalar dependent variable y and one or more explanatory variables (or independent variables) denoted X. The case of one explanatory variable is called simple linear regression. For more than one explanatory variable, the process is called multiple linear regression. (This term should be distinguished from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable.)

In linear regression, data are modeled using linear predictor functions, and unknown model parameters are estimated from the data. Such models are called linear models. Most commonly, linear regression refers to a model in which the conditional mean of y given the value of X is an affine function of X. Less commonly, linear regression could refer to a model in which the median, or some other quantile of the conditional distribution of y given X, is expressed as a linear function of X. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of y given X, rather than on the joint probability distribution of y and X, which is the domain of multivariate analysis.

Linear regression was the first type of regression analysis to be studied rigorously, and to be used extensively in practical applications. This is because models which depend linearly on their unknown parameters are easier to fit than models which are non-linearly related to their parameters, and because the statistical properties of the resulting estimators are easier to determine.

Linear regression has many practical uses. Most applications fall into one of the following two broad categories:

If the goal is prediction, forecasting, or error reduction, linear regression can be used to fit a predictive model to an observed data set of y and X values. After developing such a model, if an additional value of X is then given without its accompanying value of y, the fitted model can be used to make a prediction of the value of y.

Given a variable y and a number of variables X1, ..., Xp that may be related to y, linear regression analysis can be applied to quantify the strength of the relationship between y and the Xj, to assess which Xj may have no relationship with y at all, and to identify which subsets of the Xj contain redundant information about y.

Linear regression models are often fitted using the least squares approach, but they may also be fitted in other ways, such as by minimizing the "lack of fit" in some other norm (as with least absolute deviations regression), or by minimizing a penalized version of the least squares loss function as in ridge regression (L2-norm penalty) and lasso (L1-norm penalty). Conversely, the least squares approach can be used to fit models that are not linear models. Thus, although the terms "least squares" and "linear model" are closely linked, they are not synonymous.
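
The fitting and prediction steps described above can be sketched in a few lines of Python with NumPy. This is only an illustrative sketch, not a reference implementation: the function names `fit_ols`, `fit_ridge`, and `predict`, the simulated data, and the choice to leave the intercept unpenalized in the ridge fit are all assumptions made for the example.

```python
import numpy as np

def fit_ols(X, y):
    """Least squares fit of y ~ b0 + X @ b. Returns [b0, b1, ..., bp]."""
    A = np.column_stack([np.ones(len(X)), X])  # prepend an intercept column
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def fit_ridge(X, y, lam):
    """Least squares with an L2 penalty lam on the slopes (assumed: intercept unpenalized)."""
    A = np.column_stack([np.ones(len(X)), X])
    penalty = lam * np.eye(A.shape[1])
    penalty[0, 0] = 0.0  # do not shrink the intercept
    return np.linalg.solve(A.T @ A + penalty, A.T @ y)

def predict(coef, X_new):
    """Predict y for new X values from fitted coefficients."""
    return coef[0] + np.asarray(X_new) @ coef[1:]

# Simulated data (hypothetical): y = 1 + 2*x1 - 3*x2 + noise
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.1, size=200)

ols = fit_ols(X, y)
ridge = fit_ridge(X, y, lam=10.0)
y_hat = predict(ols, X[:5])  # predictions for the first five rows
```

With `lam=0` the ridge fit reduces to ordinary least squares; larger values of `lam` shrink the slope estimates toward zero.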