Ordinal Decision Models for Markov Decision Processes

... concerning rewards and the preference relation they induce over policies. A strictly increasing affine transformation of the reward function does not change the preferences over policies. Lemma 1. For λ > 0 and c ∈ R, the preferences over policies defined by Eq. 2 in (S, A, T, r) and (S, A, T, λr + ...

... concerning rewards and the preference relation they induce over policies. A strictly increasing affine transformation of the reward function does not change the preferences over policies. Lemma 1. For λ > 0 and c ∈ R, the preferences over policies defined by Eq. 2 in (S, A, T, r) and (S, A, T, λr + ...

Getting Started with PROC LOGISTIC

... regression models (those with three or more levels, or categories, of the dependent variable) can be implemented in the SAS System, discussion of these types of models, and how they are implemented in the SAS System, is beyond the scope of this paper and will not be considered ...

... regression models (those with three or more levels, or categories, of the dependent variable) can be implemented in the SAS System, discussion of these types of models, and how they are implemented in the SAS System, is beyond the scope of this paper and will not be considered ...

PowerPoint

... • Completely defines probability distribution over model structures with varying sets of objects • Intuition: Stochastic generative process with two kinds of steps: – Set the value of a function on a tuple of arguments – Add some number of objects to the world ...

... • Completely defines probability distribution over model structures with varying sets of objects • Intuition: Stochastic generative process with two kinds of steps: – Set the value of a function on a tuple of arguments – Add some number of objects to the world ...

Getting Started With PROC LOGISTIC

... dependent variable will achieve the value of one in the population from which the data are assumed to have been randomly sampled. The Odds Ratio Exponentiation of the parameter estimate(s) for the independent variable(s) in the model by the number e (about 2.17) yields the odds ratio, which is a mor ...

... dependent variable will achieve the value of one in the population from which the data are assumed to have been randomly sampled. The Odds Ratio Exponentiation of the parameter estimate(s) for the independent variable(s) in the model by the number e (about 2.17) yields the odds ratio, which is a mor ...

On-line Human Activity Recognition from Audio and Home

... S WEET-H OME [77]. All of them have integrated human activity modelling and recognition in their systems. Most of the progress made in the AR domain came from the computer vision domain [1]. However, the installation of video cameras in the user’s home is not only raising ethical questions [72], bu ...

... S WEET-H OME [77]. All of them have integrated human activity modelling and recognition in their systems. Most of the progress made in the AR domain came from the computer vision domain [1]. However, the installation of video cameras in the user’s home is not only raising ethical questions [72], bu ...

Na¨ıve Inference viewed as Computation

... inference is computationally intractable (Cooper, 1990). For some, this intractability does not vitiate the explanatory value of Bayesian inference viewed as an optimal solution for a cognitive or perceptual problem (e.g., Anderson, 1990). The point is made that such models can be viewed as theories ...

... inference is computationally intractable (Cooper, 1990). For some, this intractability does not vitiate the explanatory value of Bayesian inference viewed as an optimal solution for a cognitive or perceptual problem (e.g., Anderson, 1990). The point is made that such models can be viewed as theories ...

Aalborg Universitet Parameter learning in MTE networks using incomplete data

... Definition 1). Instead we follow the same procedure as for the conditional linear Gaussian distribution: for each of the continuous variables in Y ′ = {Y2 , . . . Yj }, split the variable Yi into a finite set of intervals and use the midpoint of the lth interval to represent Yi in that interval. The ...

... Definition 1). Instead we follow the same procedure as for the conditional linear Gaussian distribution: for each of the continuous variables in Y ′ = {Y2 , . . . Yj }, split the variable Yi into a finite set of intervals and use the midpoint of the lth interval to represent Yi in that interval. The ...

Variational Inference for Dirichlet Process Mixtures

... − Eq [log q(V, η ∗ , Z)] . To exploit this bound, we must find a family of variational distributions that approximates the distribution of the infinite-dimensional random measure G, where the random measure is expressed in terms of the infinite sets V = {V1 , V2 , . . .} and η ∗ = {η1∗ , η2∗ , . . . ...

... − Eq [log q(V, η ∗ , Z)] . To exploit this bound, we must find a family of variational distributions that approximates the distribution of the infinite-dimensional random measure G, where the random measure is expressed in terms of the infinite sets V = {V1 , V2 , . . .} and η ∗ = {η1∗ , η2∗ , . . . ...

Towards common-sense reasoning via conditional

... abstract activity, like the playing of chess, would be best. It can also be maintained that it is best to provide the machine with the best sense organs money can buy, and then teach it to understand and speak English. This process could follow the normal teaching of a child. Things would be pointed ...

... abstract activity, like the playing of chess, would be best. It can also be maintained that it is best to provide the machine with the best sense organs money can buy, and then teach it to understand and speak English. This process could follow the normal teaching of a child. Things would be pointed ...

Delirium superimposed on dementia: defining disease states and

... joining a latent class variable at time ti-2 to the latent class variable at ti . Two further assumptions, not appearing in the graphs of Figure 1, are homogeneity and stationarity. By homogeneity we mean that the relationship between manifest and latent variables does not change in time; in other w ...

... joining a latent class variable at time ti-2 to the latent class variable at ti . Two further assumptions, not appearing in the graphs of Figure 1, are homogeneity and stationarity. By homogeneity we mean that the relationship between manifest and latent variables does not change in time; in other w ...

Probabilistic Latent Variable Model for Sparse

... a small number are combined to represent any particular input. In this section, we present a brief motivation for this concept of sparsity and show how one can incorporate it in the framework of the latent variable model. The idea of sparsity originated from attempts at understanding the general inf ...

... a small number are combined to represent any particular input. In this section, we present a brief motivation for this concept of sparsity and show how one can incorporate it in the framework of the latent variable model. The idea of sparsity originated from attempts at understanding the general inf ...

Probabilistic Sense Sentiment Similarity through Hidden Emotions

... similarity between words to perform IQAP inference and SO prediction tasks respectively. In IQAPs, we employ the sentiment similarity between the adjectives in questions and answers to interpret the indirect answers. Figure 4 shows the algorithm for this purpose. SS(.,.) indicates sentiment similari ...

... similarity between words to perform IQAP inference and SO prediction tasks respectively. In IQAPs, we employ the sentiment similarity between the adjectives in questions and answers to interpret the indirect answers. Figure 4 shows the algorithm for this purpose. SS(.,.) indicates sentiment similari ...

Using Artificial Neural Network to Predict Collisions on Horizontal

... fields including transportation engineering prediction models. The traffic systems’ excessive variables and their complex character make it difficult to predict the results. The actual components of traffic predictive ability may be enhanced through the use of ANN analysis that is able to examine no ...

... fields including transportation engineering prediction models. The traffic systems’ excessive variables and their complex character make it difficult to predict the results. The actual components of traffic predictive ability may be enhanced through the use of ANN analysis that is able to examine no ...

Modelling Dynamic Causal Interactions with Bayesian Networks

... depends on the particular problem. A temporal random variable V in the net can take on a set of values v[i] with i∈{a,…,b,never}, where a and b are instants defining the limits of the temporal range of interest for V. For example, if V represents “being taken to hospital”, V=v[a] expresses that the ...

... depends on the particular problem. A temporal random variable V in the net can take on a set of values v[i] with i∈{a,…,b,never}, where a and b are instants defining the limits of the temporal range of interest for V. For example, if V represents “being taken to hospital”, V=v[a] expresses that the ...

Aalborg Universitet On local optima in learning bayesian networks

... and M1 6= M2 . Two DAGs G1 and G2 are equivalent if they represent the same model, i.e. M (G1 ) = M (G2 ). It is shown by Chickering (1995) that two DAGs G1 and G2 are equivalent iff there is a sequence of covered arc reversals that converts G1 into G2 . A model M is inclusion optimal w.r.t. a joint ...

... and M1 6= M2 . Two DAGs G1 and G2 are equivalent if they represent the same model, i.e. M (G1 ) = M (G2 ). It is shown by Chickering (1995) that two DAGs G1 and G2 are equivalent iff there is a sequence of covered arc reversals that converts G1 into G2 . A model M is inclusion optimal w.r.t. a joint ...

A Novel Connectionist System for Unconstrained Handwriting

... rely on the same hidden Markov models that have been used for decades in speech and handwriting recognition, despite their well-known shortcomings. This paper proposes an alternative approach based on a novel type of recurrent neural network, specifically designed for sequence labelling tasks where ...

... rely on the same hidden Markov models that have been used for decades in speech and handwriting recognition, despite their well-known shortcomings. This paper proposes an alternative approach based on a novel type of recurrent neural network, specifically designed for sequence labelling tasks where ...

Generative Adversarial Structured Networks

... architectures, such as convolutional neural networks [12], conditional models [11, 3], variational autoencoders [1], moment-matching networks [9], and many others. All of the above generate samples by passing noise through the input of a feed-forward neural network. One limitation of this approach i ...

... architectures, such as convolutional neural networks [12], conditional models [11, 3], variational autoencoders [1], moment-matching networks [9], and many others. All of the above generate samples by passing noise through the input of a feed-forward neural network. One limitation of this approach i ...

Indian Buffet Processes with Power-law Behavior

... both the prior of µ and the likelihood of each Zi |µ factorize over disjoint subsets of Θ. Secondly, µ must have fixed atoms at each θk∗ since otherwise the probability that there will be atoms among Z1 , . . . , Zn at precisely θk∗ is zero. The posterior mass at θk∗ is obtained by multiplying a Ber ...

... both the prior of µ and the likelihood of each Zi |µ factorize over disjoint subsets of Θ. Secondly, µ must have fixed atoms at each θk∗ since otherwise the probability that there will be atoms among Z1 , . . . , Zn at precisely θk∗ is zero. The posterior mass at θk∗ is obtained by multiplying a Ber ...

Negation Without Negation in Probabilistic Logic Programming

... “noise” variables N1 , N2 , . . . , NN . Each noise variable appears exactly once as a rule head, in special probabilistic rules called probabilistic facts with the form pi : ni . Other probabilistic rules are actually only syntactic sugar: p : head ← body is short for p : ni and head ← ni ∧ body, w ...

... “noise” variables N1 , N2 , . . . , NN . Each noise variable appears exactly once as a rule head, in special probabilistic rules called probabilistic facts with the form pi : ni . Other probabilistic rules are actually only syntactic sugar: p : head ← body is short for p : ni and head ← ni ∧ body, w ...

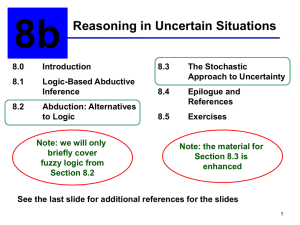

ch08b

... allowing graded beliefs. In addition, it provides a theory to assign beliefs to relations between propositions (e.g., pq), and related propositions (the notion of dependency). ...

... allowing graded beliefs. In addition, it provides a theory to assign beliefs to relations between propositions (e.g., pq), and related propositions (the notion of dependency). ...

Document

... – Concept formation (e.g., what are patterns of genomic instability as measured by array CGH that constitute molecular subtypes of lung cancer capable of guiding development of new treatments?); – Feature construction (e.g., how can mass-spectrometry signals be decomposed into individual variables t ...

... – Concept formation (e.g., what are patterns of genomic instability as measured by array CGH that constitute molecular subtypes of lung cancer capable of guiding development of new treatments?); – Feature construction (e.g., how can mass-spectrometry signals be decomposed into individual variables t ...

PDF

... Heavy-tailed distributions were first introduced by Pareto in the context of income distributions and were extensively studied by Lévy. Until Mandelbrot's work on fractals these types of distributions, also called power-law distributions, were often considered pathological cases. Recently, heavy-tai ...

... Heavy-tailed distributions were first introduced by Pareto in the context of income distributions and were extensively studied by Lévy. Until Mandelbrot's work on fractals these types of distributions, also called power-law distributions, were often considered pathological cases. Recently, heavy-tai ...

CS171 - Intro to AI - Discussion Section 4

... “A25 will get me there on time if there's no accident on the bridge and it doesn't rain and my tires remain intact etc etc.” (A1440 might reasonably be said to get me there on time but I'd have to stay overnight in the airport …) ...

... “A25 will get me there on time if there's no accident on the bridge and it doesn't rain and my tires remain intact etc etc.” (A1440 might reasonably be said to get me there on time but I'd have to stay overnight in the airport …) ...

Structured Regularizer for Neural Higher

... x, i.e. f m−n (yt−n+1:t ; t, x) = [1(yt−n+1:t = a1 ) gm (x, t), . . .]T where gm (x, t) is an arbitrary function. This function maps an input sub-sequence into a new feature space. In this work, we choose to use MLP networks for this function being able to model complex interactions among the variab ...

... x, i.e. f m−n (yt−n+1:t ; t, x) = [1(yt−n+1:t = a1 ) gm (x, t), . . .]T where gm (x, t) is an arbitrary function. This function maps an input sub-sequence into a new feature space. In this work, we choose to use MLP networks for this function being able to model complex interactions among the variab ...

novel sequence representations Reliable prediction of T

... sparse versus the Blosum sequence-encoding scheme constitutes two different approaches to represent sequence information to the neural network. In the sparse encoding the neural network is given very precise information about the sequence that corresponds to a given training example. One can say tha ...

... sparse versus the Blosum sequence-encoding scheme constitutes two different approaches to represent sequence information to the neural network. In the sparse encoding the neural network is given very precise information about the sequence that corresponds to a given training example. One can say tha ...

Hidden Markov model

A hidden Markov model (HMM) is a statistical Markov model in which the system being modeled is assumed to be a Markov process with unobserved (hidden) states. A HMM can be presented as the simplest dynamic Bayesian network. The mathematics behind the HMM was developed by L. E. Baum and coworkers. It is closely related to an earlier work on the optimal nonlinear filtering problem by Ruslan L. Stratonovich, who was the first to describe the forward-backward procedure.In simpler Markov models (like a Markov chain), the state is directly visible to the observer, and therefore the state transition probabilities are the only parameters. In a hidden Markov model, the state is not directly visible, but output, dependent on the state, is visible. Each state has a probability distribution over the possible output tokens. Therefore the sequence of tokens generated by an HMM gives some information about the sequence of states. Note that the adjective 'hidden' refers to the state sequence through which the model passes, not to the parameters of the model; the model is still referred to as a 'hidden' Markov model even if these parameters are known exactly.Hidden Markov models are especially known for their application in temporal pattern recognition such as speech, handwriting, gesture recognition, part-of-speech tagging, musical score following, partial discharges and bioinformatics.A hidden Markov model can be considered a generalization of a mixture model where the hidden variables (or latent variables), which control the mixture component to be selected for each observation, are related through a Markov process rather than independent of each other. Recently, hidden Markov models have been generalized to pairwise Markov models and triplet Markov models which allow consideration of more complex data structures and the modelling of nonstationary data.